Hi

I would like to understand some details about backward propagation.

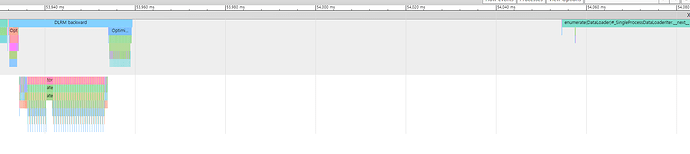

So I used autograd.profiler and looked at the resulting trace.

I found that there is a very large empty gap before the next iteration starts.

To find the cause, I checked how long optimizer.step() takes.

In the profiler, the optimizer step takes 5 ms.

But when I time it myself with the following code,

    torch.cuda.synchronize()
    start = time.time()
    optimizer.step()
    torch.cuda.synchronize()
    end = time.time()

the result is 100 ms.
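For anyone who wants to reproduce the comparison, here is a minimal self-contained sketch of the measurement described above. It is not my real training code: the `Linear` model, the SGD optimizer, the tensor sizes, and the `optimizer_step` label are all placeholders I picked for illustration. It uses `torch.profiler` rather than the older `autograd.profiler` entry point, and it falls back to CPU when no GPU is available, so the numbers will differ from my setup.

```python
import time

import torch
from torch.profiler import ProfilerActivity, profile, record_function

# Hypothetical toy model and data, used only to make the sketch runnable.
device = "cuda" if torch.cuda.is_available() else "cpu"
model = torch.nn.Linear(256, 256).to(device)
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)
inputs = torch.randn(64, 256, device=device)


def sync():
    # Wait for queued GPU work to finish; does nothing on CPU-only machines.
    if device == "cuda":
        torch.cuda.synchronize()


def timed_step():
    """Run one training iteration and return the wall-clock time (ms) of optimizer.step()."""
    optimizer.zero_grad()
    model(inputs).sum().backward()
    sync()  # make sure backward has finished before starting the timer
    start = time.perf_counter()
    with record_function("optimizer_step"):  # labels this region in the trace
        optimizer.step()
    sync()  # make sure the step's kernels have finished before stopping the timer
    return (time.perf_counter() - start) * 1000.0


timed_step()  # warm-up iteration, excluded from the measurement

activities = [ProfilerActivity.CPU]
if device == "cuda":
    activities.append(ProfilerActivity.CUDA)

with profile(activities=activities) as prof:
    wall_ms = timed_step()

print(f"wall-clock optimizer.step(): {wall_ms:.3f} ms")
print(prof.key_averages().table(sort_by="cpu_time_total", row_limit=10))
```

The `optimizer_step` row in the printed table can then be compared directly against the wall-clock number from the same iteration.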

Why doesn't the profiler capture this empty space?