## Motivation

Many fields manipulate collections of Tensors of different shapes…. For example, paragraphs of text, images of different sizes or audio files of different lengths. We don't have a first class abstraction that enables the concurrent manipulation of collections of this type of data. We further often need to batch arbitrary data and operations for efficiency.

## Definition

A NestedTensor, just like a torch.Tensor, has a dtype, layout, device and dimension.

In general, there are two cases for which NestedTensors provide computational representations.

**Lists of Tensors**

Each Tensor constituent of the list it represents, if any, must be of its dtype, layout and device. The dimension of a constituent Tensor must be one less than the dimension of the NestedTensor. An empty list of Tensors yields a NestedTensor of dimension one.

**Lists of NestedTensors**

Each constituent NestedTensor must be of its dtype, layout and device. The dimension of a constituent NestedTensor must be one less than the dimension of the NestedTensor.

You cannot have a list of Tensors and NestedTensors, that is, you cannot mix them.

## Goals and current code

**Convenience:** Remove tedious manual workarounds

Sometimes research can be unblocked by or inspired by convenient abstractions. New code may be built on top of NestedTensors if it's general enough and easy enough to use (discoverable). We want to make sure that we solve existing problems well, but also want it to be general enough to inspire new work.

```

# This does a lot of work to use our awkward packed sequence abstraction

def forward(self, sent_tuple):

# sent_len: [len_1, ..., len_bsize]

# sent: (seqlen x bsize x worddim)

sent, sent_len = sent_tuple

# Sort by length (keep idx)

sent_len_sorted, idx_sort = np.sort(sent_len)[::-1], np.argsort(-sent_len)

sent_len_sorted = sent_len_sorted.copy()

idx_unsort = np.argsort(idx_sort)

idx_sort = torch.from_numpy(idx_sort).cuda() if self.is_cuda() \

else torch.from_numpy(idx_sort)

sent = sent.index_select(1, idx_sort)

# Handling padding in Recurrent Networks

sent_packed = nn.utils.rnn.pack_padded_sequence(sent, sent_len_sorted)

sent_output = self.enc_lstm(sent_packed)[0] # seqlen x batch x 2*nhid

sent_output = nn.utils.rnn.pad_packed_sequence(sent_output)[0]

# Un-sort by length

idx_unsort = torch.from_numpy(idx_unsort).cuda() if self.is_cuda() \

else torch.from_numpy(idx_unsort)

sent_output = sent_output.index_select(1, idx_unsort)

```

**Correctness:** Abstract the subtle details

Many users run into subtle issues when padding and masking manually. We can hide these details and provide an implementation that deals with all these subtleties.

Example 1

```

# Currently users have to worry about setting their masked values to

# the correct number to not influence reductions such as max.

def neginf(dtype):

"""Returns a representable finite number near -inf for a dtype."""

if dtype is torch.float16:

return -NEAR_INF_FP16

else:

return -NEAR_INF

[...]

outputs_of_interest = embedding_layer[:, 1:, :]

# The user carries around a mask to indicate regions that aren't relevant

# This mask needs to be updated for each layer.

mask = (~attention_mask[:, 1:]).type(dtype).unsqueeze(2) * neginf(dtype)

sumed_embeddings = torch.sum(outputs_of_interest * mask, dim=1)

nb_elems = torch.sum(attention_mask[:, 1:].float(), dim=1).unsqueeze(1)

embeddings = sumed_embeddings / nb_elems

# This is a very complicated way of getting torch.mean(outputs_of_interest, dim=1)

# for variably sized entries.

[...]

```

Example 2

```

tensor *= mask.unsqueeze(-1).float()

for i in range(self.n_layers):

# The user manually carries around a mask to indicate regions

# that aren't relevant. Each layer has custom mask support

# which the user had to implement

tensor = self.layers[i](tensor, mask)

# It's very easy to write -1e20 instead of 1e-20, which makes a big difference

divisor = mask.float().sum(dim=1).unsqueeze(-1).clamp(min=1e-20)

output = tensor.sum(dim=1) / divisor

```

**Performance:** Take advantage of sparsity and ease batching

There are obvious gains in writing specialized kernels. RNNs already take advantage of that via packed sequences, which was introduce by a vendor library. We can take advantage of this more broadly via a centralized abstraction. It also allows us to replace for loops or other obviously inefficient constructs.

```

GeneralizedRCNNTransform.forward(self, images, targets=None):

# If the images are small this likely causes resource underutilization.

for i in range(len(images)):

image = images[i]

target = targets[i] if [...]

[...]

image = self.normalize(image)

image, target = self.resize(image, target)

images[i] = image

if targets is not None:

targets[i] = target

image_sizes = [img.shape[-2:] for img in images]

images = self.batch_images(images)

image_list = ImageList(images, image_sizes)

return image_list, targets

```

## Scope of this document

For this first version we're treating NestedTensors effectively as a static container (akin to Python tuples) of its constituent Tensors. That means, any operation for explicit shape manipulation, such as broadcasting or select, are not part of this discussion and will be brought up as part of 0.0.2.

## Python repr() string representation

A NestedTensor of two one-dimensional Tensors of length three and four.

```

nestedtensor([

tensor([8, 1, 3, 4]),

tensor([5, 0, 9])

])

```

A NestedTensor of NestedTensors each containing Tensors of different lengths.

```

nestedtensor([

nestedtensor([

tensor([8, 1, 3, 4]),

tensor([5, 0, 9])

]),

nestedtensor([

tensor([2, 4]),

tensor([5, 0, 9, 10, 2])

])

])

```

## Construction/Conversion

For this discussion we use the Python frontend for notation.

### Construction

```

$ a = [torch.rand(2, 3), torch.rand(4, 5)]

$ b = torch.nestedtensor(a)

```

The constructor also accepts tuples.

```

$ a = (torch.nestedtensor([torch.rand(2, 3)]),

torch.nestedtensor([torch.rand(4, 5)]))

$ b = torch.nestedtensor(a)

```

The level of nesting is inferred from the input. The constructor always copies. Whatever you pass into the constructor will share no data with what the constructor returns. This matches torch.tensor's behavior.

If given a NestedTensor or Tensor it will return a detached copy, which is consistent with the behavior of torch.tensor. Remember that you cannot mix Tensors and NestedTensors within a given list.

### Conversion/unbind()

Converting from NestedTensors to nested tuples

```

$ a = [ \

[torch.rand(2, 3), torch.rand(4, 5)], \

[torch.rand(6, 7)] \

]

$ b = torch.nestedtensor(a)

$ b1 = b.unbind() # Tuple of 2 NestedTensors

$ b2 = b1[0].unbind() # Tuple of 2 Tensors

```

A user can retrieve the constituent Tensors via unbind. Unbind is currently used by torch to turn Tensors into tuples of Tensors. Unbind always returns a tuple of views. We do not (yet support a dimension argument to unbind for NestedTensors, because it forces us to argue about shape.

## Operator generalization

Operator generalization is the process of expanding input constraints and semantics to NestedTensors. Note that this also includes features such as aliasing (creating views). Every Tensor operator, if meaningfully generalizable, may be implemented or must be flagged as not implemented and raise an exception.

We directly add support for NestedTensors to operations in torch and there will be no specialized package.

As a general policy, we will be conservative in the operations we support and introduce them slowly for this more general case. In some cases the advanced semantics are obvious, in others they will be heavily driven by potential applications and current issues.

### Generalization strategy

We can find that many operations of torch.Tensor can be expressed as a combination of others. We can minimize the amount of work we need to do in order to get good operator coverage by identifying core operations and generalizing them. This will then also act as a forcing function to yield sane and consistent semantics, because the combination of these core operations will also imply combined semantics and input constraints.

## Core operations

### nested_size()

As motivation let us consider as an example of two paragraphs of word ids.

```

$ words = \

[

[

tensor([0, 2, 4, 6]),

tensor([1, 3, 5])

],

[

tensor([7, 9]),

tensor([11]),

tensor([13, 15, 17, 19]),

]

]

$ words = torch.nestedtensor(words)

$ words.nested_size()

(

(

torch.Size([4]),

torch.Size([3])

),

(

torch.Size([2]),

torch.Size([1]),

torch.Size([4])

)

)

```

nested_size() returns a container of torch.Sizes just like NestedTensor is a container of torch.Tensors. We could also introduce a torch.NestedSize for debatable benefit, but increased consistency.

Note: Someone might call a dimension ragged or batched if for a given dim `size(dim)` doesn't match across all Tensor constituents. We say that this dimension is heterogeneous.

### nested_dim()

```

$ words.nested_dim()

(

(1, 1),

(1, 1, 1)

)

```

Similar to nested_size, nested_dim returns a nested representation. Due to the constraints on the constituent Tensors all numbers will be equal, but this might change in the future if we relax some of them.

## Tensor-wise ops

### Definition

Let's define `z = f(Tensor x_1, Tensor x_2,..., Tensor x_n)` to be an operation on n Tensors. Let's say data is a list of n valid inputs to the constructor.

```

# data is a list that represents inputs to our constructors

# Example:

# data = [(torch.rand(2, 3), torch.rand(5, 4)), (torch.rand(7, 9),)]

inputs = [torch.nestedtensor(data_i) for data_i in data]

def tw_f(inputs, f):

result = []

if isinstance(inputs[0], torch.Tensor):

return f(*inputs)

else:

result = []

# Turn this list of NestedTensors into a list of NestedTensor constituents

unbound_inputs = [input.unbind() for input in inputs]

for i in range(len(inputs[0])):

result.append(tw_f([unbound_input[i] for unbound_input in unbound_inputs], f))

return torch.nestedtensor(result)

result = tw_f(inputs, f)

```

We call an operator *tw(f) to be tensor-wise with respect to f* if it is, semantically, implemented via above code.

**We impose two input constraints for this operation (for now).**

1. The resulting list of Tensors or NestedTensors (called result in the above snippet) need to form a valid NestedTensor. This typically requires that all n input NestedTensors be of the same dtype, layout and dimension for that construction to succeed. We make this a strict requirement.

2 Each NestedTensor constructed from data_i must have the same nesting structure. This means nested_dim must match across all n input NestedTensors.

### Example: torch.mm

```

$ a = torch.nestedtensor(

[

[

rand(3, 5)

],

[

rand(1, 8),

rand(7, 3)

]

])

$ b = torch.nestedtensor(

[

[

rand(5, 3)

],

[

rand(8, 2),

rand(3, 4)

]

])

$ torch.matmul(a, b).nested_size()

(

(

torch.Size([3, 3]),

),

(

torch.Size([1, 2]),

torch.Size([7, 4])

)

)

```

We can think of the NestedTensor version of mm as the tensor-wise operation based on mm.

**List of supported tensor-wise operations**

What follows is a list of categories of operations we consider tensor-wise. All of these operations may be implemented as part of 0.0.1. Some of these stand in place for Python class methods (such as __eq__) and may also double for a module level function, such as torch.add, and method function, such as torch.Tensor.add_.

1. Unary point-wise operations such as add, cos and digamma

2. Binary operations such as add and cmul, but limited to working without Tensor broadcasting

2. mm, mv, addmm and other BLAS operations

4. spectral operations such as fft and ifft

5. specific nn layers such as Embedding/EmbeddingBag or RNN

If there is any doubt whether we want to treat these functions as tensor-wise in the future, we should not implement them as such for now.

Note that we explicitly punt on broadcasting semantics within this RFC simply because they can be viewed as implicit shape manipulation of a NestedTensor (even if it's only of a Tensor constituent) and we want to discuss that separately.

As set of heuristics for inclusion in this list is

1. The operator is independent of the sizes of the Tensor inputs (e.g. torch.cos)

2. The operator does not care about the sizes of the Tensor inputs as long as they match (e.g. torch.add)

3. The operator currently does not have batching or broadcasting semantics (e.g. torch.mm or torch.fft)

4. The operator currently has ad-hoc or awkward variable length input support that can be replaced by NestedTensors

## Example applications

### Embedding

```

def embedding_bag(

input,

weight,

offsets=None,

max_norm=None, norm_type=2,

scale_grad_by_freq=False, mode='mean',

sparse=False, per_sample_weights=None)

```

Currently, if input is of size (B, N) it will be treated as B sequences of length N. The offsets are ignored. The function returns a Tensor of size (B, em), where em is the embedding dimension. If input is 1d, then offsets is used to slice up the input vector. The offsets are currently used to emulate variable length sequences.

Using NestedTensor we can simply do away with offsets. The input is a 2d NestedTensor of size ((n_1,), (n_2,), ..., (n_k,)) and the output will be of size ((em,), (em,), ..., (em,)). This is the tensor-wise operation based on EmbeddingBag applied to a (1, N) Tensor. If there are B Tensor constituents in the input NestedTensor we will simply return a torch.Tensor of size (B, em).

### RNNs

A NestedTensor of 1-dimensional Tensors can be converted into a PackedSequence. RNN functions accept PackedSequences. As such, we can treat NestedTensors as a replacement for PackedSequence with the additional benefits of supporting empty Tensor constituents and entries not sorted by length. PackedSequence is another example of ad-hoc support for variable length sequences, however via a more sophisticated, separate class.

### cross_entropy

```

cross_entropy(

input,

target,

weight=None,

size_average=None,

ignore_index=-100,

reduce=None,

reduction='mean')

```

cross_entropy has implicit support for variable length data via the ignore_index flag. We can implement support for variable length inputs explicitly via NestedTensors, if we treat it as a tensor-wise operation based on cross_entropy for a single sequence.

## Implementation

### List of NestedTensors

Lists of NestedTensors can be implemented by adding metadata on top of a NestedTensor of Tensors. As such they can be implemented separately or even concurrently with future work. We may also restrict ourselves to a few levels of nesting to ease implementation.

### List of Tensors

The simplest implementation implements a NestedTensor of Tensors via a list of Tensors. Operations are implemented via for-loops using existing implementations or via simple extensions.

### List of Tensors: Python list

**Advantages**

- Simplest implementation

- May benefit from for-loop JIT optimizations

**Disadvantages**

- Does not take advantage of inherent parallelism. Very slow.

- Really only useful as a prototype

### Lists of Tensors: Data tensor plus mask tensor

We may start out with a dense tensor and a mask tensor. For performance concerns we may raise a warning on low load factors.

**Advantages**

- All pointwise n-ary operations are immediately implemented by applying them to the data tensor.

- Many torch.nn operations are implemented in torch methods and functions, which yields immediate coverage.

- Many operations can be implemented in terms of others, e.g. BLAS in terms of matmul and index_select via masked_select.

- Autograd support comes out of the box, since this can be written was a wrapper.

- In many cases an existing user implementation uses padding plus masking, so a replacement doesn't yield a performance regression, but surely makes it easier for the user and more widely available.

- Any dtype, layout and device is immediately supported.

- Vendor libraries that require torch.Tensor for high performance operations such as matrix multiply still can be used.

- Provides a central location for various existing implementations that use padding and masking.

**Disadvantages**

- Doesn't take advantage of sparsity and adds a lot of memory overhead due to the mask.

- Cannot support a relaxation of the dtype, layout or device constraints, because there has to be one underlying data Tensor.

### List of Tensors: at::TensorList

**Advantages**

- [at::TensorList](https://github.com/pytorch/pytorch/blob/65b00aa5972e23b2a70aa60dec5125671a3d7153/aten/src/ATen/core/Type.h#L39) is a C++ structure already in place and used

- We can use TensorList as a dispatch mechanism to specialized kernels for a vector of Tensors (as a list of views) and without moving any data around.

- Can use nested parallelism or other constructs to parallelize across constituents as a default implementation.

**Disadvantages**

- TensorList requires the allocation of a lot of Tensor structs, which can come with allocation overhead.

- Does not allow to (easily) treat underlying memory as a single flat buffer, which might prohibit using existing operations without significant changes or a (parallelized) for-loop

### List of Tensors: Dense Packed data layout

Eventually we will want to use a packed data layout to benefit from sparsity. For this we discuss two cases.

1. A NestedTensor of Tensors

2. A NestedTensor of NestedTensors

We will restrict ourselves to NestedTensors with dense layout, regular C dtype and allocated on a device with pointer arithmetic (e.g. CPU or CUDA GPU).

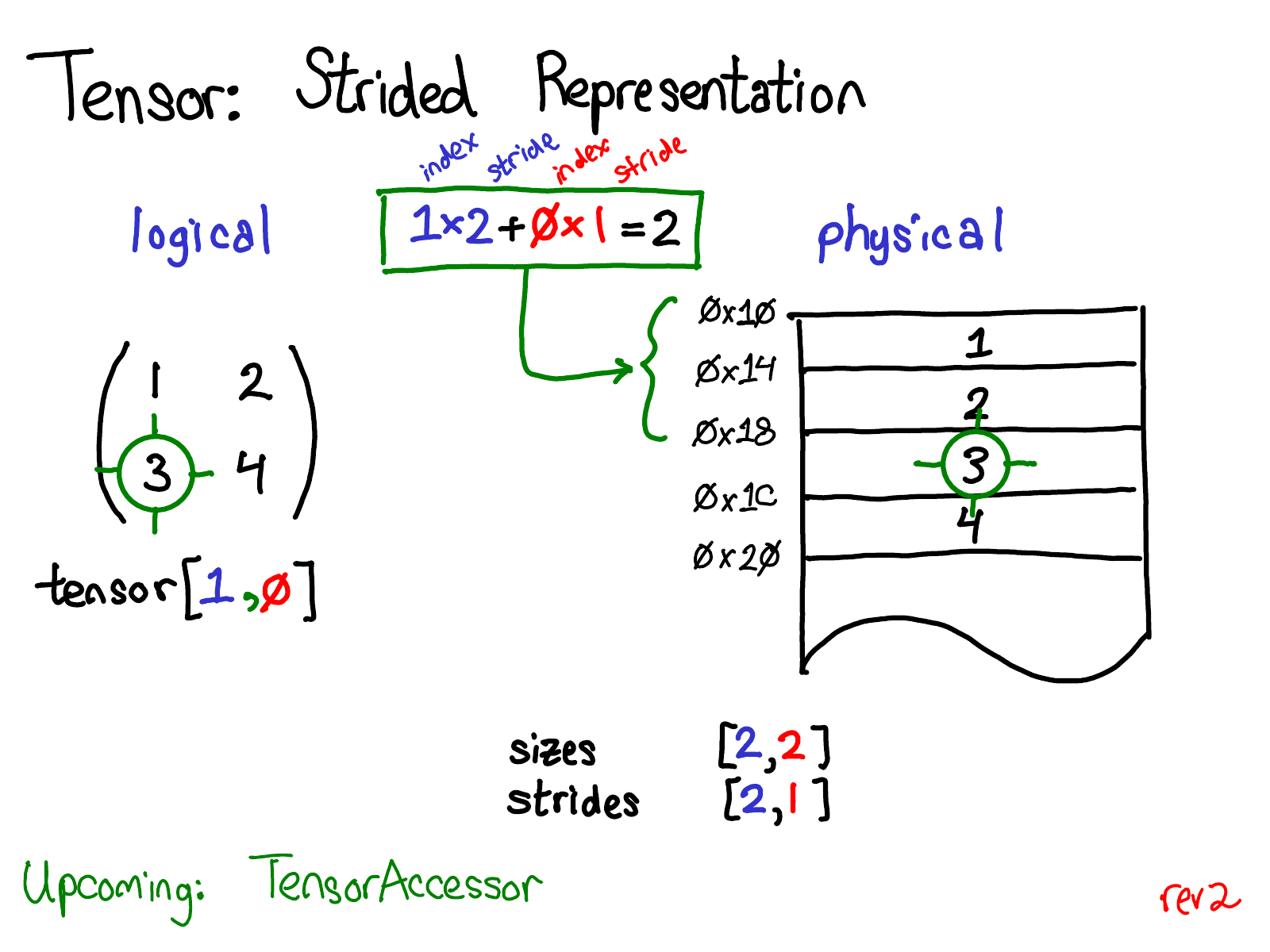

See above for a visual refresher on our memory layout for dense Tensors. For more information and credit for this picture go to http://blog.ezyang.com/2019/05/pytorch-internals/.

**Case 1:**

Let's consider a NestedTensor called t of the following form.

```

nestedtensor([

tensor([...]),

tensor([...]),

...

tensor([...])

])

```

Let's say there are K Tensors and let's call the kth Tensor in this sequence t_k. We can characterize its size by `(n_k^1, n_k^2, ..., n_k^N)` and strides `(s_k^1, s_k^2, ..., s_k^N)`. In concordance with torch.Tensor all strides are non-negative. Call the pointer to the underlying memory data_k. Each logical datapoint maps to a datapoint in the corresponding memory, but two logical data points may map to the same if e.g. the strides are 0.

For each logical element of t_k there exists a unique sequences of indices `(i_k^1, i_k^2, ..., i_k^N)` that maps to the underlying memory location via `data_k + \sum_{n = 1}^{N} i_k^n * s_k^n`. The maximum offset is `s_k^0 = \sum_{i = 1}^{N} i_k^n * s_k^n`.

Pick one logical element x of t. WLOG we can assume x is a part of t_k. Then we can map to the underlying data via `data_k + \sum_{n = 1}^{N} i_k^n * s_k^n` and associate index `(k, i_k^1, i_k^2, ..., i_k^N)`.

Now ,to have a packed data representation we can, during construction or other times, union the memory of each data_k into a single contiguous memory location pointed to by data. Then we can define a mapping from each logical element with index `(k, i_k^1, i_k^2, ..., i_k^N)` via `data + \sum_{i = 0}^{k - 1} s_i^0 + \sum_{n = 1}^{N} i_k^n * s_k^n` to the underlying data.

In conclusion, we simply unify the underlying data into a single memory region. We only need K further values via `s_0^k` to store the offsets and implement this memory layout. This also makes it much easier to provide views and adopt current implementations for regular Tensors. An initial implementation might not unify the data and simply stores a vector of Tensors with metadata to represent the nesting on top.

**Case 2:**

Consider a NestedTensor of the following form.

```

nestedtensor([

nestedtensor([...]),

nestedtensor([...]),

...

nestedtensor([...])

])

```

Let's say there are K NestedTensors and let's call the kth NestedTensor in this sequence nt_k. We can then proceed just as we did with our Tensors and introduce a new index i_k^0 with corresponding offset s_k^0 that equals the maximum offset of each constituent.

**A note on metadata**

However, note that adding another level of nesting due to additional NestedTensors does not change the amount of underlying allocated memory. It simply increases the amount of metadata which is used to represent the structure on top of the collection of Tensors. We might, in the future, want to combat this by introducing homogeneity annotations per dimension (e.g., nested_size() must return the same number for each torch.Size at a given dimension). This will allow us to store a single stride instead of one stride per constituent and might also make it easier to leverage existing kernels. We can also use this to compress the metadata we need to store for the Tensor constituents.

**Advantages**

- Due to our homogeneity constraint on dtype, device and layout much of the current code should be easy to extend. In particular, TensorIterators should be easy to extend for pointwise operations with additional striding information (vector of vector of strides)

- Might concurrently resolve discussions around blocked memory supports (vector of vector of strides)

**Disadvantages**

- Requires deep integration and possibly extension of core structures

- We can run into issues with allocation overhead if we store this as a std::vector of Tensors.

- Some vendor library operations require regular torch.Tensors. High performance operations such as matrix multiplication might require new kernels, but this may be eased with homogeneity annotations.

## Next steps

As next steps we can implement a basic prototype located in torch.prototype.nestedtensor. It'll be a Python wrapper around two torch.Tensors, one data Tensor and one mask Tensor. It allows construction from a list of torch.Tensors, i.e. no nesting. Dispatch is implemented via monkey-patching torch on import of torch.prototype.nestedtensor.torch and using isinstance to distinguish between torch.Tensor and torch.NestedTensor. It also comes with a test suite within test/test_nestedtensor.py.