I have trained a deep learning model with a U-Net architecture in Python and PyTorch to segment nuclei. I would like to load this pretrained model and run predictions in C++, so I exported a traced TorchScript file (with the `.pt` extension). Then I ran this code:

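```cpp
#include <torch/script.h>
#include <torch/torch.h>
#include <opencv2/opencv.hpp>

#include <memory>
#include <vector>

using namespace cv;

int main(int argc, const char* argv[]) {
    // Load the test image as 8-bit, 3-channel (OpenCV stores it as interleaved BGR, HWC order).
    Mat image = imread("C:/Users/Sercan/PycharmProjects/samplepyTorch/test_2.png", IMREAD_COLOR);

    // Load the traced model (LibTorch 1.0 API: load() returns a shared_ptr) and move it to the GPU.
    std::shared_ptr<torch::jit::script::Module> module = torch::jit::load("C:/Users/Sercan/PycharmProjects/samplepyTorch/epistroma_unet_best_model_trace.pt");
    module->to(torch::kCUDA);

    // Wrap the raw image buffer as a {1, 3, rows, cols} byte tensor, then convert it to float.
    std::vector<int64_t> sizes = { 1, 3, image.rows, image.cols };
    torch::TensorOptions options(torch::ScalarType::Byte);
    torch::Tensor tensor_image = torch::from_blob(image.data, torch::IntList(sizes), options);
    tensor_image = tensor_image.toType(torch::kFloat);

    // Run inference, bring the output back to the CPU and apply a sigmoid.
    auto result = module->forward({ tensor_image.to(at::kCUDA) }).toTensor();
    result = result.squeeze().cpu();
    result = at::sigmoid(result);

    // Wrap the float output in a cv::Mat (no copy) and save it.
    cv::Mat img_out(image.rows, image.cols, CV_32F, result.data<float>());
    cv::imwrite("img_out.png", img_out);

    return 0;
}
```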
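A likely cause of the mismatch is the input layout. `cv::imread` returns pixels interleaved as B, G, R for each pixel (HWC order), while the `from_blob` call labels that same buffer `{1, 3, rows, cols}`. The first "channel" the network sees is therefore the interleaved B, G, R bytes of the top third of the image, not a red plane. The sketch below shows, on a plain byte buffer, the reordering the model needs: split the interleaved pixels into separate R, G and B planes (CHW). It assumes, hypothetically, that training used RGB inputs scaled to [0, 1] (which is what torchvision's `ToTensor` produces); if the Python pipeline also subtracted a mean or divided by a standard deviation, the same normalization has to be applied here. The function name `bgr_hwc_to_rgb_chw` is illustrative only.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Convert an OpenCV-style 8-bit BGR image stored row by row in HWC order
// (B,G,R,B,G,R,...) into a float RGB buffer in CHW order, i.e. the
// {1, 3, rows, cols} layout a model traced on NCHW input expects.
// ASSUMPTION: training used RGB inputs scaled to [0, 1]; adjust the scaling
// and add mean/std normalization to match the actual Python preprocessing.
std::vector<float> bgr_hwc_to_rgb_chw(const std::vector<std::uint8_t>& src,
                                      int rows, int cols) {
    const std::size_t plane = static_cast<std::size_t>(rows) * cols;
    assert(src.size() == plane * 3);  // 3 interleaved bytes per pixel
    std::vector<float> dst(plane * 3);
    for (std::size_t p = 0; p < plane; ++p) {
        const std::uint8_t* px = &src[p * 3];  // one B,G,R pixel
        dst[0 * plane + p] = px[2] / 255.0f;   // R plane
        dst[1 * plane + p] = px[1] / 255.0f;   // G plane
        dst[2 * plane + p] = px[0] / 255.0f;   // B plane
    }
    return dst;
}
```

In LibTorch the same effect is usually obtained without a manual loop: convert the image with `cv::cvtColor(image, image, cv::COLOR_BGR2RGB)`, create the tensor with `torch::from_blob(image.data, {1, image.rows, image.cols, 3}, torch::kByte)`, then call `.permute({0, 3, 1, 2})`, `.toType(torch::kFloat)` and `.div(255)` before moving it to the GPU.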

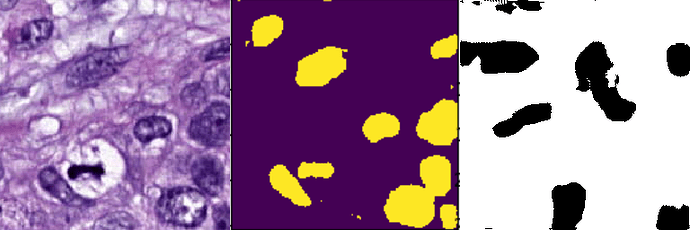

Image outputs (first image: test image; second image: Python prediction result; third image: C++ prediction result):

As you can see, the C++ prediction does not match the Python prediction. Could you suggest how to fix this?
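The output side can also distort the result. After `sigmoid` the tensor holds probabilities in [0, 1], but `cv::imwrite` converts a `CV_32F` image to 8-bit before encoding a PNG, so those values are saved as intensities 0 or 1 and the file looks almost black. The sketch below works on a plain float buffer and uses two hypothetical helpers: `logits_to_gray8` scales each probability to 0..255, and `logits_to_mask` produces a hard 0/255 mask. It assumes the network emits a single logit channel per pixel, which is what the `squeeze()` plus `sigmoid` in the code implies; a model with two output channels would need a softmax or argmax over the channel dimension instead. The 0.5 threshold is likewise an assumption.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Logistic sigmoid: maps a raw logit to a probability in [0, 1].
float sigmoid(float x) { return 1.0f / (1.0f + std::exp(-x)); }

// Soft output: probability scaled to 0..255 so it stays visible as 8-bit.
std::vector<std::uint8_t> logits_to_gray8(const std::vector<float>& logits) {
    std::vector<std::uint8_t> out(logits.size());
    for (std::size_t i = 0; i < logits.size(); ++i) {
        out[i] = static_cast<std::uint8_t>(std::lround(sigmoid(logits[i]) * 255.0f));
    }
    return out;
}

// Hard mask: 255 where the probability reaches `threshold`, else 0.
// ASSUMPTION: 0.5 mirrors the Python thresholding; change it if needed.
std::vector<std::uint8_t> logits_to_mask(const std::vector<float>& logits,
                                         float threshold = 0.5f) {
    assert(threshold > 0.0f && threshold < 1.0f);
    std::vector<std::uint8_t> out(logits.size());
    for (std::size_t i = 0; i < logits.size(); ++i) {
        out[i] = static_cast<std::uint8_t>(sigmoid(logits[i]) >= threshold ? 255 : 0);
    }
    return out;
}
```

With OpenCV the soft version corresponds to `img_out.convertTo(mask8, CV_8U, 255.0)` followed by `cv::imwrite("img_out.png", mask8)`. It may also be worth confirming that the model was put in `model.eval()` before calling `torch.jit.trace`, since tracing records dropout and batch-norm behavior in whatever mode the model was in at that moment.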