I am studying how torch.matmul runs on an NVIDIA GPU.

### variable creation

a = torch.randn(size=(1, 128, 3), dtype=torch.float32).to("cuda")

b = torch.randn(size=(1, 3, 32), dtype=torch.float32).to("cuda")

### execution

c = torch.matmul(a,b)

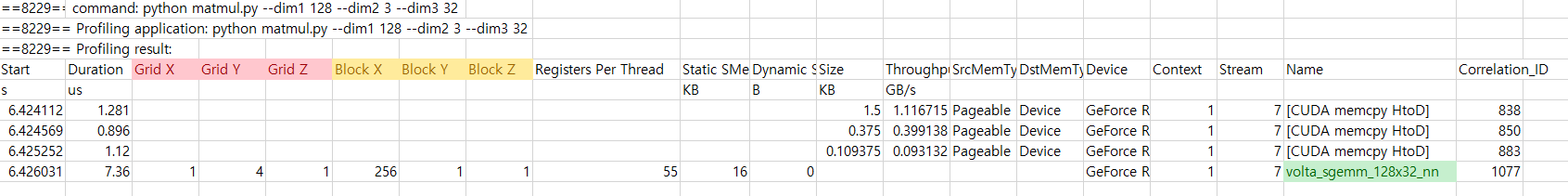

I profiled this code with PyProf, which gave me the result shown in the image above.

There are several things in it that I do not understand.

- What does sgemm_128_32 mean? I understand that the ‘s’ in sgemm stands for single precision and that ‘gemm’ means general matrix multiplication, but I don’t know what 128_32 means. My output matrix is 128 by 32. However, I also know that CUTLASS optimizes SGEMM using outer products (see ref 1, although I could not really follow that article).

(1) Does 128_32 simply mean the output matrix’s dimensions? (2) Is there any way to find out how my output matrix (c in my code) is actually calculated? For example, are there 128*32 threads in total, with each thread responsible for one output element computed as an inner product?
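To make the two possibilities in question (2) concrete, here is a minimal pure-Python sketch of the same 128x3 by 3x32 product computed two ways: as one inner product per output element, and as a sum of K rank-1 outer products. The function names are made up for illustration, and neither version is meant to describe what sgemm_128_32 actually does. The sketch only shows that both formulations produce the same C.

```python
import random

def matmul_inner(a, b):
    """C[i][j] = sum over p of A[i][p] * B[p][j]: one inner product per output element."""
    m, k, n = len(a), len(b), len(b[0])
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(n)]
            for i in range(m)]

def matmul_outer(a, b):
    """C = sum over p of outer(A[:, p], B[p, :]): one rank-1 update of all of C per p."""
    m, k, n = len(a), len(b), len(b[0])
    c = [[0.0] * n for _ in range(m)]
    for p in range(k):              # loop over the shared dimension K first
        for i in range(m):
            for j in range(n):
                c[i][j] += a[i][p] * b[p][j]
    return c

random.seed(0)
A = [[random.gauss(0.0, 1.0) for _ in range(3)] for _ in range(128)]   # 128 x 3
B = [[random.gauss(0.0, 1.0) for _ in range(32)] for _ in range(3)]    # 3 x 32

C_inner = matmul_inner(A, B)
C_outer = matmul_outer(A, B)

# Largest element-wise difference between the two results.
max_diff = max(abs(x - y)
               for row_i, row_o in zip(C_inner, C_outer)
               for x, y in zip(row_i, row_o))
print(len(C_inner), len(C_inner[0]), max_diff)
```

The only difference between the two functions is the loop order: the inner-product version finishes one element of C at a time, while the outer-product version touches every element of C once per step of K.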

- Why do the Grid and Block each have 3 dimensions, and how are the grid and block used for sgemm_128_32? The Grid consists of x, y, z, and the Block consists of x, y, z. (1) Why are 3 dimensions needed? (2) In the picture above, Block X is 256. Does this mean each block has 256 threads along x? (3) Grid Y is 4. Does this mean there are 4 blocks along the grid's y dimension?

- Using that PyProf result, can I figure out how many SMs are used, and how many warps are active on each of those SMs?
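To make the last two bullets concrete, here is a small sketch of the arithmetic I believe applies to a launch configuration. Only Block X = 256 and Grid Y = 4 come from the profile. Every other dimension is assumed to be 1, the SM count of 80 is a placeholder for whatever GPU is actually used, and the helper name launch_summary is made up. The SM figure is only an upper bound: a block runs on a single SM, so a kernel cannot occupy more SMs than it has blocks, but the actual placement is decided by the hardware scheduler and cannot be recovered from these numbers alone.

```python
from math import prod

WARP_SIZE = 32  # threads per warp on NVIDIA GPUs

def launch_summary(grid, block, sm_count):
    """Derive block, thread, and warp counts from (x, y, z) grid and block dimensions."""
    blocks = prod(grid)                      # grid.x * grid.y * grid.z
    threads_per_block = prod(block)          # block.x * block.y * block.z
    # Round up: a partially filled warp still occupies a whole warp.
    warps_per_block = (threads_per_block + WARP_SIZE - 1) // WARP_SIZE
    return {
        "blocks": blocks,
        "threads_per_block": threads_per_block,
        "total_threads": blocks * threads_per_block,
        "warps_per_block": warps_per_block,
        "total_warps": blocks * warps_per_block,
        # Upper bound only: each block is resident on exactly one SM.
        "max_sms_used": min(blocks, sm_count),
    }

# Assumed values: grid X, grid Z, block Y, block Z = 1; sm_count = 80 is a placeholder.
summary = launch_summary(grid=(1, 4, 1), block=(256, 1, 1), sm_count=80)
for name, value in summary.items():
    print(f"{name}: {value}")
```

Under these assumptions the launch would have 4 blocks of 256 threads, which is 1024 threads in total rather than 128*32 = 4096, so one thread per output element would not fit this configuration.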

Thank you.

ref 1 : https://developer.nvidia.com/blog/cutlass-linear-algebra-cuda/