I'm trying to build a custom VGG16, and the architecture is as follows:

    class CustomVGG16(torch.nn.Module):
        def __init__(self):
            super(CustomVGG16, self).__init__()
            self.vgg = torchvision.models.vgg16_bn(pretrained=True)
            self.vgg.classifier[-1].out_features = 25
            self.softmax = torch.nn.Softmax()

        def forward(self, x):
            x = self.vgg(x)
            x = self.softmax(x)
            return x

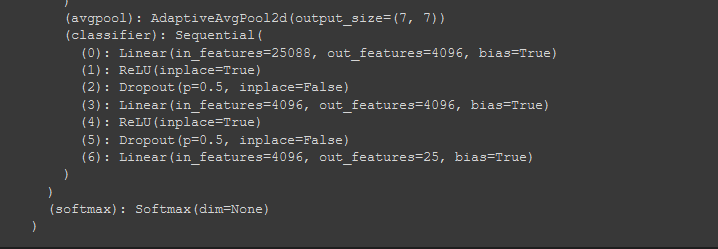

As it can be seen that I have changed the output features in last layer to 25 from 1000. as it is verified by the model architecture:

So the model is supposed to give 25 outputs ( n x 25 ) right?

But when I run outputs = model(inputs), the shape of outputs is (6, 1000), where 6 is the batch size. Since I changed 1000 to 25, I expected (6, 25). What could be the reason?
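A quick way to check what is going on, as a minimal sketch: it uses a small stand-in for the classifier (the layer sizes 32 and 16 are made up for illustration, not taken from VGG16) and compares assigning out_features with replacing the last layer by a new torch.nn.Linear. As far as I understand, out_features is only a stored attribute that print(model) displays, while the forward pass uses the weight tensor, whose shape does not change on assignment.

```python
import torch
import torch.nn as nn

# Hypothetical small stand-in for the end of vgg.classifier; only the
# final 1000-way layer mirrors the real model.
classifier = nn.Sequential(nn.Linear(32, 16), nn.ReLU(), nn.Linear(16, 1000))

# Assigning the attribute changes what print(classifier) shows,
# but the weight tensor keeps its original shape.
classifier[-1].out_features = 25
shape_after_attribute_edit = tuple(classifier[-1].weight.shape)

x = torch.randn(6, 32)          # batch of 6, as in the question
out_before = classifier(x)      # still 1000 columns

# Replacing the layer allocates new parameters of the requested size.
in_features = classifier[-1].in_features
classifier[-1] = nn.Linear(in_features, 25)
out_after = classifier(x)       # now 25 columns

print(shape_after_attribute_edit, out_before.shape, out_after.shape)
```

Applied to the model above, the analogous change would presumably be to replace self.vgg.classifier[-1] with a new torch.nn.Linear built from its in_features, instead of assigning out_features.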

My training loop:

    running_loss = 0.0
    accuracy = []
    val_accuracy = []
    model = model.to(device)
    scheduler = torch.optim.lr_scheduler.StepLR(optimizer, 20, gamma=0.1)

    for ep in range(epoch):
        correct = 0
        total = 0
        start_time = time.time()

        for i, data in enumerate(train_loader):
            inputs, labels = data
            inputs, labels = inputs.to(device), labels.to(device)

            optimizer.zero_grad()
            outputs = model(inputs)
            print(inputs.shape)

            loss = criterion(labels, outputs)
            loss.backward()
            optimizer.step()

            running_loss += loss.item()
            correct += (outputs.argmax(1) == labels).float().sum()
            total += len(labels)

        accuracy_local = (correct / total)*100
        accuracy_local = accuracy_local.data.cpu().numpy()
        accuracy.append(accuracy_local)

        val_acc = valid(model,criterion,optimizer,val_loader)
        val_accuracy.append(val_acc)

        scheduler.step()
        print('EPOCH: {:} Accuracy: {:.2f}% Val_Accuracy: {:.2f}% Time: {:.2f} seconds'.format(ep, accuracy_local, val_acc, time.time() - start_time))

The criterion is torch.nn.BCEWithLogitsLoss.
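For reference, a small sketch of how the two common loss functions are called. PyTorch loss modules take (input, target) in that order, and BCEWithLogitsLoss expects raw logits (no softmax applied beforehand) plus float targets with the same shape as the logits. The tensors below are random placeholders, and the assumption that labels are class indices comes only from the outputs.argmax(1) == labels comparison in the loop.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
logits = torch.randn(6, 25)             # placeholder raw model outputs
labels = torch.randint(0, 25, (6,))     # placeholder class indices

# Single-label, multi-class case: integer class indices as targets.
ce_loss = nn.CrossEntropyLoss()(logits, labels)

# BCEWithLogitsLoss: targets must be float and shaped like the logits,
# so the class indices are one-hot encoded first.
one_hot = nn.functional.one_hot(labels, num_classes=25).float()
bce_loss = nn.BCEWithLogitsLoss()(logits, one_hot)

print(ce_loss.item(), bce_loss.item())
```

Both calls pass the model output first and the target second, which is the reverse of criterion(labels, outputs) in the loop above.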