Hi PyTorch Gurus,

I found a similar question, but it was left unanswered: Training an ensemble model with and without saperately

I believe I have a similar question.

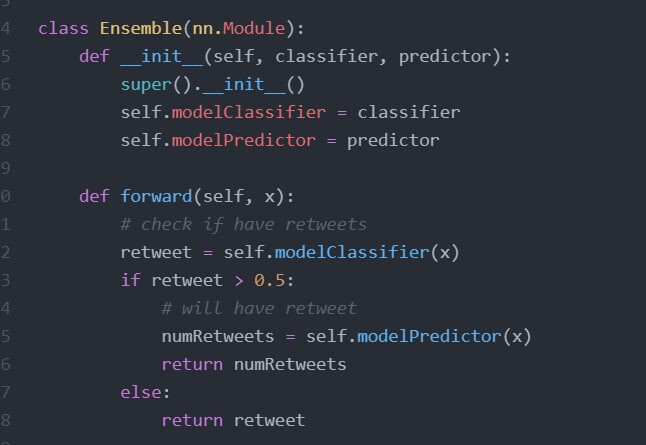

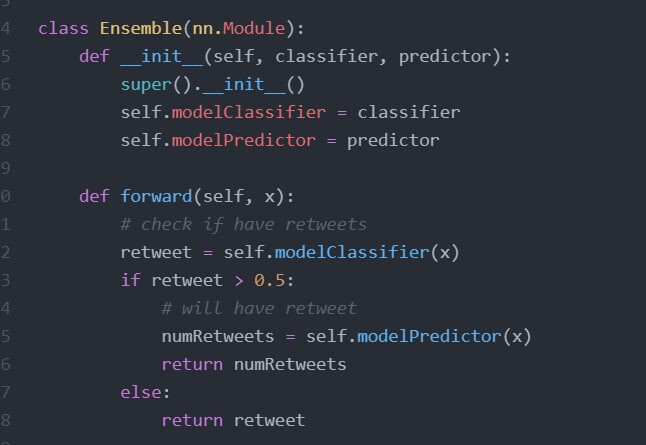

I would like to combine two models that I have already trained into an ensemble. The classifier is in charge of predicting whether there will be retweets or not (basically a 0 or 1). The predictor was trained only on retweet data and will only be used if the classifier returns a 1.

However, I have some issues with the forward function of this “Ensemble” model due to batch size problems. Setting batch_size=1 works as a workaround, but it is very inefficient and slow. Could I ask for advice and suggestions on how to keep a large batch_size?

With a batch_size larger than 1, it raises an ambiguity error: “RuntimeError: bool value of Tensor with more than one value is ambiguous”

As the error message states, you cannot use an if condition on a tensor holding multiple values, so you would need to split the tensor instead.

Assuming you want to pass the values matching the condition to self.modelPredictor and return the others, you could apply this workflow:

    # output from previous layer
    x = torch.randn(10, 1)

    # create condition
    idx = x > 0.

    # split the data based on condition
    x_true = x[idx]
    x_false = x[~idx]

    # check shapes (the exact sizes depend on the random values)
    print(x_true.shape)   # e.g. torch.Size([3])
    print(x_false.shape)  # e.g. torch.Size([7])

    # apply special path for True values
    out_true = self.modelPredictor(x_true)

    # concatenate outputs
    out = torch.zeros_like(x)
    out[idx] = out_true
    out[~idx] = x_false

You might also want to add a few more checks to handle edge cases such as empty tensors.
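To make the workflow above concrete, here is a minimal self-contained sketch of how it could look inside a module. The class name `MaskedEnsemble`, the toy `nn.Linear` sub-models, the 0.5 threshold, and the choice to return zeros for unselected rows are all assumptions for illustration and are not taken from the original post. The predictor only sees the rows the classifier selects, and the `mask.any()` check covers the empty-tensor case.

```python
import torch
import torch.nn as nn


class MaskedEnsemble(nn.Module):
    """Hypothetical wrapper: runs the predictor only on rows the classifier flags."""

    def __init__(self, classifier: nn.Module, predictor: nn.Module):
        super().__init__()
        self.classifier = classifier
        self.predictor = predictor

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Assumption: the classifier returns one probability per sample, shape (batch, 1).
        prob = self.classifier(x)
        mask = prob.squeeze(1) > 0.5  # boolean mask, shape (batch,)

        # Rows the classifier rejects keep a prediction of 0.
        out = torch.zeros(x.size(0), 1, dtype=prob.dtype, device=x.device)

        # Skip the predictor entirely when no row is selected.
        if mask.any():
            out[mask] = self.predictor(x[mask]).to(out.dtype)
        return out


torch.manual_seed(0)
# Toy stand-ins for the two trained models (assumed shapes: 4 features in, 1 value out).
classifier = nn.Sequential(nn.Linear(4, 1), nn.Sigmoid())
predictor = nn.Linear(4, 1)
model = MaskedEnsemble(classifier, predictor)

batch = torch.randn(8, 4)
with torch.no_grad():
    result = model(batch)
print(result.shape)
```

The `if mask.any()` check is safe because `any()` reduces the mask to a single boolean value, so it does not trigger the ambiguity error.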

Hi @ptrblck, thank you for taking the time to respond.

Yes, I am aware of the error message, and I decided to take a short break from the project. After a good rest, I realised that the solution I was looking for was relatively simple and I had overlooked it.

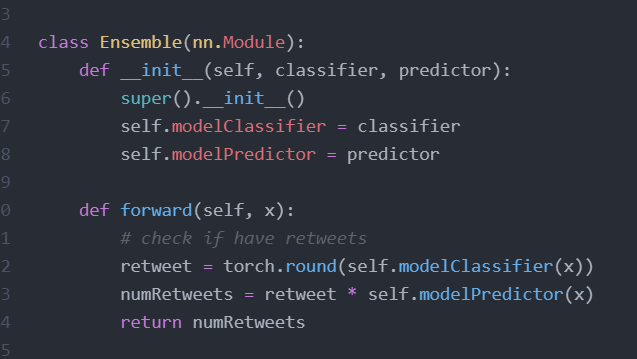

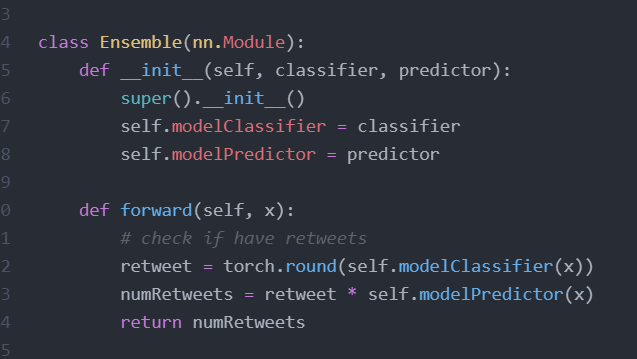

As my main intention was to use the binary classifier to gate my predictor network, simply rounding the output of the binary classifier and multiplying it by the output of my predictor network yields the answer. This also allows evaluation with batch_size > 1, which was good enough in my book!
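For readers who cannot see the screenshots, the idea can be sketched roughly as follows. This is not the exact code from the images: the class name `GatedEnsemble` and the toy sub-models are assumptions, and the classifier is assumed to output a probability in [0, 1] per sample.

```python
import torch
import torch.nn as nn


class GatedEnsemble(nn.Module):
    """Hypothetical wrapper: multiplies the predictor output by the rounded classifier output."""

    def __init__(self, classifier: nn.Module, predictor: nn.Module):
        super().__init__()
        self.classifier = classifier
        self.predictor = predictor

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Rounding turns each probability into a hard 0. or 1. gate.
        gate = torch.round(self.classifier(x))
        # Rows with gate 0 are zeroed out; rows with gate 1 keep the prediction.
        return gate * self.predictor(x)


torch.manual_seed(0)
# Toy stand-ins for the two trained models (assumed shapes: 4 features in, 1 value out).
classifier = nn.Sequential(nn.Linear(4, 1), nn.Sigmoid())
predictor = nn.Linear(4, 1)
model = GatedEnsemble(classifier, predictor)

batch = torch.randn(8, 4)
with torch.no_grad():
    result = model(batch)
print(result.shape)
```

Two caveats apply to this approach. The predictor still runs on every sample, including the ones that end up multiplied by 0, so it trades some wasted compute for simplicity. Also, `torch.round` has zero gradient almost everywhere, so this fits evaluation with already trained models rather than training the classifier end to end through the gate.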