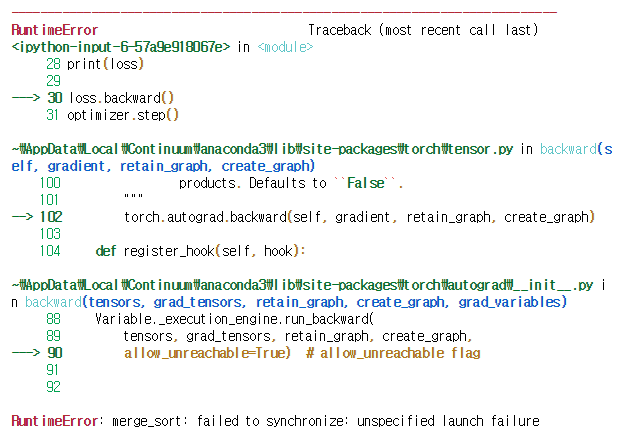

I get this error when I run loss.backward().

Has anybody had this problem before?

My model is:

    import torch
    import torch.nn as nn
    from torch.autograd import Variable

    class modeler(nn.Module):
        def __init__(self, numUsers, numItems, embedding_dim):
            super(modeler, self).__init__()
            self.numu = numUsers
            self.numi = numItems
            self.dim = embedding_dim
            self.userEmbed = nn.EmbeddingBag(self.numu, self.dim, mode='mean')
            self.itemEmbed = nn.EmbeddingBag(self.numi, self.dim, mode='mean')
            #self.init_weights()

        def init_weights(self):
            nn.init.normal_(self.userEmbed.weight.data, mean=0.0, std=0.01)
            nn.init.normal_(self.itemEmbed.weight.data, mean=0.0, std=0.01)

        def forward(self, u_batch, i_batch, u_i_idx, u_i_ofs, i_u_idx, i_u_ofs):
            # Get embedding
            u_idx = Variable(torch.LongTensor(range(0, len(u_batch))).cuda())
            i_idx = Variable(torch.LongTensor(range(0, len(i_batch))).cuda())
            u_emb = self.userEmbed(u_batch, u_idx)
            i_emb = self.itemEmbed(i_batch, i_idx)

            # Get neighborhood embeddings
            u_nei_emb = self.userEmbed(u_i_idx, u_i_ofs)
            i_nei_emb = self.itemEmbed(i_u_idx, i_u_ofs)

            # Get r_{ui}
            trans_vec = u_nei_emb * i_nei_emb

            # Distance Regularizer
            score = (u_emb + trans_vec - i_emb)**2
            reg1 = score.sum()

            # Neighborhood Regularizer
            reg2 = ((u_emb - u_nei_emb)**2).sum() + ((i_emb - i_nei_emb)**2).sum()
            out = torch.sum(score, 1)

            # Gather regularizers
            reg = [reg1, reg2]
            return out, reg
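
To make clear how the (indices, offsets) pairs in forward are meant to be read, here is a rough pure-Python sketch of mean-mode bag pooling as I understand it. It does not use PyTorch at all: `mean_bags` and `table` are hypothetical names made up for illustration, and the zero vector for an empty bag is my reading of the documented `EmbeddingBag` behaviour. With offsets `0, 1, 2, ...` (what `u_idx` and `i_idx` are above) every bag holds exactly one index, so `u_emb` and `i_emb` are plain row lookups, while `u_i_ofs` and `i_u_ofs` need one offset per batch element so the neighborhood embeddings line up with them.

```python
def mean_bags(table, indices, offsets):
    """Illustrative stand-in for mean-mode pooling over flat (indices, offsets).

    Bag b covers indices[offsets[b]:offsets[b + 1]]; the last bag runs to the
    end of `indices`. This is a sketch of the semantics, not PyTorch code.
    """
    dim = len(table[0])
    out = []
    for b, start in enumerate(offsets):
        end = offsets[b + 1] if b + 1 < len(offsets) else len(indices)
        rows = [table[i] for i in indices[start:end]]
        if not rows:
            # Assumption: an empty bag pools to a zero vector.
            out.append([0.0] * dim)
        else:
            # Column-wise mean over the rows in this bag.
            out.append([sum(col) / len(rows) for col in zip(*rows)])
    return out


# Hypothetical 3-row, 2-dimensional embedding table.
table = [[0.0, 0.0], [2.0, 4.0], [4.0, 8.0]]

# One index per bag: equivalent to a plain lookup of rows 2, 0, 1.
single = mean_bags(table, [2, 0, 1], [0, 1, 2])

# Two bags: rows {1, 2} averaged together, then row {0} alone.
pooled = mean_bags(table, [1, 2, 0], [0, 2])
```

Both calls return one vector per offset, which is why the number of offsets has to match the batch size for the element-wise operations in forward to work.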