Hello,

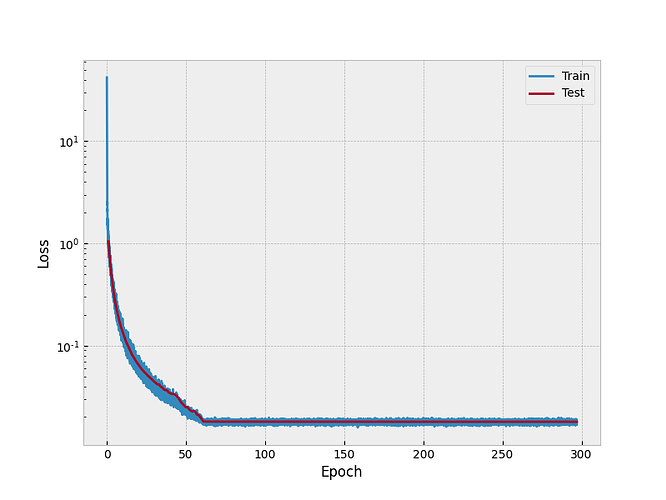

I am using 64x64 images (10,000 of them, just to test my model, split 80%/10%/10%) to predict a vector of 64 elements. I have tried different learning rates, weight decay values, architectures, and input normalizations, and I have even tried normalizing the target vectors. The best MSE I have obtained is shown in the attached plot, which shows the training and validation curves (despite what the legend says). However, when I compare the model's output to some vectors in the test set, the result is bad. In this run the input is zero-centered and the target vectors are scaled to [-0.2, 0.2].
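A minimal sketch of the preprocessing described above, assuming NumPy arrays and random stand-in data. The helper names (`split_indices`, `fit_target_scaler`), the smaller sample count, and the choice to compute statistics from the training split only are illustrative assumptions, not the actual code behind the plot.

```python
import numpy as np

def split_indices(n, train=0.8, val=0.1, seed=0):
    """Shuffle indices 0..n-1 and split them into train/val/test parts."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n)
    n_train = int(round(train * n))
    n_val = int(round(val * n))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

def fit_target_scaler(targets, lo=-0.2, hi=0.2):
    """Return (forward, inverse) maps sending [min, max] of `targets` to [lo, hi]."""
    t_min, t_max = targets.min(), targets.max()
    scale = (hi - lo) / (t_max - t_min)

    def forward(t):
        return (t - t_min) * scale + lo

    def inverse(s):
        return (s - lo) / scale + t_min

    return forward, inverse

# Random stand-in data (assumption): 1,000 samples instead of 10,000 to keep it light.
n = 1000
rng = np.random.default_rng(0)
images = rng.random((n, 64, 64), dtype=np.float32)
targets = rng.standard_normal((n, 64), dtype=np.float32)

train_idx, val_idx, test_idx = split_indices(n)

# Zero-center the inputs using the training-set mean only.
pixel_mean = images[train_idx].mean()
x_train = images[train_idx] - pixel_mean
x_val = images[val_idx] - pixel_mean

# Scale targets to [-0.2, 0.2] using the training-set range only.
to_scaled, from_scaled = fit_target_scaler(targets[train_idx])
y_train = to_scaled(targets[train_idx])
y_val = to_scaled(targets[val_idx])
```

With this setup, `from_scaled` maps model outputs back to the original target units, so test-set comparisons can be made on the unscaled vectors.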

When I train on a single image, the error drops far below what is seen in the plot. What could cause the errors to flatten after a few epochs? Any help is welcome. Many thanks.