Yang_Tong

August 30, 2018, 1:49pm


```python
import torch
import torch.nn as nn
import matplotlib.pyplot as plt
import numpy as np

Lr_G = 0.0001
Lr_D = 0.0001

G = nn.Sequential(
    nn.Linear(100, 128 * 6 * 6),
    nn.ReLU(),
    nn.UpsamplingBilinear2d(),
    nn.Conv2d(in_channels=1, out_channels=128, kernel_size=4),
    nn.ReLU(),
    nn.UpsamplingBilinear2d(),
    nn.Conv2d(in_channels=128, out_channels=64, kernel_size=4),
    nn.ReLU(),
    nn.Conv2d(in_channels=64, out_channels=3, kernel_size=4),
    nn.Tanh()
)

n = np.random.rand(100)
n = torch.tensor(n)
n = n.long()
print(n.type())
n = G(n)
print(n)
```

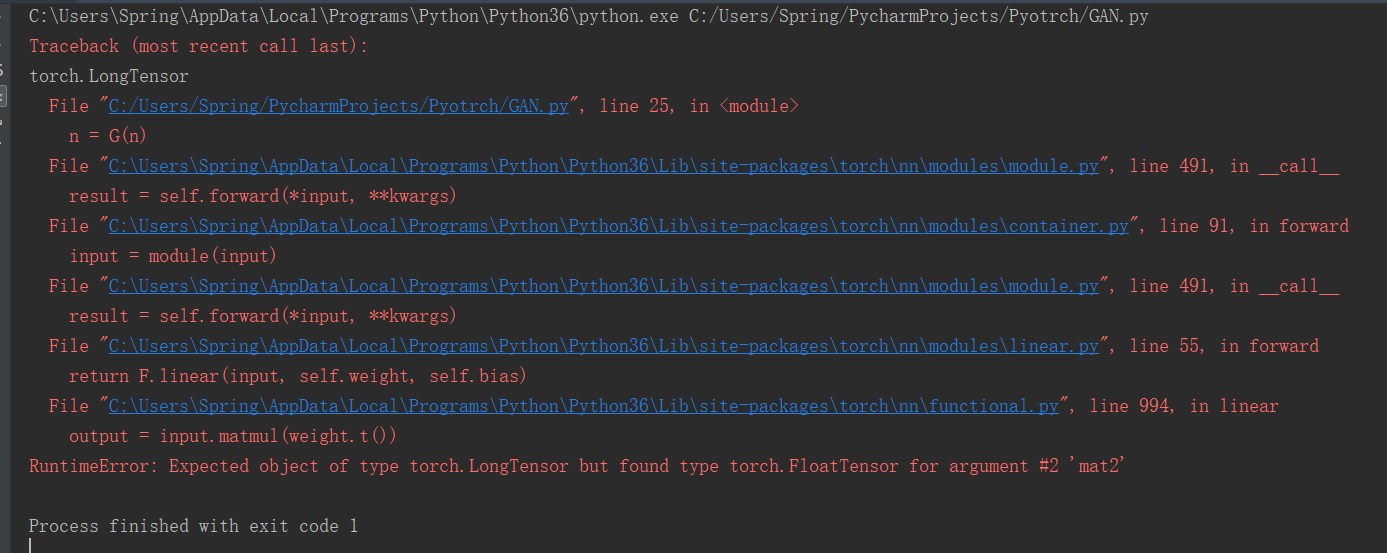

I want to see the output, so I just give it an input, but the code breaks. I'd like to know why, please.

Yang_Tong

August 30, 2018, 2:00pm


In other words, I want to know the input shape and the output shape. If you could give me an example, I would appreciate it.

You should keep the data in float instead of casting it to long. Also, the output of your `nn.Linear` layer has the shape `[batch_size, 128 * 6 * 6]`, and you are trying to use `nn.UpsamplingBilinear2d` on it, which expects the input to have the shape `[batch_size, channels, h, w]`.
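A minimal sketch of the dtype problem, assuming the same NumPy input as in the question: `np.random.rand` returns float64 values in [0, 1), so `.long()` truncates every value to 0, and `nn.Linear` (whose parameters are float32 by default) cannot consume a long tensor. Converting with `.float()` and adding a batch dimension gives the layer what it expects. The variable names `linear`, `long_failed`, and `out` are just for illustration.

```python
import numpy as np
import torch
import torch.nn as nn

linear = nn.Linear(100, 128 * 6 * 6)  # parameters are float32 by default

n = torch.tensor(np.random.rand(100))  # NumPy's default dtype -> torch.float64
print(n.dtype)

# Casting values in [0, 1) to long truncates them all to 0
print(n.long().sum().item())

# A long tensor cannot be multiplied with the float32 weights
try:
    linear(n.long())
    long_failed = False
except RuntimeError as err:
    long_failed = True
    print("long input fails:", err)

# float32 with a batch dimension: [1, 100] -> [1, 4608]
x = n.float().unsqueeze(0)
out = linear(x)
print(out.shape)
```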

Here is a small working example:

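```python
import torch
import torch.nn as nn

batch_size = 1

class View(nn.Module):
    """Reshapes the input to a fixed size so it can be used inside nn.Sequential."""
    def __init__(self, size):
        super(View, self).__init__()
        self.size = size

    def forward(self, x):
        x = x.view(self.size)
        return x

G = nn.Sequential(
    nn.Linear(100, 128 * 6 * 6),                                   # [1, 100] -> [1, 4608]
    nn.ReLU(),
    View((batch_size, 128, 6, 6)),                                 # -> [1, 128, 6, 6]
    nn.Upsample(size=12),                                          # -> [1, 128, 12, 12]
    nn.Conv2d(in_channels=128, out_channels=128, kernel_size=4),   # -> [1, 128, 9, 9]
    nn.ReLU(),
    nn.Upsample(24),                                               # -> [1, 128, 24, 24]
    nn.Conv2d(in_channels=128, out_channels=64, kernel_size=4),    # -> [1, 64, 21, 21]
    nn.ReLU(),
    nn.Conv2d(in_channels=64, out_channels=3, kernel_size=4),      # -> [1, 3, 18, 18]
    nn.Tanh()
)

x = torch.randn(batch_size, 100)  # float32 input with a batch dimension
output = G(x)
print(output.shape)  # torch.Size([1, 3, 18, 18])
```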
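To see the input and output shape of every layer (what the second post asks for), one option is to iterate over the `nn.Sequential` container and print each intermediate shape. This sketch swaps the custom `View` module for `nn.Unflatten` (available in PyTorch 1.7 and later), which reshapes only the feature dimension, so the batch size no longer has to be fixed in advance. It also keeps the two other fixes relative to the code in the question: the first `Conv2d` takes `in_channels=128` to match the reshaped tensor, and each upsampling layer gets an explicit `size`.

```python
import torch
import torch.nn as nn

G = nn.Sequential(
    nn.Linear(100, 128 * 6 * 6),
    nn.ReLU(),
    nn.Unflatten(1, (128, 6, 6)),  # [N, 4608] -> [N, 128, 6, 6] for any batch size N
    nn.Upsample(size=12),
    nn.Conv2d(128, 128, kernel_size=4),
    nn.ReLU(),
    nn.Upsample(size=24),
    nn.Conv2d(128, 64, kernel_size=4),
    nn.ReLU(),
    nn.Conv2d(64, 3, kernel_size=4),
    nn.Tanh(),
)

x = torch.randn(4, 100)  # a batch of 4 noise vectors
print(f"{'input':>12}: {tuple(x.shape)}")

shapes = []
with torch.no_grad():
    for layer in G:
        x = layer(x)  # apply each layer in order, exactly as G(x) would
        shapes.append(tuple(x.shape))
        print(f"{type(layer).__name__:>12}: {tuple(x.shape)}")
```

Running the same loop with a different batch size only changes the first dimension of every printed shape.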

Yang_Tong

August 31, 2018, 11:11am


Thank you very much! I set the size of the second Upsample layer to 18 and the code ran. I also learned how to use many kinds of layers in PyTorch. Thanks~
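Both 24 and 18 work as the second upsampling size because, for `nn.Conv2d` with the default `stride=1`, `padding=0`, and `dilation=1`, each spatial dimension simply shrinks by `kernel_size - 1`. The general output-size formula (as given in the PyTorch `Conv2d` docs) and the two resulting chains for `kernel_size=4` are:

$$
H_{out} = \left\lfloor \frac{H_{in} + 2p - d(k - 1) - 1}{s} + 1 \right\rfloor
\;\overset{p=0,\ d=1,\ s=1}{=}\; H_{in} - k + 1
$$

$$
\text{Upsample}(24):\quad 24 \xrightarrow{\text{conv}} 21 \xrightarrow{\text{conv}} 18,
\qquad
\text{Upsample}(18):\quad 18 \xrightarrow{\text{conv}} 15 \xrightarrow{\text{conv}} 12
$$

So with size 18 the generator outputs `[batch_size, 3, 12, 12]` images instead of `[batch_size, 3, 18, 18]`.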