Dear all,

As part of my small project (https://github.com/QuantScientist/PngTorch), I am using the trained models from gnsmrky/pytorch-fast-neural-style-for-web ("Make PyTorch fast-neural-style to run inference with ONNX.js in web browsers"). I previously used these models with OpenCV in C++ without any issues.

The full example to reproduce the issue is here:

https://github.com/QuantScientist/PngTorch/blob/master/src/example002.cpp

Since my project does not use OpenCV, I am using libpng instead, and the image produced by the traced model in C++ differs from the one produced in PyTorch. The project is based on CMake, and if you clone it, all dependencies are downloaded automatically.
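The same layout question applies in the opposite direction (PNG to tensor). Below is a minimal, stdlib-only sketch. It assumes two things: that the pixels were copied out of png++ row by row into an interleaved RGB byte buffer, and that the models expect input in the 0 to 255 range, as in the upstream pytorch/examples fast_neural_style (`ToTensor()` followed by `mul(255)`). The helper name `hwcU8ToChwFloat` is hypothetical.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: converts an interleaved RGB buffer (H x W x 3, uint8)
// into a planar CHW float buffer, i.e. the layout a {1, 3, H, W} tensor expects.
// Values stay in 0..255 (assumption: the model was trained on ToTensor().mul(255)).
std::vector<float> hwcU8ToChwFloat(const std::vector<std::uint8_t>& hwc,
                                   std::size_t height, std::size_t width) {
    const std::size_t channels = 3;
    std::vector<float> chw(channels * height * width);
    for (std::size_t y = 0; y < height; ++y) {
        for (std::size_t x = 0; x < width; ++x) {
            for (std::size_t c = 0; c < channels; ++c) {
                // Source is pixel-interleaved (RGBRGB...), destination has one plane per channel.
                chw[c * height * width + y * width + x] =
                    static_cast<float>(hwc[(y * width + x) * channels + c]);
            }
        }
    }
    return chw;
}
```

The resulting buffer could then be wrapped with something like `torch::from_blob(chw.data(), {1, 3, H, W}, torch::kFloat).clone()`. The `clone()` matters because `from_blob` does not take ownership of the vector's memory.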

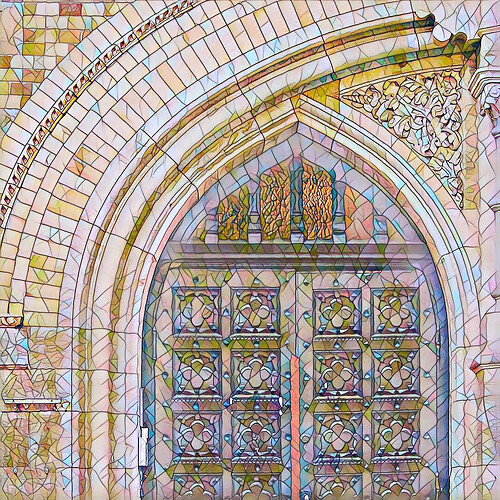

For instance original image:

Original style transfer with the candy.pth:

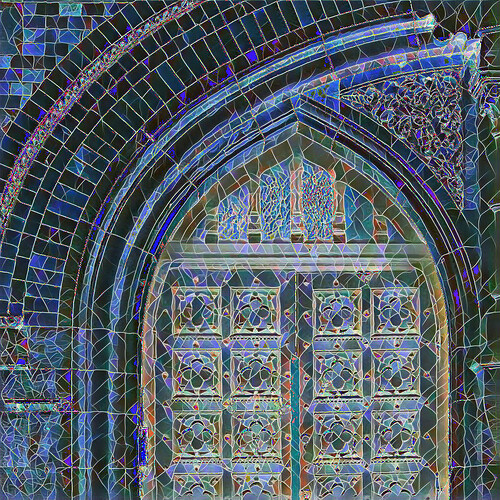

My style transfer with the traced candy.pt (https://github.com/QuantScientist/PngTorch/blob/master/resources/mosaic_cpp.pt):

I am sure the issue is in https://github.com/QuantScientist/PngTorch/blob/master/include/utils/vision_utils.hpp, specifically in these lines:

```cpp
png::image<png::rgb_pixel> VisionUtils::torchToPng(torch::Tensor &tensor_){
    // out_tensor = out_tensor.mul(255).clamp(0, 255).to(torch::kU8);
    torch::Tensor tensor = tensor_.squeeze().detach().cpu().permute({1, 2, 0}); // {C,H,W} ===> {H,W,C}
    tensor = tensor.mul(255).clamp(0, 255).to(torch::kU8); // <-- HERE
    // tensor = tensor.mul(0.5).add(0.5).mul(255).clamp(0, 255).to(torch::kU8);
    size_t width = tensor.size(1);
    size_t height = tensor.size(0);
    auto pointer = tensor.data_ptr<unsigned char>();
    png::image<png::rgb_pixel> image(width, height);
    for (size_t j = 0; j < height; j++){
        for (size_t i = 0; i < width; i++){
            image[j][i].red = pointer[j * width * 3 + i * 3 + 0];
            image[j][i].green = pointer[j * width * 3 + i * 3 + 1];
            image[j][i].blue = pointer[j * width * 3 + i * 3 + 2];
        }
    }
    return image;
}
```
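Two things may be worth checking here. Both are assumptions on my part and have not been verified against this repository.

The first is memory layout. `permute({1, 2, 0})` only returns a view with swapped strides. As far as I know, `mul`, `clamp` and `.to(torch::kU8)` preserve that stride layout, so the buffer behind `data_ptr<unsigned char>()` is still ordered C, H, W. Indexing it as `j * width * 3 + i * 3 + c` would then read values from the wrong planes. In LibTorch the usual remedy is to call `.contiguous()` after `permute` and before taking the pointer.

The second is value range. The upstream pytorch/examples fast_neural_style `save_image` only clamps the output to 0 to 255 without multiplying by 255. If these models follow that convention, `mul(255)` would saturate almost every pixel.

The stdlib-only sketch below shows the planar-to-interleaved index mapping with an explicit scale factor. The helper name `chwFloatToHwcU8` and the `scale` parameter are hypothetical.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: input is a planar CHW float buffer (the memory order of a
// {1, 3, H, W} model output, and what data_ptr() still sees after permute()
// unless .contiguous() is called). Output is interleaved HWC uint8, the order
// png::rgb_pixel rows are filled in.
// `scale` is an assumption: 1.0f if the model already outputs 0..255,
// 255.0f if it outputs 0..1.
std::vector<std::uint8_t> chwFloatToHwcU8(const std::vector<float>& chw,
                                          std::size_t height, std::size_t width,
                                          float scale) {
    const std::size_t channels = 3;
    std::vector<std::uint8_t> hwc(channels * height * width);
    for (std::size_t y = 0; y < height; ++y) {
        for (std::size_t x = 0; x < width; ++x) {
            for (std::size_t c = 0; c < channels; ++c) {
                // Read from the channel plane, write into the interleaved pixel.
                float v = chw[c * height * width + y * width + x] * scale;
                v = std::min(std::max(v, 0.0f), 255.0f);  // same role as clamp(0, 255)
                // The cast truncates toward zero, like a float-to-uint8 conversion.
                hwc[(y * width + x) * channels + c] = static_cast<std::uint8_t>(v);
            }
        }
    }
    return hwc;
}
```

In `torchToPng`, the corresponding change would be roughly `tensor_.squeeze().detach().cpu().permute({1, 2, 0}).contiguous()`, followed by `.clamp(0, 255).to(torch::kU8)`. Keep `mul(255)` only if the model really outputs values in 0 to 1.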

I have run dozens of experiments, but I seem to be overlooking something in the conversion process.

Any help would be appreciated.

Thanks