I know we can call loss.backward() to compute the gradients. But when I read the source code for backward, I got confused.

I added some print statements to the PyTorch Python source code to trace the backward process.

Basically, loss.backward() calls the backward function in torch/autograd/__init__.py, which then enters Variable._execution_engine.run_backward. This is a C++ function. When we use DataParallel, the code eventually calls torch.nn.parallel.Gather.backward and torch.nn.parallel.Broadcast.backward. How do these two classes get called?

In addition, Gather.backward calls Scatter.apply, and Scatter.apply calls Scatter.forward. How does that work?
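
To make the call chain concrete, here is a minimal sketch with two toy Functions. `ToyGather` and `ToyScatter` are hypothetical stand-ins, not the real classes from torch.nn.parallel, and they work on a single device. The point is only that calling another Function's apply inside backward runs that Function's forward, not its backward.

```python
import torch
from torch.autograd import Function

calls = []  # records the order in which the static methods run


class ToyScatter(Function):
    """Hypothetical stand-in for Scatter: splits a tensor into two halves."""

    @staticmethod
    def forward(ctx, x):
        calls.append("ToyScatter.forward")
        # clone() so the outputs are fresh tensors rather than views of the input
        return tuple(part.clone() for part in x.chunk(2, dim=0))

    @staticmethod
    def backward(ctx, *grads):
        calls.append("ToyScatter.backward")
        return torch.cat(grads, dim=0)


class ToyGather(Function):
    """Hypothetical stand-in for Gather: concatenates two tensors."""

    @staticmethod
    def forward(ctx, a, b):
        calls.append("ToyGather.forward")
        return torch.cat([a, b], dim=0)

    @staticmethod
    def backward(ctx, grad):
        calls.append("ToyGather.backward")
        # The gradient of a concatenation is a split, so reuse ToyScatter's forward.
        return ToyScatter.apply(grad)


a = torch.ones(2, requires_grad=True)
b = torch.ones(2, requires_grad=True)
out = ToyGather.apply(a, b)
out.sum().backward()
print(calls)
```

In this sketch `ToyScatter.backward` never runs, because nothing differentiates through the call made inside `ToyGather.backward`.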

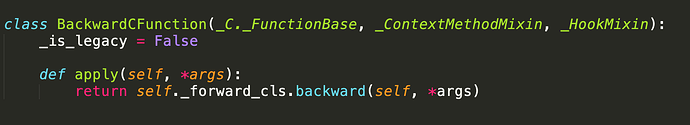

The Scatter class inherits from torch.autograd.Function. Function is created through a Python metaclass, and that metaclass builds an additional class that inherits from BackwardCFunction.

So I would expect that calling Scatter.apply ends up calling Scatter.backward. Why doesn't it?
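
The class built by the metaclass can be inspected directly. The sketch below uses a hypothetical `Double` Function and reads the internal attributes `_backward_cls` and `_forward_cls`; these are implementation details that I am assuming are present in your PyTorch version, not public API.

```python
import torch
from torch.autograd import Function
from torch.autograd.function import BackwardCFunction


class Double(Function):
    """Hypothetical example Function: y = 2 * x."""

    @staticmethod
    def forward(ctx, x):
        return x * 2

    @staticmethod
    def backward(ctx, grad):
        return grad * 2


# Internal attribute (assumption: set by the metaclass when Double is defined).
backward_cls = Double._backward_cls

print(backward_cls.__name__)
print(issubclass(backward_cls, BackwardCFunction))
print(backward_cls._forward_cls is Double)
```

So `Double` itself is not a subclass of BackwardCFunction; the metaclass creates a separate companion class that points back to `Double`.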

Because there are two apply functions: a static one that is called when you do Scatter.apply(), and a non-static one that is called on an instance of Scatter: Scatter().apply().
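
A small sketch of the difference as seen from user code, using a hypothetical `Square` Function: the static apply only runs forward, and backward runs later, when the engine processes the graph.

```python
import torch
from torch.autograd import Function

log = []  # records which static method ran, in order


class Square(Function):
    """Hypothetical example Function: y = x * x."""

    @staticmethod
    def forward(ctx, x):
        log.append("forward")
        ctx.save_for_backward(x)
        return x * x

    @staticmethod
    def backward(ctx, grad):
        log.append("backward")
        (x,) = ctx.saved_tensors
        return 2 * x * grad


x = torch.tensor(3.0, requires_grad=True)
y = Square.apply(x)          # static apply: runs forward only
log_after_apply = list(log)  # snapshot taken before any backward pass
y.backward()                 # the engine reaches the non-static apply here
print(log_after_apply, log)
```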

Is the static apply function from the _C._FunctionBase class?

I mean, does it come from THPFunction_apply? Thanks for your reply!

Interesting! I have one final question. Could you tell me where the non-static apply function is called?

Sure.

In the engine, when a new task can be executed here.

It first does some checking and input/output preparation here.

It then runs all the required hooks, then uses the operator() function on the actual Node (previously called Function) here.

This is routed to the main Node class here.

That calls the apply function of the Node, which is a PyNode in your case because it is implemented in Python.

And the PyNode gets and calls the .apply attribute from the class instance here.
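
The last step can be observed from Python. This sketch uses a hypothetical `Negate` Function and relies on the internal `_backward_cls` attribute, so treat it as an illustration under that assumption: the grad_fn attached to the output is an instance of the generated backward class, and that instance is what arrives as ctx when its apply dispatches to the static backward.

```python
import torch
from torch.autograd import Function

seen = {}  # filled in while backward runs


class Negate(Function):
    """Hypothetical example Function: y = -x."""

    @staticmethod
    def forward(ctx, x):
        return -x

    @staticmethod
    def backward(ctx, grad):
        # ctx is the object whose non-static apply the engine called.
        seen["ctx_type"] = type(ctx)
        return -grad


x = torch.tensor(1.0, requires_grad=True)
y = Negate.apply(x)

# The graph node for y is an instance of the generated backward class.
print(type(y.grad_fn).__name__)

y.backward()
print(seen["ctx_type"] is Negate._backward_cls)
```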

Looking at it this way, there are indeed a few levels of indirection.

I understand now! Thanks a lot!