Can someone give me some advice on implementing a deeply supervised network, which has multiple losses? Thank you.

I don’t understand what you are confused about. Don’t you just take a (weighted) sum of the different losses?

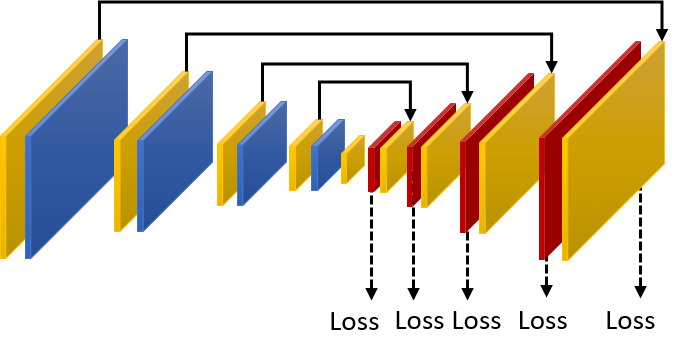

I want to implement a network like this, but I don’t know how to implement multiple outputs and losses. I am just a newbie in PyTorch.

If you look at a basic PyTorch tutorial like http://pytorch.org/tutorials/beginner/blitz/cifar10_tutorial.html, you will see code like this:

    import torch.nn as nn
    import torch.nn.functional as F

    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.conv1 = nn.Conv2d(3, 6, 5)
            self.pool = nn.MaxPool2d(2, 2)
            self.conv2 = nn.Conv2d(6, 16, 5)
            self.fc1 = nn.Linear(16 * 5 * 5, 120)
            self.fc2 = nn.Linear(120, 84)
            self.fc3 = nn.Linear(84, 10)

        def forward(self, x):
            # Two conv + pool stages: 3x32x32 input -> 16x5x5 feature map
            x = self.pool(F.relu(self.conv1(x)))
            x = self.pool(F.relu(self.conv2(x)))
            # Flatten for the fully connected layers
            x = x.view(-1, 16 * 5 * 5)
            x = F.relu(self.fc1(x))
            x = F.relu(self.fc2(x))
            x = self.fc3(x)
            # Only the last layer's output is returned here
            return x

But there is nothing stopping you from having forward return the outputs of several layers instead of just the last one. You can then compute a loss on each of those outputs, just as the tutorial computes a loss on the last output, and add the (optionally weighted) losses together to get your overall loss. Calling backward on that sum sends gradients through every branch.
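To make that concrete, here is a minimal sketch built on the tutorial network. It is only an illustration, not the architecture from the image: the class name `DeepSupNet`, the extra `aux_fc` head attached after the first conv block, and the `aux_weight` of 0.3 are all assumptions I made up for the example, and the inputs are random tensors standing in for a CIFAR-10 batch.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class DeepSupNet(nn.Module):
    """Tutorial CNN plus one hypothetical auxiliary classifier head."""

    def __init__(self, num_classes=10):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.fc1 = nn.Linear(16 * 5 * 5, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, num_classes)
        # Assumed auxiliary head: classifies directly from the first
        # conv block's features (6 x 14 x 14 for a 32 x 32 input).
        self.aux_fc = nn.Linear(6 * 14 * 14, num_classes)

    def forward(self, x):
        h1 = self.pool(F.relu(self.conv1(x)))    # (N, 6, 14, 14)
        aux_out = self.aux_fc(h1.flatten(1))     # intermediate prediction
        h2 = self.pool(F.relu(self.conv2(h1)))   # (N, 16, 5, 5)
        h = F.relu(self.fc1(h2.flatten(1)))
        h = F.relu(self.fc2(h))
        main_out = self.fc3(h)                   # final prediction
        # Return both outputs so a loss can be computed on each.
        return main_out, aux_out


torch.manual_seed(0)
net = DeepSupNet()
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(net.parameters(), lr=0.01)

# Random stand-in batch: 4 images of size 3 x 32 x 32 with labels in [0, 10).
inputs = torch.randn(4, 3, 32, 32)
labels = torch.randint(0, 10, (4,))

aux_weight = 0.3  # assumed weight for the auxiliary loss

optimizer.zero_grad()
main_out, aux_out = net(inputs)
loss_main = criterion(main_out, labels)
loss_aux = criterion(aux_out, labels)
loss = loss_main + aux_weight * loss_aux  # weighted sum of the losses
loss.backward()                           # gradients flow through both heads
optimizer.step()
```

With more supervised layers you would return more outputs (for example as a list) and sum over them in the same way. At test time you would normally just use `main_out` and ignore the auxiliary outputs.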
