That should work. Can you post the entire code so I can check it for errors and maybe try running it here?

Here is the full code:

http://pastebin.com/g6xxBDmr

It is the original ImageNet example with some Visdom elements that I tried to use. To run it on your own, either start a Visdom server or delete those lines.

Hello,

Do you know how I can change the cost function while finetuning a pre-trained model (like ResNet-18 or VGG-16), or create a customized cost function and use it?

Thanks.

The cost function lies outside the model definition, so you can change it the same way you would in any other training script.
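To make that concrete, here is a minimal sketch of swapping in a custom loss. The `SmoothedCrossEntropy` class is a hypothetical example loss (not something from this thread), and the small `nn.Linear` model is only a stand-in for a pretrained network such as ResNet-18; the point is just that the criterion is built and called separately from the model.

```python
import torch
import torch.nn as nn


class SmoothedCrossEntropy(nn.Module):
    """Hypothetical custom loss: cross-entropy mixed with a uniform-target term."""

    def __init__(self, smoothing=0.1):
        super().__init__()
        self.smoothing = smoothing

    def forward(self, logits, target):
        log_probs = torch.log_softmax(logits, dim=1)
        # Negative log-likelihood of the true class for each sample.
        nll = -log_probs.gather(1, target.unsqueeze(1)).squeeze(1)
        # Cross-entropy against a uniform distribution over classes.
        uniform = -log_probs.mean(dim=1)
        return ((1 - self.smoothing) * nll + self.smoothing * uniform).mean()


# Stand-in for a pretrained network (assumption: any nn.Module works the same way).
model = nn.Linear(8, 3)
criterion = SmoothedCrossEntropy(smoothing=0.1)  # swap this line to change the loss
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

inputs = torch.randn(4, 8)
targets = torch.tensor([0, 2, 1, 0])

loss = criterion(model(inputs), targets)
optimizer.zero_grad()
loss.backward()
optimizer.step()
```

Anything that returns a scalar tensor built from differentiable operations should work in place of `criterion`, including a plain Python function.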

What is the learning rate for base_params and fc.parameters() in the example from "How to perform finetuning in Pytorch?"? @apaszke
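For reference, this is roughly how per-parameter-group learning rates behave in `torch.optim`. The sketch below does not reproduce the linked example: `TinyNet` is a hypothetical stand-in model and the learning rates are illustrative values. A group without its own `lr` falls back to the default passed to the optimizer, while a group that sets `lr` overrides it.

```python
import torch
import torch.nn as nn


class TinyNet(nn.Module):
    """Hypothetical stand-in for a pretrained model with a final `fc` layer."""

    def __init__(self):
        super().__init__()
        self.features = nn.Linear(8, 16)
        self.fc = nn.Linear(16, 3)

    def forward(self, x):
        return self.fc(torch.relu(self.features(x)))


model = TinyNet()

# Everything except the final layer counts as "base" parameters.
fc_ids = {id(p) for p in model.fc.parameters()}
base_params = [p for p in model.parameters() if id(p) not in fc_ids]

optimizer = torch.optim.SGD(
    [
        {"params": base_params},                        # no lr here: uses the default below
        {"params": model.fc.parameters(), "lr": 1e-2},  # overrides the default
    ],
    lr=1e-3,  # default learning rate (illustrative value)
    momentum=0.9,
)

# Inspect the learning rate each group actually uses.
for group in optimizer.param_groups:
    print(len(group["params"]), group["lr"])
```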

@apaszke Do you know if the underlying code has changed at all since you posted this? I get

AttributeError: type object 'object' has no attribute '__getattr__'

when trying

optimizer = torch.optim.SGD(model.fc.parameters(), opt.lr, momentum=opt.momentum, weight_decay=opt.weight_decay)

@achaiah Are you using master ?

I think there was a bad commit last week: I got an AttributeError for previously working examples and had to roll back. It seems to have been fixed in the past couple of days. Try updating.

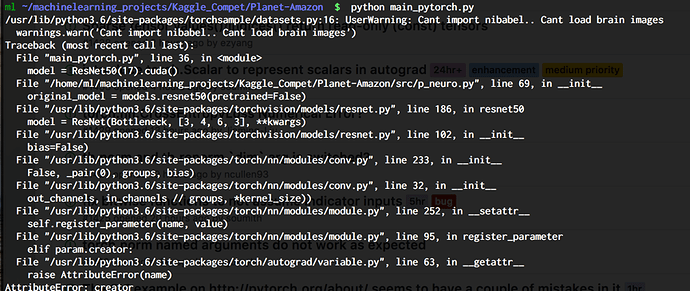

My error for reference (ignore the torchsample warning; it’s always there):

Aha, you’re correct. I updated to the latest version from pytorch.org and the error has gone away.

Can you post the whole finetuning code here so we can all benefit from it, please?

For future readers of this thread: there’s a tutorial on transfer learning in official pytorch tutorials.

For a newbie like me, that tutorial is confusing and not helpful…

Let me know if you face any problem.

How do I pause and resume training in PyTorch? Suppose I have trained until epoch 2000 and want to continue until epoch 4000. I thought I could just load the weights and finetune, but my loss always stays the same. Do I need to set requires_grad=True on all parameters to resume training? What is the right way to resume training in PyTorch?

The imagenet example in pytorch/examples resumes by checkpointing the optimiser etc. along with the model. Check it out.
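A minimal sketch of that checkpoint-and-resume pattern, assuming a toy `nn.Linear` model and random data in place of a real network and dataset. The dictionary keys and the `train_one_epoch` helper are illustrative choices for this sketch, not necessarily what the imagenet example uses.

```python
import os
import tempfile

import torch
import torch.nn as nn


def train_one_epoch(model, optimizer):
    """Hypothetical helper: one optimisation step on random data."""
    inputs = torch.randn(4, 8)
    targets = torch.randint(0, 3, (4,))
    loss = nn.functional.cross_entropy(model(inputs), targets)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()


model = nn.Linear(8, 3)
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.9)
path = os.path.join(tempfile.mkdtemp(), "checkpoint.pth")

# First run: train for 2 epochs, then save model AND optimizer state.
for epoch in range(2):
    train_one_epoch(model, optimizer)
torch.save(
    {
        "epoch": epoch + 1,  # the epoch to start from when resuming
        "state_dict": model.state_dict(),
        "optimizer": optimizer.state_dict(),
    },
    path,
)

# Later run: rebuild the same objects, then restore their state.
resumed_model = nn.Linear(8, 3)
resumed_optimizer = torch.optim.SGD(resumed_model.parameters(), lr=0.01, momentum=0.9)
checkpoint = torch.load(path)
resumed_model.load_state_dict(checkpoint["state_dict"])
resumed_optimizer.load_state_dict(checkpoint["optimizer"])
start_epoch = checkpoint["epoch"]

# Continue from where the first run stopped (here: up to epoch 4).
for epoch in range(start_epoch, 4):
    train_one_epoch(resumed_model, resumed_optimizer)
```

Restoring the optimizer state brings back things like momentum buffers, so the resumed run continues from the same optimisation state instead of restarting with a fresh optimizer.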

Can I perform finetuning of vgg face architecture using pytorch on my data?

Here’s a tutorial based on Resnet 18!

I have trained a model from scratch that is not in the model zoo. Now I want to finetune it on new datasets. Does anyone know how to do it?

Answered your question on the github issue you created on my tutorial! Hope it helps!

@apaszke I have some questions that I hope you can give some advice on:

- How can I add new layers after the pretrained model, freeze the pretrained model, and train only the newly added layers?
- Or how can I finetune the newly built network as a whole?
- Can I replace the pretrained model’s last several layers with newly built layers and still use the pretrained model that way?

Thanks~
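One common way to approach the first question, sketched under assumptions: `backbone` below is a small stand-in for a pretrained network and `head` is a hypothetical new block of layers. The backbone is frozen by turning off `requires_grad`, and only the head's parameters are handed to the optimizer.

```python
import torch
import torch.nn as nn

# Stand-in for a pretrained feature extractor (assumption: a real one works the same).
backbone = nn.Sequential(nn.Linear(8, 16), nn.ReLU())
for param in backbone.parameters():
    param.requires_grad = False  # freeze: no gradients are computed for these

# Newly added layers; their parameters require gradients by default.
head = nn.Sequential(nn.Linear(16, 16), nn.ReLU(), nn.Linear(16, 3))
model = nn.Sequential(backbone, head)

# Only the new layers are given to the optimizer.
optimizer = torch.optim.SGD(head.parameters(), lr=0.01, momentum=0.9)

frozen_before = backbone[0].weight.clone()
head_before = head[2].weight.clone()

inputs = torch.randn(4, 8)
targets = torch.randint(0, 3, (4,))
loss = nn.functional.cross_entropy(model(inputs), targets)
optimizer.zero_grad()
loss.backward()
optimizer.step()

# The frozen weights are unchanged after the step; the head has been updated.
print(torch.equal(frozen_before, backbone[0].weight))
```

To finetune the whole network instead, leave `requires_grad` as it is and pass `model.parameters()` to the optimizer. Replacing the last layers follows the same idea: assign new modules in place of the old ones (for a model with an `fc` attribute, as in the optimizer line earlier in this thread, that means assigning a new layer to `model.fc`), and the remaining pretrained layers keep their weights.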

Isn’t ‘pretrained=true’ for finetuning in imagenet/main.py?