https://pytorch.org/tutorials/intermediate/char_rnn_classification_tutorial

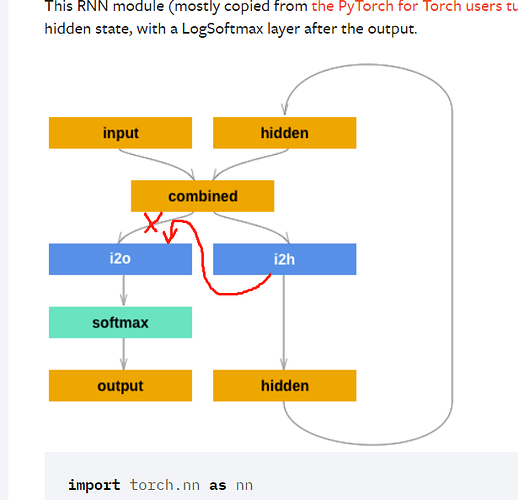

The tutorial linked above defines an RNN, and I think there is a problem with its structure. As far as I can tell from the diagram, the output at each step is computed from the current input and the previous hidden state, so the final output never uses the hidden state that has been updated with the last character. I think the network should instead compute the output from the new hidden state.

The modified network is shown below:

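    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class RNN(nn.Module):
        def __init__(self, input_size, hidden_size, output_size):
            super(RNN, self).__init__()
            self.hidden_size = hidden_size
            # The new hidden state is computed from the input and the previous hidden state.
            self.i2h = nn.Linear(input_size + hidden_size, hidden_size)
            # The output is computed from the new hidden state only.
            self.i2o = nn.Linear(hidden_size, output_size)
            self.softmax = nn.LogSoftmax(dim=1)

        def forward(self, input, hidden):
            combined = torch.cat((input, hidden), 1)
            # Update the hidden state first, so it includes the current character.
            hidden = F.relu(self.i2h(combined))
            # Read the output from the updated hidden state.
            output = self.i2o(hidden)
            output = self.softmax(output)
            return output, hidden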
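
To show how the modified network would be used, here is a minimal sketch that feeds a name through it one character at a time and reads the final output. The alphabet, the sizes (`N_HIDDEN`, `N_CATEGORIES`), and the helpers `line_to_tensor` and `classify` are my own assumptions for illustration, not taken from the tutorial.

```python
import string

import torch
import torch.nn as nn
import torch.nn.functional as F

# Assumed alphabet and sizes, chosen only for illustration.
ALL_LETTERS = string.ascii_letters
N_LETTERS = len(ALL_LETTERS)
N_HIDDEN = 128
N_CATEGORIES = 18


class RNN(nn.Module):
    """The modified network from this post: the output is read from the new hidden state."""

    def __init__(self, input_size, hidden_size, output_size):
        super().__init__()
        self.hidden_size = hidden_size
        self.i2h = nn.Linear(input_size + hidden_size, hidden_size)
        self.i2o = nn.Linear(hidden_size, output_size)
        self.softmax = nn.LogSoftmax(dim=1)

    def forward(self, input, hidden):
        combined = torch.cat((input, hidden), 1)
        hidden = F.relu(self.i2h(combined))
        output = self.softmax(self.i2o(hidden))
        return output, hidden


def line_to_tensor(line):
    """Hypothetical helper: one-hot encode a string as a (len(line), 1, N_LETTERS) tensor."""
    tensor = torch.zeros(len(line), 1, N_LETTERS)
    for i, letter in enumerate(line):
        tensor[i, 0, ALL_LETTERS.index(letter)] = 1.0
    return tensor


def classify(rnn, line):
    """Hypothetical helper: feed characters one at a time, return the last output and hidden state."""
    hidden = torch.zeros(1, rnn.hidden_size)
    with torch.no_grad():
        for char_tensor in line_to_tensor(line):
            output, hidden = rnn(char_tensor, hidden)
    return output, hidden


torch.manual_seed(0)
rnn = RNN(N_LETTERS, N_HIDDEN, N_CATEGORIES)
output, hidden = classify(rnn, "Albert")
print(output.shape)  # torch.Size([1, 18])
```

Because `output` is computed from the hidden state returned in the same step, the final prediction depends on a hidden state that already includes the last character of the name.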