Hello,

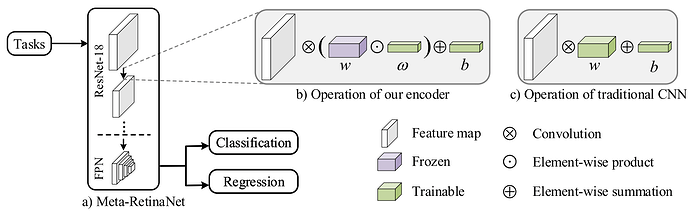

I am attempting to implement the “Meta Coefficient Learner” from the Meta-RetinaNet paper, but I am stuck on adding the trainable coefficient vector (see the figure above) to this example. Essentially, I want to freeze the pretrained ResNet and apply the trainable coefficient layer so that I can then “meta”-train the network on a new dataset.

So my question would be: How can I add the new coefficient vector to all convolutional weights?

My guess would be to add the [1x1xFilters] vector as a convolution before each of the original convolutions, so that I can freeze the original layers while keeping the new layers trainable.
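An alternative to inserting extra convolutions would be to wrap each existing convolution and rescale its filters directly. The following is only a minimal PyTorch sketch under my own assumptions, not the paper's reference implementation: I assume the coefficient is one scalar per output filter, initialized to 1 and multiplied element-wise into the frozen weights. The names `CoefficientConv2d` and `add_coefficients` are made up for illustration, and a small stand-in network is used in place of the pretrained ResNet.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class CoefficientConv2d(nn.Module):
    """Hypothetical wrapper: frozen nn.Conv2d whose filters are scaled by a trainable vector."""

    def __init__(self, conv: nn.Conv2d):
        super().__init__()
        self.conv = conv
        # Freeze the pretrained weight and bias.
        for p in self.conv.parameters():
            p.requires_grad = False
        # Assumption: one coefficient per output filter, initialized to 1
        # so the wrapped layer initially behaves like the original one.
        self.coeff = nn.Parameter(torch.ones(conv.out_channels, 1, 1, 1))

    def forward(self, x):
        # Broadcast the coefficient over (in_channels, kH, kW).
        weight = self.conv.weight * self.coeff
        # Note: this ignores a non-default padding_mode on the wrapped conv.
        return F.conv2d(x, weight, self.conv.bias, self.conv.stride,
                        self.conv.padding, self.conv.dilation, self.conv.groups)


def add_coefficients(module: nn.Module) -> nn.Module:
    """Recursively replace every nn.Conv2d child with a CoefficientConv2d."""
    for name, child in list(module.named_children()):
        if isinstance(child, nn.Conv2d):
            setattr(module, name, CoefficientConv2d(child))
        else:
            add_coefficients(child)
    return module


# Small stand-in for the pretrained backbone (not a real ResNet).
backbone = nn.Sequential(
    nn.Conv2d(3, 8, 3, padding=1), nn.BatchNorm2d(8), nn.ReLU(),
    nn.Conv2d(8, 16, 3, padding=1),
)
backbone.eval()  # keep BatchNorm statistics fixed for the comparison

x = torch.randn(2, 3, 32, 32)
with torch.no_grad():
    before = backbone(x)

# Freeze everything, then add the trainable coefficients.
for p in backbone.parameters():
    p.requires_grad = False
add_coefficients(backbone)

trainable = [n for n, p in backbone.named_parameters() if p.requires_grad]
with torch.no_grad():
    after = backbone(x)
```

After this, only the `coeff` parameters require gradients, so an optimizer could be built from `[p for p in backbone.parameters() if p.requires_grad]`. Because the coefficients start at 1, the outputs before and after wrapping should match. Note that a separate convolution placed before each original one would act on the input channels rather than on the filters themselves, which may not be equivalent to what the figure describes.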

Thanks!