Hi Guys,

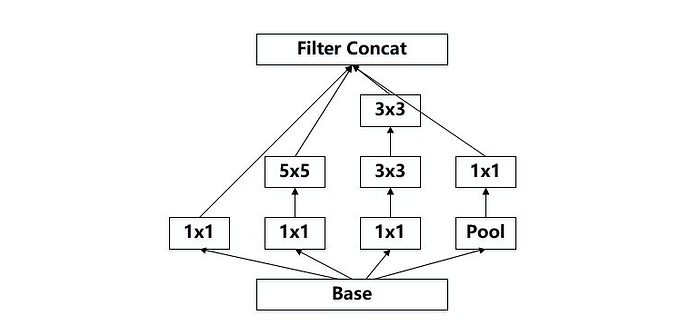

I need to build an Inception network from scratch in PyTorch for one of my projects. I have been going through some tutorials, but most of them advise using pretrained weights. After some research, I built a small Inception block for the MNIST dataset.

I just need to know whether it is correct or not.

**Input size: 128, 1, 28, 28**

(128 is the batch size)

    import torch
    import torch.nn as nn


    class InceptionA(nn.Module):
        def __init__(self):
            super().__init__()

            # Branch 1: 1x1 conv
            self.conv1x1 = nn.Conv2d(in_channels=1, out_channels=32, kernel_size=1)

            # Branch 2: 1x1 conv, then 5x5 conv (padding=2 keeps 28x28)
            self.conv1x1_1 = nn.Conv2d(in_channels=1, out_channels=32, kernel_size=1)
            self.conv5x5 = nn.Conv2d(in_channels=32, out_channels=64, kernel_size=5, padding=2)

            # Branch 3: 1x1 conv, then two 3x3 convs
            # (no padding: 28 -> 26, then padding=2: 26 -> 28)
            self.conv1x1_2 = nn.Conv2d(in_channels=1, out_channels=64, kernel_size=1)
            self.conv3x3 = nn.Conv2d(in_channels=64, out_channels=128, kernel_size=3)
            self.conv3x3_1 = nn.Conv2d(in_channels=128, out_channels=196, kernel_size=3, padding=2)

            # Branch 4: 3x3 max pool (stride 1, padding 1), then 1x1 conv
            self.pooling = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)
            self.conv1x1_3 = nn.Conv2d(in_channels=1, out_channels=64, kernel_size=1, padding=0)

            # Linear layers: 32 + 64 + 196 + 64 = 356 channels after concatenation
            self.fc1 = nn.Linear(356 * 28 * 28, 32)
            self.fc2 = nn.Linear(32, 10)

        def forward(self, x):
            # 1st branch
            conv1x1 = torch.relu(self.conv1x1(x))

            # 2nd branch
            conv1x1_1 = torch.relu(self.conv1x1_1(x))
            conv5x5 = torch.relu(self.conv5x5(conv1x1_1))

            # 3rd branch
            conv1x1_2 = torch.relu(self.conv1x1_2(x))
            conv3x3 = torch.relu(self.conv3x3(conv1x1_2))
            conv3x3_1 = torch.relu(self.conv3x3_1(conv3x3))

            # 4th branch
            pooling = torch.relu(self.pooling(x))
            conv1x1_3 = torch.relu(self.conv1x1_3(pooling))

            # Concatenate the four branches along the channel dimension
            output = [conv1x1, conv5x5, conv3x3_1, conv1x1_3]
            out = torch.cat(output, 1)

            # Flatten and classify
            out = out.view(-1, 356 * 28 * 28)
            x = self.fc1(out)
            x = self.fc2(x)
            return x
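
For anyone checking the shapes: the `356*28*28` passed to `fc1` depends on every branch ending at 28x28 and on the channel counts adding up. Below is a rough sketch of that arithmetic in plain Python, assuming the usual Conv2d/MaxPool2d output-size formula with dilation 1. `conv_out` is a helper name made up for this illustration, not a PyTorch function.

```python
def conv_out(size, kernel, padding=0, stride=1):
    """Spatial output size of a conv/pool layer (dilation 1 assumed)."""
    return (size + 2 * padding - kernel) // stride + 1


side = 28  # MNIST height and width

# Branch 1: 1x1 conv
b1 = conv_out(side, kernel=1)
# Branch 2: 1x1 conv, then 5x5 conv with padding=2
b2 = conv_out(conv_out(side, kernel=1), kernel=5, padding=2)
# Branch 3: 1x1 conv, 3x3 conv (no padding), 3x3 conv with padding=2
b3_mid = conv_out(conv_out(side, kernel=1), kernel=3)
b3 = conv_out(b3_mid, kernel=3, padding=2)
# Branch 4: 3x3 max pool (stride 1, padding 1), then 1x1 conv
b4 = conv_out(conv_out(side, kernel=3, padding=1), kernel=1)

# Channels after concatenating the four branches along dim 1
channels = 32 + 64 + 196 + 64
flat_features = channels * side * side

print(b1, b2, b3_mid, b3, b4)
print(channels, flat_features)
```

By this arithmetic, branch 3 shrinks to 26x26 after the first 3x3 conv and is padded back to 28x28 by the second, so all four branches should line up for `torch.cat` along dimension 1. Using `padding=1` on both 3x3 convs would be another way to keep 28x28 throughout, but that is a design choice rather than a required fix.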

Please let me know if anyone needs more info.

Stay safe!