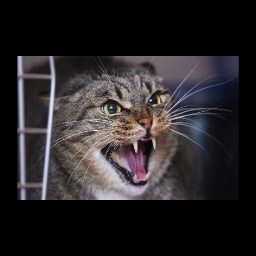

I am trying to learn a piecewise affine transform model that converts input images into output data. One example transformation (first image to second) would be:

The input images, however, are neither simple downsamples with borders nor distortion-free rectangles, which is why I need a piecewise affine transform to work.

I use the following code to "learn" the transform, but I am obviously going wrong somewhere, as the loss is stuck from the very first epoch (I use MSE loss):

```python
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()

        # Spatial transformer localization network
        self.localization = nn.Sequential(
            nn.Conv2d(3, 8, kernel_size=7),
            nn.MaxPool2d(2, stride=2),
            nn.ReLU(True),
            nn.Conv2d(8, 10, kernel_size=5),
            nn.MaxPool2d(2, stride=2),
            nn.MaxPool2d(2, stride=2),
            nn.ReLU(True)
        )

        # Regressor for the 3 * 2 affine matrix
        self.fc_loc = nn.Sequential(
            nn.Linear(10 * 30 * 30, 360),
            nn.ReLU(True),
            nn.Linear(360, 3 * 2)
        )

        # Initialize the weights/bias with identity transformation
        self.fc_loc[2].weight.data.zero_()

    # Spatial transformer network forward function
    def stn(self, x):
        xs = self.localization(x)
        xs = xs.view(-1, 10 * 30 * 30)
        theta = self.fc_loc(xs)
        theta = theta.view(-1, 2, 3)

        grid = F.affine_grid(theta, x.size())
        print(x.shape)
        x = F.grid_sample(x, grid)
        return x

    def forward(self, x):
        # transform the input
        x = self.stn(x)
        return x
```

The code is heavily borrowed from the PyTorch STN example, as I wanted to try things out first before stepping it up.
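One detail that may be worth checking: the comment in the model mentions initializing the weights/bias with the identity transformation, but the posted code only zeroes the weights. The PyTorch STN example also copies an identity matrix into the bias of the last regressor layer. Below is a minimal sketch of that initialization on a stand-alone layer. The names `fc`, `features`, and the 32x32 dummy batch are assumptions for illustration and not part of the model above.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Hypothetical stand-in for the final regressor layer (fc_loc[2] in the post).
fc = nn.Linear(360, 3 * 2)

# Zero weights plus an identity bias make theta equal to
# [[1, 0, 0], [0, 1, 0]] for every input at the start of training.
fc.weight.data.zero_()
fc.bias.data.copy_(torch.tensor([1, 0, 0, 0, 1, 0], dtype=torch.float))

features = torch.randn(4, 360)   # dummy localization features
theta = fc(features).view(-1, 2, 3)

x = torch.rand(4, 3, 32, 32)     # dummy image batch
grid = F.affine_grid(theta, x.size(), align_corners=False)
out = F.grid_sample(x, grid, align_corners=False)

# With an identity theta the warp should reproduce the input.
print(torch.allclose(out, x, atol=1e-4))
```

With this starting point the network begins from "no warp" and only has to learn deviations from it, which is probably easier than recovering from an arbitrary initial transform.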

Any help would be highly appreciated.

Edit:

This is the output from the model –

The loss is stuck because the output is the same no matter what the input is.
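One possible explanation, which I have not confirmed on the real model: if the predicted `theta` has a (near) zero linear part, every output pixel samples (almost) the same source location, so the warped image comes out flat. The sketch below reproduces that effect with a hand-written degenerate `theta`; the translation values and the 32x32 dummy batch are assumptions chosen only for illustration.

```python
import torch
import torch.nn.functional as F

x = torch.rand(2, 3, 32, 32)  # dummy image batch

# Assumed degenerate theta: zero linear part, small translation only.
theta = torch.tensor([[0.0, 0.0, 0.1],
                      [0.0, 0.0, -0.2]]).repeat(2, 1, 1)  # shape (2, 2, 3)

grid = F.affine_grid(theta, x.size(), align_corners=False)
out = F.grid_sample(x, grid, align_corners=False)

# Every output pixel samples the same source point, so each channel is flat.
spatial_spread = out.flatten(2).std(dim=2)  # per-image, per-channel std
print(spatial_spread.max())
```

Printing `theta` inside `stn` for a few different inputs would show whether the model is in this regime.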

Are there any constraints on defining the grid? Perhaps something I can build into the model that it would otherwise take a long time to learn by itself.
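As far as I understand the conventions, `F.grid_sample` expects a grid of shape `(N, H_out, W_out, 2)` whose last dimension holds `(x, y)` sampling coordinates normalized to `[-1, 1]`, and anything outside that range is filled by the padding mode (zeros by default). Under those assumptions, a piecewise affine warp could be sketched by building one affine grid per region and stitching them together. The helper `piecewise_affine_grid` and the left/right split are hypothetical, meant only to show the idea rather than a finished approach.

```python
import torch
import torch.nn.functional as F


def piecewise_affine_grid(theta_left, theta_right, size):
    """Hypothetical helper: one affine map per half of the output image."""
    grid_l = F.affine_grid(theta_left, size, align_corners=False)
    grid_r = F.affine_grid(theta_right, size, align_corners=False)

    # Identity grid gives each output pixel's own normalized (x, y) position.
    identity = torch.tensor([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
                            dtype=theta_left.dtype, device=theta_left.device)
    base = F.affine_grid(identity.repeat(size[0], 1, 1), size,
                         align_corners=False)

    # Pixels with x < 0 lie in the left half of the output image.
    left_mask = (base[..., 0] < 0).unsqueeze(-1)  # (N, H, W, 1)
    return torch.where(left_mask, grid_l, grid_r)


x = torch.rand(1, 3, 8, 8)
eye = torch.tensor([[[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]])
shift = torch.tensor([[[1.0, 0.0, 0.25], [0.0, 1.0, 0.0]]])

grid = piecewise_affine_grid(eye, shift, x.size())
out = F.grid_sample(x, grid, align_corners=False)
print(grid.shape)  # torch.Size([1, 8, 8, 2])
```

Note that this sketch does not force the two pieces to agree along the seam; if continuity matters, the per-region parameters would need an extra constraint or a control-point parameterization.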