Hi guys,

I have been trying to get into reinforcement learning and have been following a Udemy course, which worked perfectly fine until it came to implementing a deep Q-learning algorithm.

The issue I am having is that my model works fine on the CPU (the only drawback being that it is significantly slower), with the expected learning curve. The agent seems to learn how to play Pong after 220 games and reaches an average score of 16 (over the last 100 games) after 500 games.

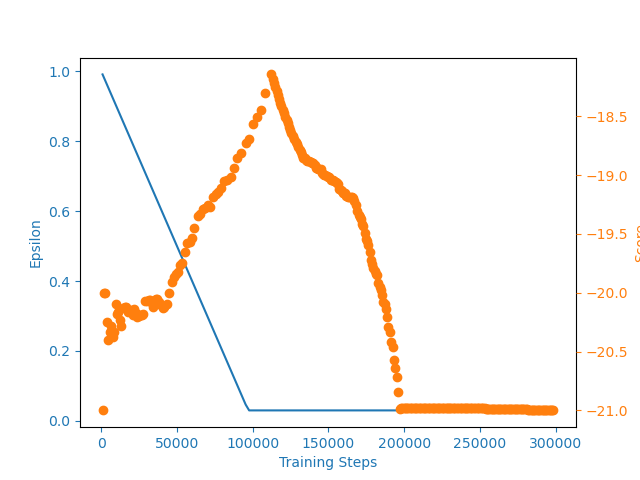
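For clarity, the average quoted above is taken over the last 100 games. A minimal sketch of how such a running average can be computed is below. The function name `running_average` and the example scores are made up for illustration and are not taken from the course code or from my runs.

```python
from collections import deque


def running_average(scores, window=100):
    """Return the mean of the most recent `window` scores after each game."""
    recent = deque(maxlen=window)  # the oldest score is dropped automatically
    averages = []
    for score in scores:
        recent.append(score)
        # Early on, fewer than `window` games exist, so average what is there.
        averages.append(sum(recent) / len(recent))
    return averages


# Hypothetical Pong scores (each game ends between -21 and 21).
example_scores = [-21, -19, -20, -18]
print(running_average(example_scores, window=2))
# → [-21.0, -20.0, -19.5, -19.0]
```

With `window=100` this gives one point per game, which is what the learning curves here plot.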

If I run it on my GPU (GTX 980M) to improve performance, though, the learning curve seems to fall off a cliff at some point, peaking at an average of -18 points and then dropping back to -21. The learning curve for the runs on the GPU looks as follows:

I have since reverted to simply cloning the repository from the course (Deep-Q-Learning-Paper-To-Code/DQN at master · philtabor/Deep-Q-Learning-Paper-To-Code · GitHub), but the issue persists and I have not yet found out why. I recently reinstalled PyTorch and CUDA without success (Python 3.6.8, Torch 1.5.0, CUDA 10.1).

Any ideas as to why I am getting such an odd behaviour?

Any input would be greatly appreciated.

Thanks in advance,

Alex