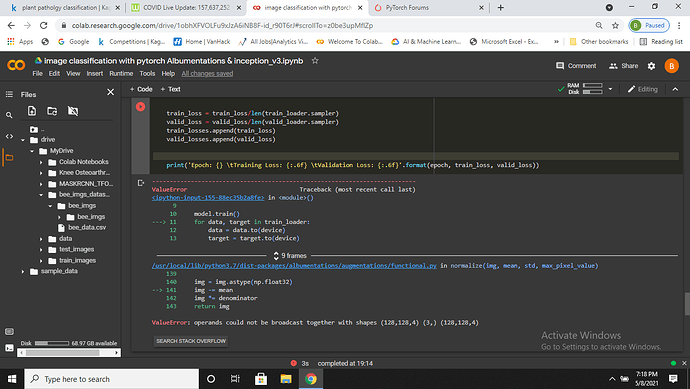

Some of the images have a different number of channels. How can I convert them so that all images have the same number of channels?

You could either write a custom transformation or use `transforms.Lambda` to apply an operation that yields the desired number of channels. Here is a simple example, which slices off any channels beyond the first three:

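    import torch
    from torchvision import transforms

    transform = transforms.Compose([
        transforms.ToTensor(),
        # Keep only the first 3 channels (e.g. drop alpha);
        # tensors with 3 or fewer channels pass through unchanged
        transforms.Lambda(lambda x: x[:3] if x.size(0) > 3 else x),
        transforms.Normalize(mean=[0.5, 0.5, 0.5], std=[0.5, 0.5, 0.5]),
    ])

    # 3-channel (RGB) input
    img = transforms.ToPILImage()(torch.randn(3, 24, 24))
    out = transform(img)
    print(out.shape)
    # torch.Size([3, 24, 24])

    # 4-channel (RGBA) input
    img = transforms.ToPILImage()(torch.randn(4, 24, 24))
    out = transform(img)
    print(out.shape)
    # torch.Size([3, 24, 24])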
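
The other option mentioned above, a custom transformation, could look roughly like the sketch below. `ToThreeChannels` is a hypothetical name, not part of torchvision, and repeating a single grayscale channel three times is an assumption about what you want for 1-channel images. It expects a `(C, H, W)` tensor, so it would go after `transforms.ToTensor()` in a `Compose`.

```python
import torch


class ToThreeChannels:
    """Hypothetical custom transform: coerce a (C, H, W) tensor to 3 channels."""

    def __call__(self, x: torch.Tensor) -> torch.Tensor:
        c = x.size(0)
        if c == 3:
            return x
        if c > 3:
            # e.g. RGBA: keep the first three channels, drop the rest
            return x[:3]
        if c == 1:
            # grayscale (assumption): copy the single channel three times
            return x.repeat(3, 1, 1)
        raise ValueError(f"cannot map {c} channels to 3")


to_rgb = ToThreeChannels()
for channels in (1, 3, 4):
    out = to_rgb(torch.randn(channels, 24, 24))
    print(channels, "->", tuple(out.shape))
```

Unlike the lambda, this also handles 1-channel inputs, which would otherwise reach `Normalize` with the wrong number of channels. If your images are still PIL images at that point, calling `img.convert("RGB")` before `ToTensor()` may be a simpler alternative.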
