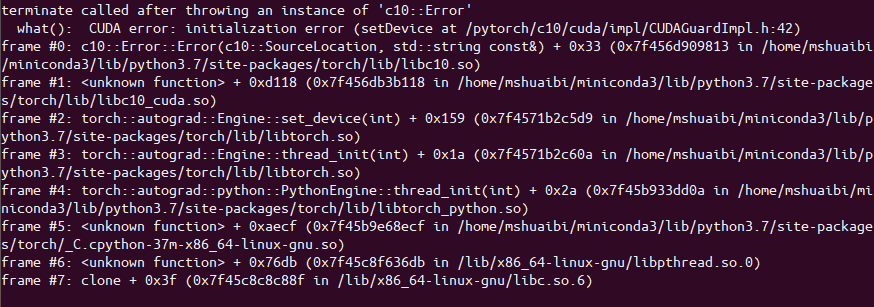

I’m trying to train multiple models (ensembling) in an online framework: if a data point arrives that my model is uncertain about, I retrain the models (taking the average) and proceed. However, after the first round of training, when I come to retrain the models I get `terminate called after throwing an instance of 'c10::Error'`.

The error looks something like this:

I’m training my models in parallel with something like this:

    import multiprocessing

    def train(training_ensembles):
        # parallelize each model on its own CPU
        pool = multiprocessing.Pool(len(training_ensembles))
        results = pool.map(train_model, training_ensembles)
        return results

When I train the models in series I run into no issues. Something about the way I’m parallelizing these is causing issues with PyTorch. Any help is appreciated - Thanks!
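For anyone trying to reproduce this, here is a minimal sketch of one variation that may be worth testing. It assumes the crash is related to workers being forked from a parent process that already holds PyTorch state from the first training round, which is only a guess. The sketch requests the `spawn` start method explicitly and closes the pool after each round. The `train_model` body and the `start_method` parameter are hypothetical stand-ins, not the real training code.

```python
import multiprocessing


def train_model(model_config):
    # Hypothetical stand-in for the real per-model training function.
    # It is defined at module top level so that worker processes can import it.
    return {"name": model_config["name"], "trained": True}


def train(training_ensembles, start_method="spawn"):
    # Assumption: starting each worker as a fresh interpreter ("spawn") avoids
    # inheriting whatever state the parent built up in the previous round.
    ctx = multiprocessing.get_context(start_method)
    # The context manager shuts the pool down at the end of every round,
    # so no workers are left over when retraining is triggered again.
    with ctx.Pool(processes=len(training_ensembles)) as pool:
        return pool.map(train_model, training_ensembles)
```

With `spawn`, the call to `train(...)` needs to sit under an `if __name__ == "__main__":` guard in the main script, and everything passed to the workers has to be picklable. If the error persists with this change, the start method is probably not the cause.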