import torch
import torch.nn as nn
import time
import numpy as np
import os
from Cifar10 import dataloader
import torchvision.models as models

os.environ['CUDA_VISIBLE_DEVICES'] = '2'

test_loader = dataloader.get_test_loader('../../../../data/cifar10/',
                                         batch_size=125,
                                         )

device = 'cuda'

vgg16 = models.vgg16(pretrained=True).to(device)

criterion = nn.CrossEntropyLoss().cuda()

def test():
    vgg16.eval()
    test_loss = 0
    correct = 0
    total = 0
    global total_acc_test_max
    acc_test_max = []

    with torch.no_grad():
        for batch_idx, (images, labels) in enumerate(test_loader):
            images, labels = images.to(device), labels.to(device)

            outputs = vgg16(images)
            loss = criterion(outputs, labels)
            test_loss += loss.data

            _, predicted = torch.max(outputs.data, 1)
            total += labels.size(0)
            correct += predicted.eq(labels.data).cpu().sum()

            if batch_idx % 10 == 9:
                acc_test_max.append(100. * correct.item() / total)
                total_acc_test_max.append(100. * correct.item() / total)
                print('[TEST] : Loss: ({:.4f}) | Acc: ({:.2f}%) ({}/{})'
                      .format(test_loss / (batch_idx + 1), 100. * correct.item() / total, correct, total))

    print('*************** Val Mean Accuracy : ({:.2f}%) ***************'.format(np.mean(acc_test_max)))
    print('************ Total Val Mean Accuracy : ({:.2f}%) ************'.format(np.mean(total_acc_test_max)))
    print()

start_time = time.time()
total_acc_test_max = []

for epoch in range(0, 5):
    print("*************** {} ***************".format(epoch + 1))
    test()

print("--- {:.0f} minutes {:.2f} seconds ---".format((time.time() - start_time) // 60, (time.time() - start_time) % 60))

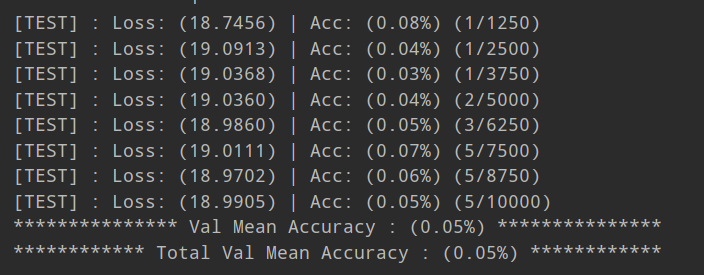

I load the pretrained VGG16 weights from torchvision and evaluate the model on the CIFAR-10 test set, but the accuracy is very low.

What is the problem?
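
One possible cause, offered as a sketch and not a confirmed diagnosis: torchvision's pretrained VGG16 weights come from ImageNet, so the last layer outputs 1000 logits, while CIFAR-10 labels only run from 0 to 9. The predicted indices then refer to ImageNet classes and would rarely match the CIFAR-10 labels. The snippet below shows one way to swap the final layer for a 10-class head. `replace_classifier_head` and `TinyStandIn` are hypothetical names introduced here for illustration; `TinyStandIn` only mimics the `classifier` layout of torchvision's VGG so that the example runs without downloading weights.

```python
import torch
import torch.nn as nn


def replace_classifier_head(model: nn.Module, num_classes: int) -> nn.Module:
    """Replace the last Linear layer of model.classifier.

    Assumes model.classifier is an nn.Sequential that ends in nn.Linear,
    which is the layout torchvision's VGG models use.
    """
    last = model.classifier[-1]
    if not isinstance(last, nn.Linear):
        raise TypeError("expected the classifier to end in nn.Linear")
    # The new layer is randomly initialised and still needs training.
    model.classifier[-1] = nn.Linear(last.in_features, num_classes)
    return model


class TinyStandIn(nn.Module):
    """Hypothetical small model with a VGG-style 1000-way classifier."""

    def __init__(self):
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(3, 4, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.AdaptiveAvgPool2d(1),
        )
        self.classifier = nn.Sequential(
            nn.Linear(4, 8),
            nn.ReLU(),
            nn.Linear(8, 1000),  # same output width as an ImageNet head
        )

    def forward(self, x):
        x = self.features(x)
        return self.classifier(torch.flatten(x, 1))


if __name__ == "__main__":
    model = TinyStandIn()
    batch = torch.randn(2, 3, 32, 32)  # CIFAR-10 sized dummy images
    print(model(batch).shape)          # 1000 logits per image
    replace_classifier_head(model, 10)
    print(model(batch).shape)          # 10 logits per image
```

With the real model the call would be `replace_classifier_head(vgg16, 10)`. Because the new head starts from random weights, accuracy should stay near chance until the model is fine-tuned on the CIFAR-10 training set. It may also be worth checking that the test images are preprocessed the way the pretrained weights expect (ImageNet mean/std normalisation).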