How can I prevent overfitting when my dataset is not very large? It has 5 classes and about 15k images in total.

I have tried data augmentation, but it doesn't help much.

```python
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self, num_channels):
        super().__init__()
        self.num_channels = num_channels

        self.conv1 = nn.Conv2d(in_channels=1,
                               out_channels=self.num_channels,
                               kernel_size=3,
                               stride=2,
                               padding=0)
        self.bn1 = nn.BatchNorm2d(self.num_channels)

        self.conv2 = nn.Conv2d(in_channels=self.num_channels,
                               out_channels=self.num_channels * 2,
                               kernel_size=3,
                               stride=1,
                               padding=0)
        self.bn2 = nn.BatchNorm2d(self.num_channels * 2)

        self.conv3 = nn.Conv2d(in_channels=self.num_channels * 2,
                               out_channels=self.num_channels * 4,
                               kernel_size=3,
                               stride=1,
                               padding=0)
        self.bn3 = nn.BatchNorm2d(self.num_channels * 4)

        self.fc1 = nn.Linear(self.num_channels * 4 * 6 * 6, self.num_channels * 4)
        self.fcbn1 = nn.BatchNorm1d(self.num_channels * 4)
        self.fc2 = nn.Linear(self.num_channels * 4, 5)  # 5 classes

    def forward(self, x):                # assumed input: 1 x 128 x 128
        x = self.bn1(self.conv1(x))      # output: num_channels x 63 x 63
        x = F.relu(F.max_pool2d(x, 2))   # output: num_channels x 31 x 31
        x = self.bn2(self.conv2(x))      # output: num_channels*2 x 29 x 29
        x = F.relu(F.max_pool2d(x, 2))   # output: num_channels*2 x 14 x 14
        x = self.bn3(self.conv3(x))      # output: num_channels*4 x 12 x 12
        x = F.relu(F.max_pool2d(x, 2))   # output: num_channels*4 x 6 x 6

        # Flatten
        x = x.view(-1, self.num_channels * 4 * 6 * 6)

        # Fully connected layers
        x = F.relu(self.fcbn1(self.fc1(x)))
        # training=self.training turns dropout off after model.eval();
        # training=True would keep it active during validation too
        x = F.dropout(x, p=0.5, training=self.training)
        x = self.fc2(x)
        return x
```
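The flatten size `self.num_channels * 4 * 6 * 6` only works out if each input image is 1 × 128 × 128 (the size the original shape comments point to; any side length from 121 to 136 also ends at 6 × 6). As a sanity check, the following is a minimal pure-Python sketch that traces the spatial size through the layers with the output-size formula shared by `nn.Conv2d` and `F.max_pool2d` (dilation 1, `ceil_mode=False`). `out_size` and `trace_net` are hypothetical helpers for illustration, not PyTorch functions.

```python
def out_size(size, kernel, stride=1, padding=0):
    # Output size for nn.Conv2d / F.max_pool2d with dilation=1 and
    # ceil_mode=False: floor((size + 2*padding - kernel) / stride) + 1
    return (size + 2 * padding - kernel) // stride + 1


def trace_net(size=128):
    """Return (layer, spatial size) pairs for a square size x size input to Net."""
    steps = [
        ("conv1", 3, 2),  # kernel_size=3, stride=2, padding=0
        ("pool1", 2, 2),  # F.max_pool2d(x, 2): stride defaults to kernel size
        ("conv2", 3, 1),  # kernel_size=3, stride=1, padding=0
        ("pool2", 2, 2),
        ("conv3", 3, 1),
        ("pool3", 2, 2),
    ]
    trace = []
    for name, kernel, stride in steps:
        size = out_size(size, kernel, stride)
        trace.append((name, size))
    return trace


for name, size in trace_net(128):
    print(f"{name}: {size} x {size}")
# conv1: 63 x 63
# pool1: 31 x 31
# conv2: 29 x 29
# pool2: 14 x 14
# conv3: 12 x 12
# pool3: 6 x 6
```

If the last size printed is not 6, the `x.view(...)` call will either fail or silently mix samples across the batch dimension.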

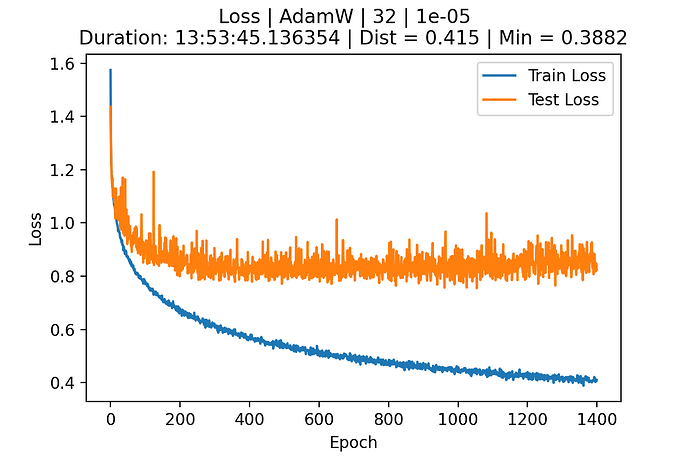

Here is the loss