I am using the code:

import torch
import torchvision
import torchvision.transforms as transforms

transform = transforms.Compose(
    [transforms.ToTensor(),
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

trainset = torchvision.datasets.CIFAR10(root='./data', train=True,
                                        download=True, transform=transform)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
                                          shuffle=True, num_workers=2)

dataiterator = iter(trainloader)

However, once the program reaches the line:

dataiterator = iter(trainloader)

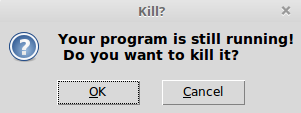

It crashes and asks me twice whether I want to kill the Python program (as if I had pressed the close-window button). The program is then unable to continue and hangs. The problem persists after changing the:

iter()

to a for loop:

for image, label in trainloader:

This also crashes the program every time. Additionally, each time it asks me to kill the program, it leaves a python3 process running in the background, and each of these uses about 350 MB. I am using Linux Mint 18.1 on a Lenovo ThinkPad X230. Has anybody come across this error before?
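
For anyone trying to reproduce this, here is a minimal sketch that takes the CIFAR-10 download out of the picture. It assumes a random in-memory `TensorDataset` with CIFAR-10-shaped tensors as a stand-in for the real data, and the helper name `first_batch` is hypothetical. With `num_workers` greater than 0 the `DataLoader` loads batches in separate worker processes, so the leftover python3 processes may be those workers. If only the `num_workers=2` run hangs, that would point at the multiprocessing side rather than the dataset, though this is a guess and not a confirmed diagnosis.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset


def first_batch(num_workers):
    """Build a small loader and fetch one batch (hypothetical helper)."""
    # Stand-in data with CIFAR-10 shapes (assumption: random tensors,
    # not the real dataset).
    images = torch.randn(16, 3, 32, 32)
    labels = torch.randint(0, 10, (16,))
    dataset = TensorDataset(images, labels)
    loader = DataLoader(dataset, batch_size=4, shuffle=True,
                        num_workers=num_workers)
    # iter() is the point where worker processes are started
    # when num_workers > 0.
    return next(iter(loader))


if __name__ == "__main__":
    # Compare loading in the main process (0) with two worker processes (2).
    for workers in (0, 2):
        batch_images, batch_labels = first_batch(workers)
        print(workers, tuple(batch_images.shape), tuple(batch_labels.shape))
```

If the `num_workers=0` run completes and the `num_workers=2` run does not, setting `num_workers=0` in the original `trainloader` would at least be a way to keep working while the cause is tracked down.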