Hello!

I am new to the deep learning world.

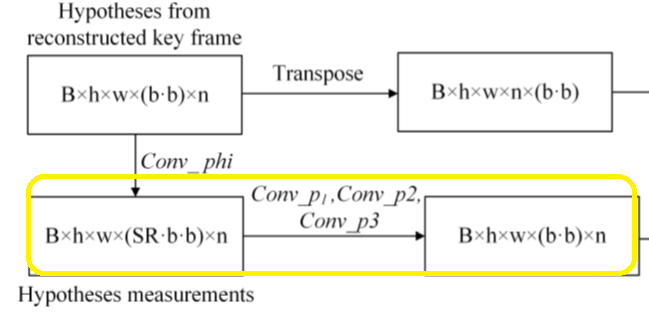

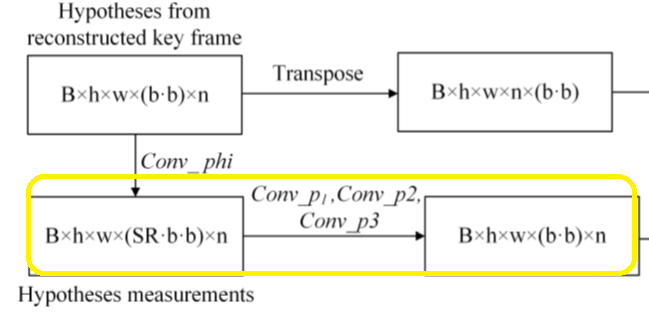

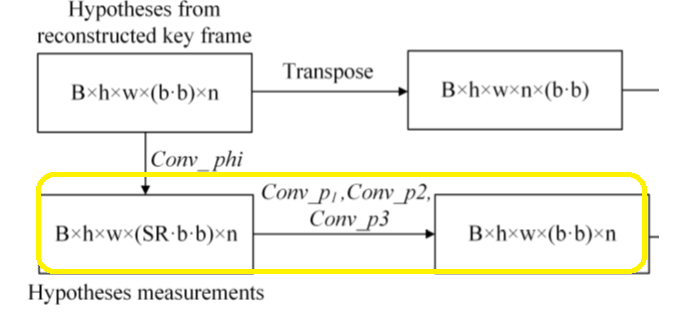

I don’t know how to code Conv p1, p2, p3.

SR is lower than one.

Would you mind helping me code this?

Which dimension does (SR*b*b) describe?

If it’s the channel dimension, you could create a conv layer with in_channels=SR*b*b and out_channels=b*b.

However, based on the provided dimensions I’m a bit confused what type of input you are dealing with.

Could you describe your use case a bit?

Hi!

Thanks for your reply.

Suppose I have an image. First I extract patches, then flatten each patch, and then take samples (SR is my sampling rate). Now I need a conv layer that reconstructs my sampled data.

I don’t know how to code this part.

Should I use Conv3d? Conv2d?

I can’t code it.

I’m still unsure what n stands for, and the highlighted part doesn’t seem to be drawn in the figure.

However, since SR*b*b represents the channels in the output of Conv_phi, I assume b*b represents the channel dimension of the output of Conv_px.

If that’s the case, nn.Conv2d(SR*b*b, b*b, kernel_size, stride, padding) should work.
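A minimal sketch of that suggestion, assuming the sampled measurements are stored along the channel dimension of a 4D tensor. Since SR*b*b is not an integer for SR = 0.1 and b = 8, the channel count is rounded up here. The 1x1 kernel and the small spatial size are illustrative assumptions, not something fixed by the figure.

```python
import math
import torch
import torch.nn as nn

SR, b = 0.1, 8                 # sampling rate and block size used in this thread
m = math.ceil(SR * b * b)      # measurements per block, rounded up (assumption): 7

# 1x1 convolution mapping m measurement channels back to b*b values per block
conv_p = nn.Conv2d(in_channels=m, out_channels=b * b, kernel_size=1)

x = torch.rand(2, m, 4, 7)     # [batch, channels, height, width], sizes chosen for illustration
y = conv_p(x)
print(y.shape)                 # torch.Size([2, 64, 4, 7])
```

Note that in_channels and out_channels must be Python integers, so a fractional SR*b*b has to be rounded before it is passed to the layer.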

Thanks for your reply.

n stands for the number of hypotheses. Suppose there is a window with our patch at its center. There are n patches inside the window. In other words, I have a key frame for each image, and I should extract the n patches inside the window that are contiguous to the patch in the key frame.

Anyway…

I tried your code, but I got an error.

code:

import torch

import numpy as np

import torch.nn as nn

B,h,w,Sr,b,n=16,32,56,0.1,8,25

kernel_size=(1,1,int(np.ceil(0.1*b*b)))

x=torch.rand(B,h,w,int(np.ceil(0.1*b*b)),n)

conv=nn.Conv2d(int(np.ceil(0.1*b*b)),b*b,kernel_size)

conv(x)

B: batch size, h: height, w: width, SR: sample rate, b: block size.

My kernel size should be (1, 1, SR*b*b).

Out channels = b*b.

Would you please help me solve this error?

RuntimeError: expected stride to be a single integer value or a list of 3 values to match the convolution dimensions, but got stride=[1, 1]

If n is supposed to be the “depth” of the input, you would have to create the input as [batch_size, channels, depth, height, width]:

x=torch.rand(B,int(np.ceil(0.1*b*b)),n,h,w,)

conv=nn.Conv3d(int(np.ceil(0.1*b*b)),b*b,kernel_size)

and use a 3D convolution.
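If the data already exists in the layout from the question, [B, h, w, channels, n], it can be rearranged instead of recreated. The sketch below assumes that layout and uses a 1x1x1 kernel and small sizes purely for illustration; the kernel tuple of nn.Conv3d is ordered (depth, height, width).

```python
import math
import torch
import torch.nn as nn

B, h, w, SR, b, n = 2, 8, 14, 0.1, 8, 5    # small sizes for illustration
m = math.ceil(SR * b * b)                   # 7 channels

x_last = torch.rand(B, h, w, m, n)          # layout from the question: [B, h, w, channels, n]
x = x_last.permute(0, 3, 4, 1, 2).contiguous()  # -> [B, channels, n, h, w]

# kernel_size is (depth, height, width); the 1x1x1 choice is an assumption
conv = nn.Conv3d(m, b * b, kernel_size=(1, 1, 1))
out = conv(x)
print(x.shape)      # torch.Size([2, 7, 5, 8, 14])
print(out.shape)    # torch.Size([2, 64, 5, 8, 14])
```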

Thanks for your reply. You solved my problem, but there is still a small issue:

import torch

import numpy as np

import torch.nn as nn

B,h,w,Sr,b,n=16,32,56,0.1,8,25

kernel_size=(1,1,int(np.ceil(0.1*b*b)))

x=torch.rand(B,int(np.ceil(0.1*b*b)),n,h,w)

print(x.shape)

conv=nn.Conv3d(int(np.ceil(0.1*b*b)),b*b,kernel_size)

conv(x).shape

the output was:

torch.Size([16, 7, 25, 32, 56])

torch.Size([16, 64, 25, 32, 50])

Why did 56 change to 50?

Would you mind explaining it, or pointing me to a source that explains this kind of shape change?

I had another problem, too.

#conv_phi

import torch

import numpy as np

import torch.nn as nn

B,h,w,Sr,b,n=16,32,56,0.1,8,25

kernel_size=(1,1,b*b)

x=torch.rand(B,b*b,h,w)

print(x.shape)

conv=nn.Conv3d(b*b,int(np.ceil(0.1*b*b)),kernel_size)

print(conv)

conv(x).shape

error:

Expected 5-dimensional input for 5-dimensional weight 7 64 1 1 64, but got 4-dimensional input of size [16, 64, 32, 56] instead

I would be very thankful if anybody could help me solve this error and explain how to deal with such errors.

Your kernel size is (1, 1, int(np.ceil(0.1*b*b))) = (1, 1, 7), so the width dimension is reduced from 56 to 56 - 7 + 1 = 50, since you are not using any padding.
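The size change follows the output-size formula given in the PyTorch convolution docs. The sketch below applies it to the width dimension, where the kernel width in the posted code is ceil(0.1*8*8) = 7. The helper name conv_out_size is hypothetical, and the padding of 3 is just one way to keep the width at 56.

```python
import torch
import torch.nn as nn

def conv_out_size(size, kernel, stride=1, padding=0, dilation=1):
    # Output length of one convolution dimension (hypothetical helper)
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1

print(conv_out_size(56, 7))              # 50
print(conv_out_size(56, 7, padding=3))   # 56

x = torch.rand(1, 7, 2, 4, 56)           # [batch, channels, depth, height, width]
no_pad = nn.Conv3d(7, 64, kernel_size=(1, 1, 7))
same_w = nn.Conv3d(7, 64, kernel_size=(1, 1, 7), padding=(0, 0, 3))
print(no_pad(x).shape)                   # torch.Size([1, 64, 2, 4, 50])
print(same_w(x).shape)                   # torch.Size([1, 64, 2, 4, 56])
```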

The last error is raised because, based on the information you’ve provided, I assumed that n is the depth dimension, so the nn.Conv3d layer expects a 5D input tensor as given in my previous post.
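For a 4D input like the one in the conv_phi snippet, two possible ways forward are sketched below: keep the input 4D and use nn.Conv2d, or add a depth dimension of size 1 and keep nn.Conv3d. The 1x1 kernels and the small tensor sizes are assumptions made for illustration, not values taken from the figure.

```python
import math
import torch
import torch.nn as nn

B, h, w, SR, b = 2, 8, 14, 0.1, 8
m = math.ceil(SR * b * b)                 # 7 output channels

x = torch.rand(B, b * b, h, w)            # 4D: [batch, channels, height, width]

# Option 1: keep the 4D input and use a 2D convolution
conv2d = nn.Conv2d(b * b, m, kernel_size=1)
out2d = conv2d(x)
print(out2d.shape)                        # torch.Size([2, 7, 8, 14])

# Option 2: add a depth dimension of size 1 and use a 3D convolution
conv3d = nn.Conv3d(b * b, m, kernel_size=(1, 1, 1))
x5 = x.unsqueeze(2)                       # [batch, channels, 1, height, width]
out3d = conv3d(x5)
print(out3d.shape)                        # torch.Size([2, 7, 1, 8, 14])
```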

Thanks for your help.