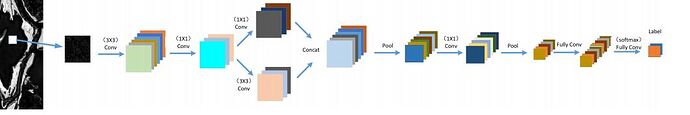

I wrote the following code to implement this architecture:

```python
import torch
import torch.nn as nn


class Net(nn.Module):
    def __init__(self):
        super().__init__()
        # Block 1: 1 -> 8 channels, 3x3 conv (size-preserving), then 2x2 max-pool
        self.cnn1 = nn.Conv2d(in_channels=1, out_channels=8, kernel_size=3, stride=1, padding=1)
        self.batchnorm1 = nn.BatchNorm2d(8)
        self.relu = nn.ReLU()
        self.maxpool1 = nn.MaxPool2d(kernel_size=2)
        # Block 2: 8 -> 10 channels, 5x5 conv, then 2x2 max-pool
        self.cnn2 = nn.Conv2d(in_channels=8, out_channels=10, kernel_size=5, stride=1, padding=1)
        self.batchnorm2 = nn.BatchNorm2d(10)
        self.maxpool2 = nn.MaxPool2d(kernel_size=2)
        # Block 3: meant to take the concatenated features (8 + 10 = 18 channels)
        self.cnn3 = nn.Conv2d(in_channels=18, out_channels=32, kernel_size=5, stride=1, padding=1)
        self.batchnorm3 = nn.BatchNorm2d(32)
        # Fully connected head: 32 -> 20 -> 10 -> 2
        self.fc1 = nn.Linear(in_features=32, out_features=20)
        self.fc2 = nn.Linear(in_features=20, out_features=10)
        self.fc3 = nn.Linear(in_features=10, out_features=2)

    def forward(self, x):
        # Block 1
        out = self.cnn1(x)
        out = self.batchnorm1(out)
        out = self.relu(out)
        out = self.maxpool1(out)
        print(out.size())  # debug: shape after block 1

        # Block 2
        out1 = self.cnn2(out)
        out1 = self.batchnorm2(out1)
        out1 = self.relu(out1)
        out1 = self.maxpool2(out1)
        print(out1.size())  # debug: shape after block 2

        # Concatenate the outputs of block 1 and block 2
        # (dim=2 is the height axis of an N x C x H x W tensor)
        out2 = torch.cat((out, out1), dim=2)

        # Block 3
        out = self.cnn3(out2)
        out = self.batchnorm3(out)
        out = self.relu(out)

        # Flatten and classify
        out = out.view(out.size(0), -1)
        out = self.fc1(out)
        out = self.relu(out)
        out = self.fc2(out)
        out = self.relu(out)
        out = self.fc3(out)
        out = self.relu(out)
        return out
```

When I run it, I get this error:

RuntimeError: Calculated padded input size per channel: (4 x 4). Kernel size: (5 x 5). Kernel size can't be greater than actual input size
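
The numbers in this message can be checked by hand with the standard convolution output-size formula. Below is a minimal sketch in plain Python (no PyTorch needed) that follows one spatial dimension through `cnn1`, `maxpool1`, `cnn2` and `maxpool2`. The helper names `conv_out`, `pool_out` and `trace` are hypothetical, and the input sizes 4 and 28 are assumptions chosen for illustration, since the actual input size is not given above.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of a Conv2d along one spatial dimension (dilation 1)."""
    padded = size + 2 * padding
    if padded < kernel:
        # Same condition that PyTorch reports in the RuntimeError above.
        raise ValueError(f"padded input {padded} < kernel {kernel}")
    return (padded - kernel) // stride + 1


def pool_out(size, kernel):
    """Output length of MaxPool2d(kernel_size=kernel): stride defaults to
    the kernel size and the result is rounded down."""
    return (size - kernel) // kernel + 1


def trace(size):
    """Follow one spatial dimension up to the two inputs of torch.cat."""
    out = pool_out(conv_out(size, kernel=3, padding=1), 2)   # cnn1 + maxpool1
    out1 = pool_out(conv_out(out, kernel=5, padding=1), 2)   # cnn2 + maxpool2
    return out, out1


# Hypothetical input sizes, chosen only for illustration.
for size in (4, 28):
    try:
        print(size, trace(size))
    except ValueError as err:
        print(size, "fails at cnn2:", err)
```

A padded size of 4 with `padding=1` means the convolution received a 2 x 2 feature map. Since `cnn1` uses a 3 x 3 kernel, the message most likely comes from `cnn2`, which would happen if the network input is only about 4 or 5 pixels per side. With a larger input such as the assumed 28 x 28, `out` would be 14 x 14 and `out1` would be 6 x 6, so the next thing to check is `torch.cat`: it requires all dimensions except the concatenation one to match, and `in_channels=18` in `cnn3` suggests the intended axis is the channel axis (`dim=1`), which needs both tensors to have the same height and width.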