Dear all,

I have a question regarding the training and validation loss.

Which one is normally checked in practice?

- loss per epoch?

- loss per batch?

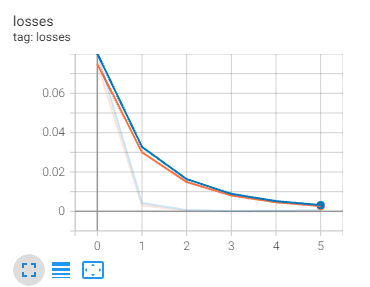

Blue is the training loss per epoch.

Orange is the validation loss per epoch.

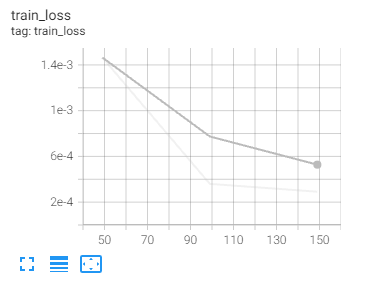

training loss per batch

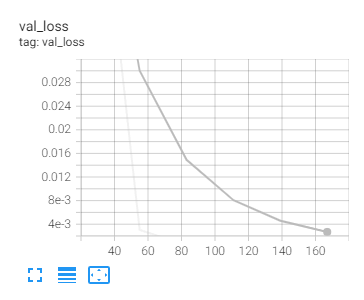

validation loss per epoch

If we look at the loss per epoch, the model appears to be a good fit.

But if we compare the loss per batch, it looks overfit, since the validation loss is higher than the training loss.
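To make the comparison concrete, here is a minimal sketch of how the two views relate, assuming the per-epoch value is the sample-weighted mean of the per-batch losses. The function name `epoch_loss` and all numbers are hypothetical and are not taken from the plots above.

```python
def epoch_loss(batch_losses, batch_sizes):
    """Sample-weighted mean of per-batch mean losses for one epoch."""
    total = sum(loss * n for loss, n in zip(batch_losses, batch_sizes))
    return total / sum(batch_sizes)


# Hypothetical per-batch mean losses for a single epoch (made-up values).
train_batch_losses = [0.9, 0.5, 0.4, 0.2]
train_batch_sizes = [32, 32, 32, 16]  # last batch is smaller

val_batch_losses = [0.5, 0.45]
val_batch_sizes = [32, 32]

train_epoch = epoch_loss(train_batch_losses, train_batch_sizes)
val_epoch = epoch_loss(val_batch_losses, val_batch_sizes)

print(f"train loss per epoch: {train_epoch:.4f}")
print(f"val loss per epoch:   {val_epoch:.4f}")
print(f"lowest train batch:   {min(train_batch_losses):.4f}")
```

With these made-up numbers, single training batches land on both sides of the validation loss, so a per-batch curve and a per-epoch curve can give different impressions of the same run.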

Could anyone please explain which one we should monitor in normal practice: loss per epoch or loss per batch?

Thanks