Dear all,

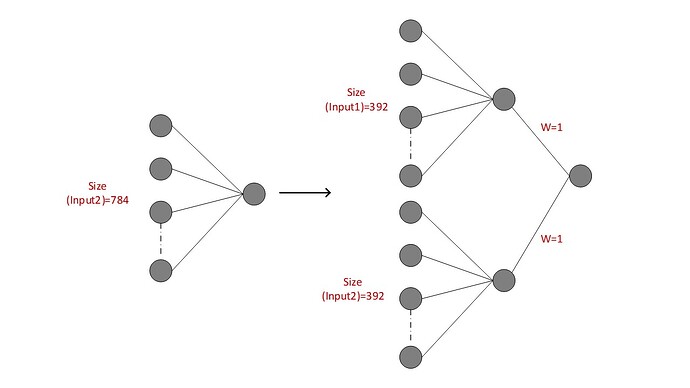

I want to restructure the connections (synapses) in the first fully connected layer, as shown in the figure. Could you help me?

If I understand your use case correctly, you would like to transform the left layer to the right one.

In that case I assume the left layer is defined as nn.Linear(784, 1, bias=False) and you could replace it with:

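    import torch
    import torch.nn as nn


    class MyModel(nn.Module):
        def __init__(self):
            super(MyModel, self).__init__()
            # Each half of the 784 inputs gets its own single-output layer
            self.fc1a = nn.Linear(392, 1, bias=False)
            self.fc1b = nn.Linear(392, 1, bias=False)
            # Combines the two intermediate outputs into one value
            self.fc2 = nn.Linear(2, 1, bias=False)

        def forward(self, xa, xb):
            xa = self.fc1a(xa)  # [batch_size, 1]
            xb = self.fc1b(xb)  # [batch_size, 1]
            x = torch.cat((xa, xb), dim=1)  # [batch_size, 2]
            x = self.fc2(x)  # [batch_size, 1]
            return x


    model = MyModel()
    batch_size = 2
    xa = torch.randn(batch_size, 392)
    xb = torch.randn(batch_size, 392)
    out = model(xa, xb)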
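If your data arrives as a single flattened tensor with 784 features, the two inputs could be obtained by slicing it. This is only a minimal sketch: it assumes the first 392 features belong to `fc1a` and the remaining 392 to `fc1b` (the figure doesn't say how the inputs are divided), and the helper name `split_halves` is made up for illustration.

```python
import torch


def split_halves(x):
    # x: [batch_size, 784] -> two tensors of shape [batch_size, 392]
    # Assumption: first half feeds fc1a, second half feeds fc1b
    xa, xb = torch.split(x, 392, dim=1)
    return xa, xb


x = torch.randn(2, 784)
xa, xb = split_halves(x)
print(xa.shape, xb.shape)
```

The two returned tensors can then be passed to `model(xa, xb)` as before. If the split in your figure is different (e.g. interleaved inputs), you would need to index the columns accordingly.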

Note that I haven’t added any activation functions etc., as it’s unclear from the figure whether this is desired.
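One thing to keep in mind: without a non-linearity between the layers, the two-stage structure computes the same function as a single linear layer over all 784 inputs, since the weights of `fc2` can be folded into the first-layer weights. The sketch below checks this numerically, using standalone layers with the same shapes as in the model above; the names `w_merged` and `out_merged` are just for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Same layer shapes as in the model above
fc1a = nn.Linear(392, 1, bias=False)
fc1b = nn.Linear(392, 1, bias=False)
fc2 = nn.Linear(2, 1, bias=False)

xa = torch.randn(2, 392)
xb = torch.randn(2, 392)

# Two-stage forward pass without any activation function
out = fc2(torch.cat((fc1a(xa), fc1b(xb)), dim=1))

# Fold the two fc2 weights into the first-layer weights
w2 = fc2.weight  # shape [1, 2]
w_merged = torch.cat(
    (w2[0, 0] * fc1a.weight, w2[0, 1] * fc1b.weight), dim=1
)  # shape [1, 784]

# A single matrix multiply over the concatenated input
out_merged = torch.cat((xa, xb), dim=1) @ w_merged.t()

# Compare the two results up to floating point tolerance
print(torch.allclose(out, out_merged, atol=1e-5))
```

So if the split is meant to change what the model can represent, you would probably want an activation (e.g. `torch.relu`) after `fc1a` and `fc1b`.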
