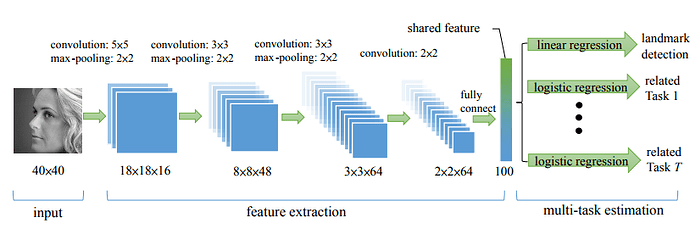

Hello, I am trying to implement a small CNN for facial landmark detection, based on this tutorial: Tutorial. I am following this paper: Paper, and the architecture looks like this:

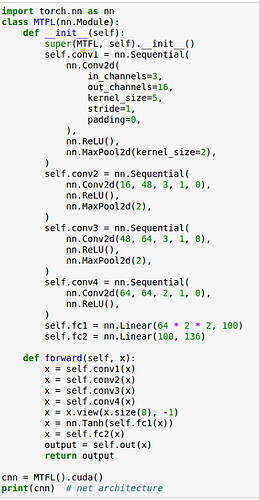

Here is my network code.

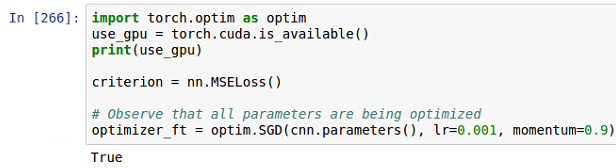

Here is my training code.

    import math

    img_size = 40
    big_loss = math.sqrt(pow(img_size, 2) + pow(img_size, 2))

    def train_model(model, criterion, optimizer, lr_scheduler, num_epochs=25):
        since = time.time()

        best_model = model
        best_acc = 0.0

        for epoch in range(num_epochs):
            print('Epoch {}/{}'.format(epoch, num_epochs - 1))
            print('-' * 10)

            # Each epoch has a training and validation phase
            for phase in ['train', 'val']:
                if phase == 'train':
                    optimizer = lr_scheduler(optimizer, epoch)
                    model.train(True)  # Set model to training mode
                else:
                    model.train(False)  # Set model to evaluate mode

                running_loss = 0.0
                running_corrects = 0

                # Iterate over data.
                for data in dset_loaders[phase]:
                    # get the inputs
                    #inputs, labels = data
                    for i_batch, sample_batched in enumerate(dset_loaders['train']):
                        inputs = sample_batched['image']
                        labels = sample_batched['landmarks']
                        print(inputs.size())
                        print(labels.size())
                        #print(labels)
                        #inputs, labels = Variable(inputs.cuda()), Variable(labels.cuda())
                        # wrap them in Variable
                        if use_gpu:
                            inputs, labels = Variable(inputs.cuda()), Variable(labels.cuda())
                        else:
                            inputs, labels = Variable(inputs), Variable(labels)

                        # zero the parameter gradients
                        optimizer.zero_grad()

                        # forward
                        outputs = model(inputs)
                        #_, preds = torch.max(outputs.data, 136)
                        loss = criterion(outputs, labels)

                        # backward + optimize only if in training phase
                        if phase == 'train':
                            loss.backward()
                            optimizer.step()

                        # statistics
                        running_loss += loss.data[0]
                        running_corrects += torch.sum(big_loss-loss.data[0])

                epoch_loss = running_loss / dset_sizes[phase]
                epoch_acc = running_corrects / dset_sizes[phase]

                print('{} Loss: {:.4f} Acc: {:.4f}'.format(
                    phase, epoch_loss, epoch_acc))

                # deep copy the model
                if phase == 'val' and epoch_acc > best_acc:
                    best_acc = epoch_acc
                    best_model = copy.deepcopy(model)

            print()

        time_elapsed = time.time() - since
        print('Training complete in {:.0f}m {:.0f}s'.format(
            time_elapsed // 60, time_elapsed % 60))
        print('Best val Acc: {:4f}'.format(best_acc))
        return best_model
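A note on the `Acc` value in the training code: it is not a classification accuracy. `big_loss` is the diagonal of an `img_size` x `img_size` image, and each batch adds `big_loss - loss` to `running_corrects`. Below is a minimal standalone sketch of that arithmetic only. `proxy_score` is a hypothetical helper name used for illustration; it does not appear in the training code.

```python
import math

img_size = 40
# Diagonal of an img_size x img_size image, used as the "worst case" distance
big_loss = math.sqrt(img_size ** 2 + img_size ** 2)


def proxy_score(batch_loss):
    """Hypothetical helper: the per-batch value added to running_corrects."""
    return big_loss - batch_loss


print(round(big_loss, 4))
print(proxy_score(0.0) == big_loss)
```

A lower loss gives a higher score, and a loss of zero gives a score equal to `big_loss`.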

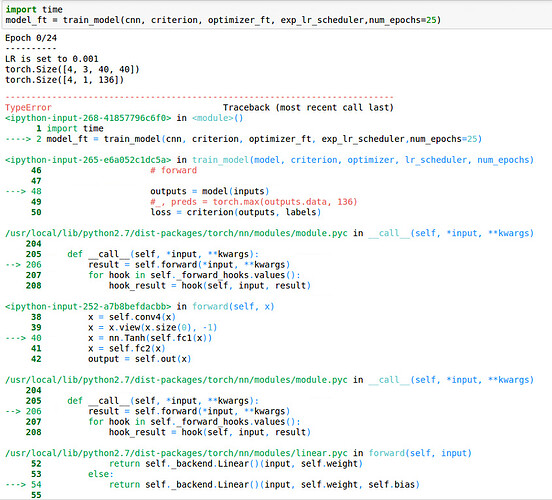

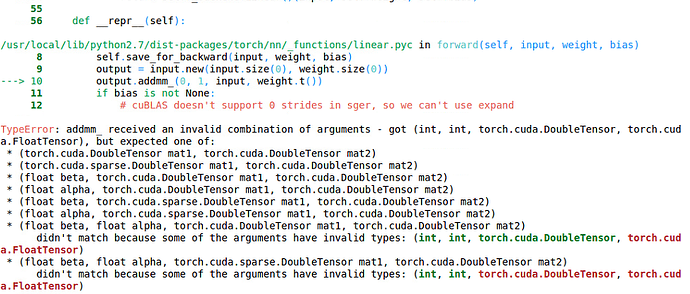

However, I get this error on the input.

I searched the latest posts and made sure everything is on CUDA, but the error still occurs.

Discussion

Can anyone help me with this error?

-Thank you-