I’m a high schooler who’s (very!) new to machine learning and PyTorch. I’ve been trying to build a DCGAN, following the official PyTorch DCGAN tutorial, to generate samples resembling the melanoma lesions in the SIIM-ISIC Melanoma Classification dataset. However, I keep getting this error when I compute my discriminator loss on real data:

```
Using a target size (torch.Size([64])) that is different to the input size (torch.Size([14400])) is deprecated. Please ensure they have the same size.
```

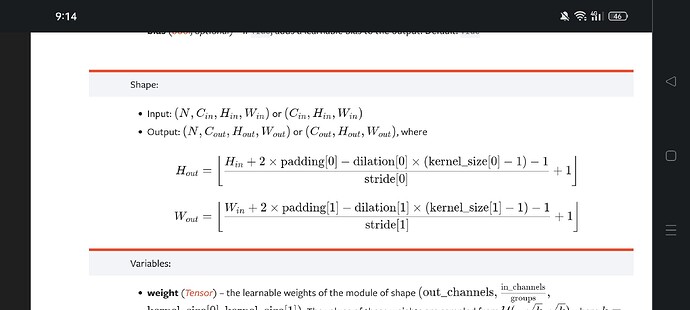

It turns out my `output` has shape 14400, but I’m not sure how it got there. My guess is that the problem is either in the way I load data or in the layers of my discriminator. I’ve looked at some related posts here, but I’m not sure they match my situation. I lean toward the data loading being the culprit, since my generator, discriminator, and training code all follow the PyTorch DCGAN tutorial mentioned above. Could anyone point me in the right direction? Anything would help! Also, if I should amend or add anything to this post, please let me know, and apologies in advance.

I’m using a batch size of 64 and `BCELoss` as my loss function. I’m not sure whether this helps, but here is my `train_transform`:

```python
train_transform = transforms.Compose([
    transforms.Grayscale(num_output_channels=3),
    transforms.Resize((299, 299)),
    transforms.RandomHorizontalFlip(),
    transforms.RandomVerticalFlip(),
    transforms.ToTensor(),
    transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
])
```

Here’s a row from the CSV file I use (each ISIC number corresponds to a JPG image with the same name). The columns are `image_name`, `patient_id`, `sex`, `age_approx`, `anatom_site_general_challenge`, `diagnosis`, `benign_malignant`, and `target`:

```
ISIC_0149568 IP_0962375 female 55.0 torso melanoma malignant 1
```
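To double-check which columns the dataset code’s `iloc[index, 0]` and `iloc[index, 7]` pick out of a row like this, here is a small pandas sketch. It assumes `malignant.csv` is comma-separated with a header row (the `pd.read_csv` default); the in-memory string is a hypothetical one-row copy of that file:

```python
import io
import os

import pandas as pd

# Assumption: malignant.csv is comma-separated with a header row.
csv_text = (
    "image_name,patient_id,sex,age_approx,anatom_site_general_challenge,"
    "diagnosis,benign_malignant,target\n"
    "ISIC_0149568,IP_0962375,female,55.0,torso,melanoma,malignant,1\n"
)
annotations = pd.read_csv(io.StringIO(csv_text))

# Column 0 is image_name and column 7 is target, the two columns __getitem__ reads.
image_name = annotations.iloc[0, 0]
target = int(annotations.iloc[0, 7])
img_path = os.path.join("/content/train_and_test_folder/train", image_name + ".jpg")

print(img_path)  # on Linux/Colab: /content/train_and_test_folder/train/ISIC_0149568.jpg
print(target)    # 1
```

The label from column 7 is returned by the dataset but never used by the GAN training loop, which only takes `data[0]`.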

I’m also using this to create, initialize, and load my dataset:

```python
class PreGANDataset(torch.utils.data.Dataset):
    def __init__(self, csv_file_path, root_path, transform=None):
        self.annotations = pd.read_csv(csv_file_path)
        self.root_path = root_path
        self.transform = transform

    def __len__(self):
        return len(self.annotations)

    def __getitem__(self, index):
        img_path = os.path.join(self.root_path, self.annotations.iloc[index, 0] + ".jpg")
        img = Image.open(img_path)
        y_label = torch.tensor(int(self.annotations.iloc[index, 7]))
        if self.transform:
            img = self.transform(img)
        return img, y_label

train_dataset = PreGANDataset(csv_file_path="/content/malignant.csv",
                              root_path="/content/train_and_test_folder/train",
                              transform=train_transform)

train_dataloader = DataLoader(train_dataset, batch_size=BATCH_SIZE, shuffle=True)
```
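To see the batch shapes a `DataLoader` like this produces without touching the real images, here is a sketch with a hypothetical `DummyLesionDataset` that returns random 3×299×299 tensors (the size `train_transform` outputs) and a label of 1. The length of 70 is arbitrary:

```python
import torch
from torch.utils.data import DataLoader, Dataset


class DummyLesionDataset(Dataset):
    """Hypothetical stand-in for PreGANDataset: random 3x299x299 images, label 1."""

    def __init__(self, length):
        self.length = length

    def __len__(self):
        return self.length

    def __getitem__(self, index):
        return torch.rand(3, 299, 299), torch.tensor(1)


loader = DataLoader(DummyLesionDataset(70), batch_size=64, shuffle=True)
batch_shapes = [tuple(images.shape) for images, labels in loader]
print(batch_shapes)  # [(64, 3, 299, 299), (6, 3, 299, 299)]
```

The last batch can be smaller than 64, which is why the training loop builds its labels from `bs = rg.size(0)` rather than a hard-coded 64.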

Here is my discriminator and train function code:

```python
class Discriminator(nn.Module):
    def __init__(self, NUM_GPU):
        super(Discriminator, self).__init__()
        self.NUM_GPU = NUM_GPU
        self.main = nn.Sequential(
            nn.Conv2d(3, 64, 4, 2, 1, bias=False),
            nn.LeakyReLU(0.2, inplace=True),
            nn.Conv2d(64, 64 * 2, 4, 2, 1, bias=False),
            nn.BatchNorm2d(64 * 2),
            nn.LeakyReLU(0.2, inplace=True),
            nn.Conv2d(64 * 2, 64 * 4, 4, 2, 1, bias=False),
            nn.BatchNorm2d(64 * 4),
            nn.LeakyReLU(0.2, inplace=True),
            nn.Conv2d(64 * 4, 64 * 8, 4, 2, 1, bias=False),
            nn.BatchNorm2d(64 * 8),
            nn.LeakyReLU(0.2, inplace=True),
            nn.Conv2d(64 * 8, 1, 4, 1, 0, bias=False),
            nn.Sigmoid()
        )

    def forward(self, input):
        return self.main(input)

DISCRIM = Discriminator(NUM_GPU).to(DEVICE)

if (DEVICE.type == 'cuda') and (NUM_GPU > 1):
    DISCRIM = nn.DataParallel(DISCRIM, list(range(NUM_GPU)))

DISCRIM.apply(weights_initialization)
print(DISCRIM)

FIXED_NOISE = torch.randn(64, Z_SIZE, 1, 1, device=DEVICE)

GEN_OPTIMIZER = torch.optim.Adam(
    MODEL.parameters(), lr=LEARNING_RATE, betas=(BETA1, 0.999))

DIS_OPTIMIZER = torch.optim.Adam(
    DISCRIM.parameters(), lr=LEARNING_RATE, betas=(BETA1, 0.999))
```
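One way to see how an input size flows through these layers is to apply the standard `Conv2d` output-size formula, out = floor((in + 2·padding − kernel) / stride) + 1 (dilation 1), layer by layer. Here is a minimal pure-Python sketch, assuming square inputs and the five `Conv2d` layers listed above (BatchNorm, LeakyReLU, and Sigmoid don’t change the spatial size). It compares the 299×299 input from `train_transform` with the 64×64 images the PyTorch DCGAN tutorial uses:

```python
def conv_out(size, kernel, stride, padding):
    """Spatial output size of nn.Conv2d with dilation=1 (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1


# (kernel, stride, padding) for the five Conv2d layers in the Discriminator above.
DISCRIM_CONVS = [(4, 2, 1), (4, 2, 1), (4, 2, 1), (4, 2, 1), (4, 1, 0)]


def trace(size):
    """Return the spatial size before the first conv and after each conv."""
    sizes = [size]
    for kernel, stride, padding in DISCRIM_CONVS:
        size = conv_out(size, kernel, stride, padding)
        sizes.append(size)
    return sizes


batch_size = 64
for input_size in (299, 64):
    sizes = trace(input_size)
    final = sizes[-1]
    # The last conv has 1 output channel, so .view(-1) yields batch * final * final values.
    flat = batch_size * 1 * final * final
    print(input_size, sizes, flat)
# 299 [299, 149, 74, 37, 18, 15] 14400
# 64 [64, 32, 16, 8, 4, 1] 64
```

With a 299×299 input, the final layer produces a 15×15 map per image instead of a single value, and 64 × 15 × 15 = 14400.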

```python
def train():
    img_list = []
    G_losses = []
    D_losses = []
    iters = 0

    for epoch in range(NUM_EPOCHS):
        for i, data in enumerate(train_dataloader, 0):
            DISCRIM.zero_grad()
            rg = data[0].to(DEVICE)
            bs = rg.size(0)
            label = torch.full((bs,), REAL_LABEL,
                               dtype=torch.float, device=DEVICE)  # REAL_LABEL is set to 1
            output = DISCRIM(rg).view(-1)
            # This is where the error is raised. My guess is that I'm using the wrong shape
            # for output, but I'm not sure how (the size in the error message is 14400).
            dis_cost_real = LOSS_FUNCTION(output, label)
            dis_cost_real.backward()
            dx = output.mean().item()

            noise = torch.randn(bs, Z_SIZE, 1, 1, device=DEVICE)
            fake = MODEL(noise)
            label.fill_(FAKE_LABEL)  # FAKE_LABEL is set to 0
            output = DISCRIM(fake.detach()).view(-1)
            dis_cost_fake = LOSS_FUNCTION(output, label)
            dis_cost_fake.backward()
            dgz = output.mean().item()
            dis_cost = dis_cost_fake + dis_cost_real
            DIS_OPTIMIZER.step()

            MODEL.zero_grad()
            label.fill_(REAL_LABEL)
            output = DISCRIM(fake).view(-1)
            gen_cost = LOSS_FUNCTION(output, label)
            gen_cost.backward()
            dgz2 = output.mean().item()
            GEN_OPTIMIZER.step()

            if i % 50 == 0:
                print('[%d/%d][%d/%d]\tLoss_D: %.4f\tLoss_G: %.4f\tD(x): %.4f\tD(G(z)): %.4f / %.4f'
                      % (epoch, NUM_EPOCHS, i, len(train_dataloader),
                         dis_cost.item(), gen_cost.item(), dx, dgz, dgz2))

            G_losses.append(gen_cost.item())
            D_losses.append(dis_cost.item())

            if (iters % 500 == 0) or ((epoch == NUM_EPOCHS-1) and (i == len(train_dataloader)-1)):
                with torch.no_grad():
                    fake = MODEL(FIXED_NOISE).detach().to(DEVICE)
                img_list.append(torchvision.utils.make_grid(
                    fake, padding=2, normalize=True))

            iters += 1

train()
```
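`nn.BCELoss` compares `output` and `label` element by element, so both need the same shape. Here is a minimal sketch, with random tensors standing in for the discriminator output (not the real model), of what `.view(-1)` produces for a (64, 1, 1, 1) output versus a (64, 1, 15, 15) output:

```python
import torch
import torch.nn as nn

loss_fn = nn.BCELoss()
batch_size = 64

# Stand-in for a discriminator output of shape (batch, 1, 1, 1): one probability per image.
probs = torch.rand(batch_size, 1, 1, 1).clamp(0.01, 0.99)
output = probs.view(-1)                       # shape (64,)
label = torch.full((batch_size,), 1.0)        # REAL_LABEL = 1, as in the training loop

assert output.shape == label.shape            # shapes match, so BCELoss is well-defined
loss = loss_fn(output, label)
print(loss.shape)  # torch.Size([]) (a scalar, since reduction defaults to 'mean')

# Stand-in for a (batch, 1, 15, 15) output: flattening it gives 15 * 15 values per image.
big = torch.rand(batch_size, 1, 15, 15).view(-1)
print(big.shape)   # torch.Size([14400]), which no longer matches the (64,) labels
```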