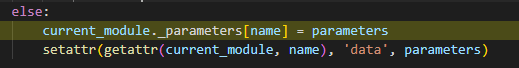

I am trying to assign parameters to the model, and there are two options. Which line should I use, the first or the second, and what is the difference between them? Also, if I use the first line, my DDP training gets stuck at the second epoch. Is there a solution if I have to use the first line?

Assigning a new parameter to the model (line 1) during DDP training will cause a hang. The original parameter has already been registered in the model's parameters(), and replacing it removes the reference to that parameter and to its gradients.

Line 2 assigns to data, an internal field of the tensor, so it only changes the stored values. It does not replace the entry in the model's parameters() and will not cause a hang.
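
Since the code in the screenshot is not reproduced here, the following is only a rough sketch of the difference. It assumes line 1 rebinds the attribute to a new nn.Parameter and line 2 assigns to .data; the nn.Linear model and the names old_weight and new_values are made up for illustration.

```python
import torch
import torch.nn as nn

# Hypothetical stand-in for the real model.
model = nn.Linear(2, 2)

# Keep a handle on the Parameter object, the way an optimizer or a
# wrapper built earlier would.
old_weight = model.weight
new_values = torch.zeros(2, 2)

# Assumed "line 2" style: overwrite the values through .data.
# The Parameter object registered on the model stays the same.
model.weight.data = new_values
same_after_data = model.weight is old_weight

# Assumed "line 1" style: rebind the attribute to a brand-new Parameter.
# The model now holds a different object than the one captured above.
model.weight = nn.Parameter(torch.ones(2, 2))
same_after_rebind = model.weight is old_weight

print(same_after_data, same_after_rebind)
```

Anything that grabbed the parameter before the rebind (old_weight here) keeps pointing at the old object, which is the situation described above.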

Is there any reason you are updating the parameters manually like this during training? It is not very conventional. If you need to load a previous state, you should look into torch.load/torch.save (torch.load — PyTorch 1.11.0 documentation)
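
As a minimal sketch of the torch.save/torch.load route, assuming you only need the weights: the nn.Linear models are placeholders, and the in-memory buffer stands in for a checkpoint file on disk. With default arguments, load_state_dict copies the values into the existing parameters rather than replacing them.

```python
import io

import torch
import torch.nn as nn

# Hypothetical stand-in for the trained model.
model = nn.Linear(2, 2)

# Save only the state_dict; a BytesIO buffer stands in for a file path.
buffer = io.BytesIO()
torch.save(model.state_dict(), buffer)
buffer.seek(0)

# A fresh model that should receive the saved weights.
restored = nn.Linear(2, 2)
captured = restored.weight  # handle taken before loading

state = torch.load(buffer)
restored.load_state_dict(state)

# Values now match the saved model, and the Parameter object is unchanged.
values_match = torch.equal(restored.weight, model.weight)
same_object = restored.weight is captured
print(values_match, same_object)
```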