What I don’t understand is how to get the same result as np.random.multinomial(20, [1/6.]*6, size=1) using torch.multinomial.

If I try

>>> weights = torch.tensor([1/6.]*6, dtype=torch.float)

>>> torch.multinomial(weights, 6)

tensor([4, 2, 1, 0, 5, 3])

This has a different interpretation from NumPy’s result (see my dice example): PyTorch returns what look like shuffled indices (?), which doesn’t make much sense to me.

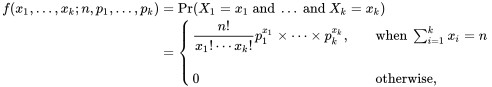
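For comparison, here is a minimal sketch of one way to get NumPy-style counts out of PyTorch. It assumes the goal is "how many times did each face come up in 20 rolls". torch.multinomial returns sampled category indices rather than counts, and its default is replacement=False, so asking for 6 samples from 6 categories gives a permutation of 0..5. Sampling with replacement and counting the indices with torch.bincount should give the dice-style result. The names rolls, counts and counts_dist are only illustrative.

```python
import torch

torch.manual_seed(0)  # only to make this sketch reproducible

weights = torch.tensor([1 / 6.] * 6, dtype=torch.float)

# Draw 20 category indices with replacement: one index per simulated roll.
rolls = torch.multinomial(weights, 20, replacement=True)

# Count how often each face (index 0..5) was drawn; the counts sum to 20.
counts = torch.bincount(rolls, minlength=6)

# Alternative: torch.distributions returns the counts directly (as floats).
counts_dist = torch.distributions.Multinomial(total_count=20, probs=weights).sample()

print(rolls)
print(counts)
print(counts_dist)
```

Note that counts has shape (6,), whereas the NumPy call with size=1 returns shape (1, 6); counts.unsqueeze(0) would match that layout if needed.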

Also, I would like to understand how the weights w1, w2, … (e.g. weights [0, 10, 3, 0]) translate to the probabilities p1, p2, … in the multinomial distribution: