My goal is to train an anomalib model (CFlow) from the openvinotoolkit/anomalib repository (github.com). The problem is that it takes a very long time just to complete one epoch.

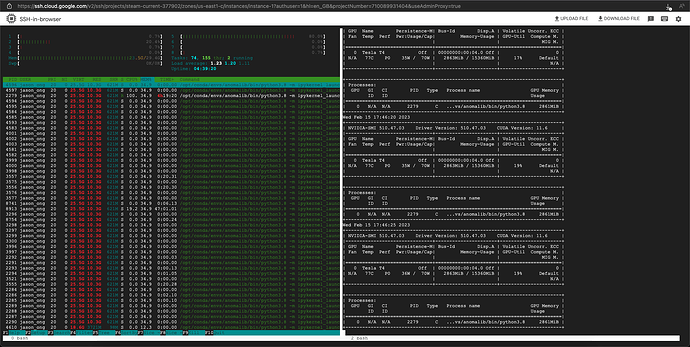

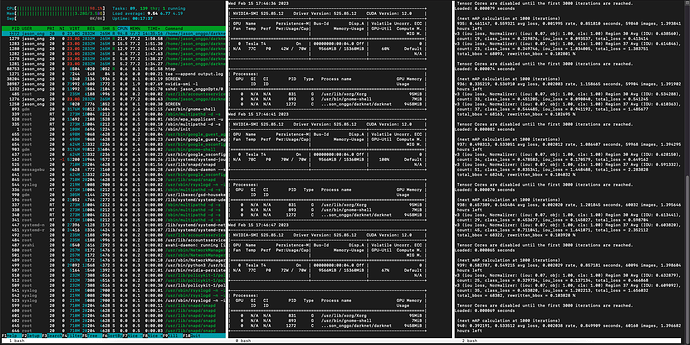

I checked htop and nvidia-smi -l.

The problem is that anomalib (PyTorch) underutilizes the GPU (2.8 GB out of 15.3 GB of GPU memory) while using most of the RAM (18.6 GB out of 29.4 GB).

My expectation was that it would use only a little RAM and max out the GPU.

So, I tried YOLOv4 as a comparison on the identical data set. YOLOv4 used far more of the GPU (9.5 GB out of 15.3 GB) and sat comfortably at 3.75 GB of RAM.
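To put the numbers side by side, here is a small sketch that converts the figures quoted above into usage percentages. The names `MEASUREMENTS`, `usage_percent`, and `summarize` are made up for illustration, and it assumes the YOLOv4 run was on the same machine, so the same 29.4 GB RAM total is used for it.

```python
# Figures quoted in the post, in GB, stored as (used, total).
# Assumption: YOLOv4 ran on the same machine, so its RAM total is 29.4 GB too.
MEASUREMENTS = {
    ("anomalib CFlow", "GPU memory"): (2.8, 15.3),
    ("anomalib CFlow", "RAM"): (18.6, 29.4),
    ("YOLOv4", "GPU memory"): (9.5, 15.3),
    ("YOLOv4", "RAM"): (3.75, 29.4),
}


def usage_percent(used_gb, total_gb):
    """Return used/total as a percentage, rounded to one decimal place."""
    if total_gb <= 0:
        raise ValueError("total_gb must be positive")
    return round(100.0 * used_gb / total_gb, 1)


def summarize(measurements):
    """Map each (model, resource) pair to its usage percentage."""
    return {key: usage_percent(used, total)
            for key, (used, total) in measurements.items()}


if __name__ == "__main__":
    for (model, resource), pct in summarize(MEASUREMENTS).items():
        print(f"{model:15s} {resource:10s} {pct:5.1f}%")
```

Note that these are memory figures only. The amount of GPU memory allocated does not by itself say how busy the GPU is, so the GPU-Util column of nvidia-smi is worth comparing between the two runs as well.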

The screenshot for anomalib PyTorch