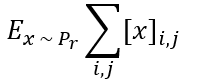

For an image, how can I calculate the function below in PyTorch?

Can you explain what each variable means? It just looks like summing over a matrix and multiplying by a value P_r.

x is a tensor (an image). I would like to calculate the sum over all pixels (i,j) and then calculate the average (mean) of the obtained sum.

So, x is of shape [N,N] or [C,N,N] where N is the width/height, and C is the number of channels?

If it’s just a matrix,

```python
import torch

x = torch.randn(100, 100)  # 100x100 image
answer = x.mean()          # add all values, then divide by their count (average)
```
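For a single 2-D tensor, `x.mean()` is exactly the sum of all entries divided by the number of entries. A quick sanity check, assuming a random 100x100 float tensor as a stand-in image:

```python
import torch

x = torch.randn(100, 100)  # stand-in 100x100 single-channel image

mean_direct = x.mean()             # built-in mean over every entry
mean_manual = x.sum() / x.numel()  # sum of all entries divided by their count (10000)

print(mean_direct.item(), mean_manual.item())
```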

x is an image, for example:

```python
import torch

x = torch.tensor([[[1, 2], [2, 3]],
                  [[4, 5], [6, 7]],
                  [[8, 9], [10, 11]]])  # shape [3, 2, 2]
```

So how do I get the sum over the pixels (i,j)? I tried torch.sum(x,(2,1)).mean(), but it doesn’t work in my code.
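On the 3x2x2 example tensor above, summing over the last two dimensions gives one pixel sum per channel. That tensor holds integers, and `torch.mean` only accepts floating-point (or complex) tensors, so a cast is needed before averaging. On this particular example that alone makes `torch.sum(x,(2,1)).mean()` fail, though it may not be the cause in the real code:

```python
import torch

x = torch.tensor([[[1, 2], [2, 3]],
                  [[4, 5], [6, 7]],
                  [[8, 9], [10, 11]]])  # shape [3, 2, 2], dtype torch.int64

per_channel_sum = x.sum(dim=(-2, -1))  # sum over i and j: values 8, 22, 38
same_thing = torch.sum(x, (2, 1))      # for a 3-D tensor, dims (2, 1) are the same two axes

avg_of_sums = per_channel_sum.float().mean()  # cast first: mean() rejects integer tensors
print(per_channel_sum, avg_of_sums)           # average is 68 / 3, about 22.6667
```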

The equation above sums over i and j, i.e. over all pixel indices, not a subset.

So try x.mean(dim=(-2,-1)), which averages over the last two dimensions of the Tensor.
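Negative dims count from the end, so `dim=(-2,-1)` selects the height and width axes regardless of how many leading (batch or channel) dims there are. Note that `mean` divides by the H*W pixel count while the equation sums, so the two differ only by that constant factor. Shown on a float copy of the example tensor:

```python
import torch

x = torch.tensor([[[1, 2], [2, 3]],
                  [[4, 5], [6, 7]],
                  [[8, 9], [10, 11]]], dtype=torch.float32)  # [C=3, H=2, W=2]

per_channel_mean = x.mean(dim=(-2, -1))  # average over i and j -> [2.0, 5.5, 9.5]
per_channel_sum = x.sum(dim=(-2, -1))    # sum over i and j     -> [8.0, 22.0, 38.0]

h, w = x.shape[-2:]
assert torch.allclose(per_channel_mean * (h * w), per_channel_sum)  # mean = sum / (H*W)
```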

x.mean(dim=(-2,-1)) doesn’t work!

Is the equation below correct, and does it represent the image equation?

```python
x = torch.sum(x)/len(x)
```
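`len(x)` on a tensor returns the size of its first dimension, so `torch.sum(x)/len(x)` sums every element (over channels and pixels) and divides by the batch size. For a `[B, 1, H, W]` tensor that matches summing over i and j and then averaging over the batch; with more than one channel it would also add the channels together. Assigning the result back to `x` also replaces the 4-D tensor with a 0-d one. A quick comparison, assuming a random stand-in batch of shape [8, 1, 128, 128]:

```python
import torch

x = torch.randn(8, 1, 128, 128)  # stand-in batch: [B, C, H, W]

a = torch.sum(x) / len(x)            # len(x) == x.shape[0] == 8 (the batch size)
b = x.sum(dim=(-2, -1)).mean(dim=0)  # per-image pixel sum, then batch average -> shape [1]

print(len(x), a.shape, b.shape)      # a is 0-d, b has shape [1]
```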

Can you share the error message? That should work for a Tensor with 2 or more dimensions.

Can you also share the shape of an example Tensor via .shape, rather than by printing it?

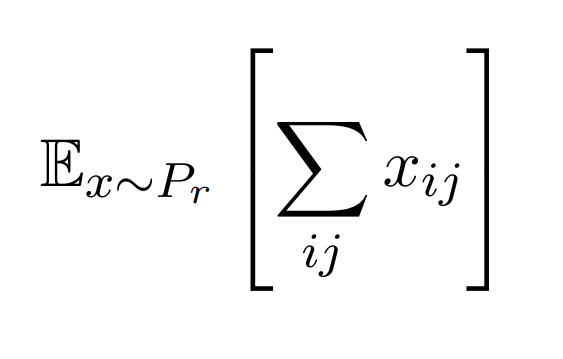

So, I’ve just read the equation again: is E_x meant to be a statistical expectation? Because it looks like it should be written like this:

Is this how the equation should be written?

The error message is:

```
IndexError: Dimension out of range (expected to be in range of [-1, 0], but got 2)
```

The shape of the image is: torch.Size([8, 1, 128, 128])
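The message says the tensor at that point accepts only dims -1 and 0, i.e. it has at most one dimension, so it cannot be the [8, 1, 128, 128] tensor whose shape is shown. One possible cause (an assumption, since the full code isn't shown) is that `x` was already reduced earlier, for example by `x = torch.sum(x)/len(x)`. The sketch below reproduces the error that way. It also shows that on a 4-D tensor, dims (2, 1) are the height and channel axes, not i and j:

```python
import torch

x = torch.randn(8, 1, 128, 128)  # 4-D batch: [B, C, H, W]
partial = torch.sum(x, (2, 1))   # dims 2 and 1 are H and C here, NOT i and j
print(partial.shape)             # torch.Size([8, 128])

reduced = torch.sum(x) / len(x)  # 0-d tensor after a full reduction
message = ""
try:
    torch.sum(reduced, (2, 1))   # dim 2 does not exist on a 0-d tensor
except IndexError as err:
    message = str(err)
print(message)                   # a "Dimension out of range" IndexError message
```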

The equation should be like that:

E_x is the average critic score on real images (GAN model).

I re-typed the equation in LaTeX to clarify its exact meaning: wrapping x in []'s makes no sense (to me at least), but it makes complete sense if the []'s wrap the entire sum, in which case the equation is the expectation of the sum of all pixels.
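Written out, that reading of the equation is shown below. The second expression is the usual batch estimate of the expectation; B (the batch size) and x^{(b)} (the b-th image in the batch) are notation introduced here for illustration, not taken from the paper:

$$
\mathbb{E}_x\Big[\sum_{i,j} [x]_{i,j}\Big] \approx \frac{1}{B}\sum_{b=1}^{B}\sum_{i,j} \big[x^{(b)}\big]_{i,j}
$$

In PyTorch terms, the inner sum over i and j is `x.sum(dim=(-2,-1))` and the outer average over b is a mean over dim 0.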

If you have a reference for the equation please do share.

The PyTorch code below computes the expectation of the sum of all pixels:

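```python
import torch

x = torch.randn(8, 1, 128, 128)  # fake data: [batch, channel, height, width]

sum_over_image = x.sum(dim=(-2, -1))  # summing over i and j -> shape [8, 1]

# Then take the expectation over all samples in the batch.
# The result has shape [1]: one value per channel, and there is only 1 channel.
expectation = torch.mean(sum_over_image, dim=0)  # e.g. tensor([13.3208]); the value varies because x is random
```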
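`torch.mean(sum_over_image, dim=0)` returns a tensor of shape [1] rather than a 0-d scalar. If the result is used as a loss term, calling `.mean()` with no `dim` averages over the batch and the single channel at once and yields a 0-d tensor, which `.backward()` accepts without a gradient argument. A minimal sketch, using random data as a stand-in for a per-pixel critic output:

```python
import torch

# Random stand-in for a per-pixel critic output of shape [B, C, H, W] = [8, 1, 128, 128].
d_out = torch.randn(8, 1, 128, 128, requires_grad=True)

per_image_sum = d_out.sum(dim=(-2, -1))  # shape [8, 1]: sum over i and j
expectation = per_image_sum.mean()       # 0-d tensor: average over batch (and the single channel)

expectation.backward()                   # no gradient argument needed: the output is a scalar
print(expectation.shape)                 # torch.Size([])
print(d_out.grad.unique())               # every pixel gets gradient 1/8 = 0.125
```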

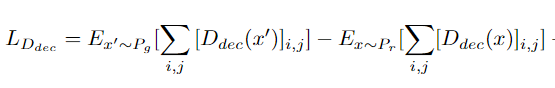

The loss function equation is taken from this paper: https://openaccess.thecvf.com/content_CVPR_2020/html/Schonfeld_A_U-Net_Based_Discriminator_for_Generative_Adversarial_Networks_CVPR_2020_paper.html

The function is in Section 3.1; it is the discriminator loss function, which in my case is represented as below:

Assuming that D(x') and D(x) have the same shape as the example above, what I wrote above should be correct. I’m not an expert on GANs, but it looks like it’s as simple as summing over the pixels and then taking the expectation over the data.
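To apply the same reduction to both D(x) and D(x'), a small helper can check the shape assumption up front instead of failing later with an IndexError. The helper name `expected_pixel_sum` and the random stand-in tensors are hypothetical, and how the two terms are combined (signs, any log) should follow the equation in the paper, which this sketch does not reproduce:

```python
import torch


def expected_pixel_sum(d_map: torch.Tensor) -> torch.Tensor:
    """Hypothetical helper: batch average of the sum over pixels (i, j).

    Expects a per-pixel map of shape [B, C, H, W]; returns a 0-d tensor.
    """
    if d_map.dim() != 4:
        # Fail early with the actual shape instead of an IndexError deeper in the code.
        raise ValueError(f"expected a [B, C, H, W] tensor, got shape {tuple(d_map.shape)}")
    return d_map.sum(dim=(-2, -1)).mean()


# Random stand-ins for the critic outputs on real images, D(x), and generated images, D(x').
d_real = torch.randn(8, 1, 128, 128)
d_fake = torch.randn(8, 1, 128, 128)

e_real = expected_pixel_sum(d_real)
e_fake = expected_pixel_sum(d_fake)
print(e_real.item(), e_fake.item())
```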
