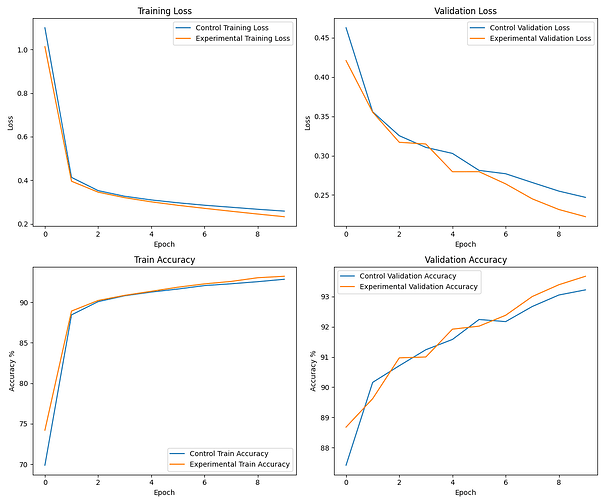

hi all, i'm an amateur getting up to speed with pytorch. while tinkering around i had a little idea: make the regularization strength adapt to the loss each epoch instead of keeping it fixed. take these results with a grain of salt.
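for anyone wondering what "adapting the regularization to the loss" could look like in code, here's a rough sketch. the exact update rule isn't given here, so the rule below is an assumption, and so are the names (`AdaptiveRegularizer`, `up`, `down`, `lam_min`, `lam_max`), which are hypothetical. the assumed rule: if this epoch's loss is worse than the previous epoch's, regularization gets stronger, otherwise it relaxes a bit, always clamped to a fixed range. it's plain python, so it runs without torch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdaptiveRegularizer:
    """Hypothetical per-epoch schedule for a regularization strength `lam`.

    Assumed rule (not necessarily the one behind the plot above): if the
    monitored loss got worse than last epoch, multiply lam by `up`;
    otherwise multiply it by `down`. lam is clamped to [lam_min, lam_max].
    """
    lam: float = 1e-4
    up: float = 1.5        # multiplier applied when the loss increased
    down: float = 0.9      # multiplier applied when the loss fell or stayed flat
    lam_min: float = 1e-6
    lam_max: float = 1e-1
    prev_loss: Optional[float] = None

    def step(self, epoch_loss: float) -> float:
        """Update lam from this epoch's loss and return the new value."""
        if self.prev_loss is not None:
            factor = self.up if epoch_loss > self.prev_loss else self.down
            # clamp so lam can't run away to 0 or explode
            self.lam = min(self.lam_max, max(self.lam_min, self.lam * factor))
        # the first call only records the loss; lam is left unchanged
        self.prev_loss = epoch_loss
        return self.lam


if __name__ == "__main__":
    reg = AdaptiveRegularizer(lam=1e-3)
    for loss in [1.0, 0.8, 0.85, 0.7]:  # made-up per-epoch losses
        print(f"loss={loss:.2f} -> lam={reg.step(loss):.2e}")
```

in pytorch, one way to apply the new value would be to write it into the optimizer after each epoch, e.g. `for g in optimizer.param_groups: g["weight_decay"] = lam`, which works for optimizers like `torch.optim.SGD` and `torch.optim.AdamW` that take a `weight_decay` argument. feeding validation loss (rather than training loss) into `step` is probably the more sensible choice, since training loss tends to keep going down regardless of overfitting.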

there are probably not enough data/runs to draw any conclusions. this was mainly a learning exercise for me, but who knows, maybe it's useful to someone else.

here is a paper that confirmed my suspicions and gave me some more empirical backing (though i admit my implementation is much simpler)…

any feedback would be appreciated, thanks