I have a basic transformer model:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class model(nn.Module):
    def __init__(self):
        super().__init__()
        self.seqLength = 5
        self.d_model = 3
        self.nhead = 1
        self.num_encoder_layers = 6
        self.num_decoder_layers = 6
        self.dim_feedforward = 1024
        self.dropout = 0.1
        self.activation = F.relu
        self.batch_first = True

        self.transformer = nn.Transformer(
            d_model=self.d_model,
            nhead=self.nhead,
            num_encoder_layers=self.num_encoder_layers,
            num_decoder_layers=self.num_decoder_layers,
            dim_feedforward=self.dim_feedforward,
            dropout=self.dropout,
            activation=self.activation,
            batch_first=self.batch_first,
        )

    def forward(self, encoderSeq, decoderSeq, decoderPaddingMask=None, decoderMask=None):
        y_hat = self.transformer(
            src=encoderSeq,
            tgt=decoderSeq,
            tgt_mask=decoderMask,                     # additive (seq, seq) mask for decoder self-attention
            tgt_key_padding_mask=decoderPaddingMask,  # (batch, seq) bool mask, True = ignore that position
        )
        return y_hat
```

I want to get my model to produce output with part of the decoder input sequence masked out.

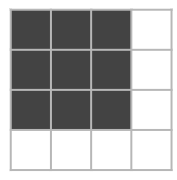

According to this site, if I want the last item in the decoder input sequence to be ignored in decoder self-attention, I need to generate a mask like this:

Where the black squares are 0 and white squares are -inf.

Code to generate the mask:

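```python
import torch


def generateDecoderMask(size, n):
    # Additive mask for decoder self-attention: 0 = attend, -inf = blocked
    output = torch.zeros((size, size))
    if not n:
        return output
    # Block the last n columns (keys) in every query row
    output[:, -n:] = -torch.inf
    # Block the remaining columns in the last n rows (queries), so those rows are entirely -inf
    output[-n:, :-n] = -torch.inf
    return output
```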
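Since `model.seqLength` is 5, it may help to inspect the 5×5 mask this function actually returns. The sketch below re-declares the function so it runs on its own, then counts, for each query row, how many keys remain unmasked:

```python
import torch


def generateDecoderMask(size, n):
    output = torch.zeros((size, size))
    if not n:
        return output
    output[:, -n:] = -torch.inf    # block the last n keys for every query
    output[-n:, :-n] = -torch.inf  # block everything else for the last n queries
    return output


mask = generateDecoderMask(5, 1)
print(mask)

# Number of keys each query row may still attend to (finite entries per row)
allowed_per_row = torch.isfinite(mask).sum(dim=-1)
print(allowed_per_row)  # tensor([4, 4, 4, 4, 0])
```

The last query row has no key left to attend to.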

Problematic code:

```python
model = model()
decoderMask = generateDecoderMask(model.seqLength, 1)

encSeq = torch.rand((1, model.seqLength, model.d_model))  # (batch, seq, d_model)
decSeq = torch.rand((1, model.seqLength, model.d_model))  # (batch, seq, d_model)

model(encSeq, decSeq, decoderMask=decoderMask)
```

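The decoder mask used here has this pattern (shown for a sequence length of 3 for brevity; with `seqLength = 5` the real mask is 5×5, with the last row and last column set to -inf):

```
[[   0,    0, -inf],
 [   0,    0, -inf],
 [-inf, -inf, -inf]]
```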

The output is all NaN.

The problem occurs with both trained and untrained models.
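One way to see where the NaNs could come from is to apply the mask in a hand-written single-head attention. This is a simplified sketch, not `nn.Transformer`'s actual code: the hypothetical helper `masked_self_attention` uses the input directly as queries, keys and values, with no learned projections, feed-forward or layer norm.

```python
import torch


def generateDecoderMask(size, n):
    output = torch.zeros((size, size))
    if not n:
        return output
    output[:, -n:] = -torch.inf
    output[-n:, :-n] = -torch.inf
    return output


def masked_self_attention(x, mask):
    # Hypothetical simplified attention: Q = K = V = x, additive mask before softmax
    scores = x @ x.T / x.shape[-1] ** 0.5 + mask
    weights = torch.softmax(scores, dim=-1)
    return weights @ x


torch.manual_seed(0)
mask = generateDecoderMask(5, 1)
x = torch.rand(5, 3)  # (seq, d_model)

out1 = masked_self_attention(x, mask)
print(torch.isnan(out1).any(dim=-1))  # only the last position is NaN

out2 = masked_self_attention(out1, mask)  # a second "layer"
print(torch.isnan(out2).any(dim=-1))  # now every position is NaN
```

In the first pass only the last row is NaN, because softmax over a row that is entirely -inf has no finite entry to normalize. In the second pass that NaN row is a key and value for every query; `nan + (-inf)` is still NaN and `0 * nan` is still NaN, so masking its column does not stop it from spreading.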

What I’ve tried

• Using a decoder mask that blocks only the last column (shown for a sequence length of 3):

```
[[0, 0, -inf],
 [0, 0, -inf],
 [0, 0, -inf]]
```

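• Using `tgt_key_padding_mask` instead:

```python
# Shape (batch, seq) = (1, 5) for batched input; True marks the padded position to ignore
decoderMask = torch.tensor([[0, 0, 0, 0, 1]]).bool()
model(encSeq, decSeq, decoderPaddingMask=decoderMask)
```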

Both alternatives produce the same output for the same input, and neither produces NaN. But the mask in the first bullet point shouldn't be correct, should it? The last row and column hold the attention scores involving the padding position, so what's the point of keeping the last row unmasked?
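A minimal sketch to check that equivalence in isolation, using a standalone `nn.MultiheadAttention` layer as a stand-in for one decoder self-attention block (an assumption: the real decoder wraps it with projections, feed-forward and layer norm, and this assumes a PyTorch version with `batch_first` support):

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
seq_len, d_model = 5, 3

# One self-attention layer standing in for a decoder self-attention block
mha = nn.MultiheadAttention(embed_dim=d_model, num_heads=1, batch_first=True)
mha.eval()  # make sure dropout is off

x = torch.rand(1, seq_len, d_model)  # (batch, seq, d_model)

# Option A: additive float mask that blocks only the last column (key)
col_mask = torch.zeros(seq_len, seq_len)
col_mask[:, -1] = -torch.inf

# Option B: boolean key padding mask, shape (batch, seq); True = ignore this key
pad_mask = torch.tensor([[False, False, False, False, True]])

out_a, w_a = mha(x, x, x, attn_mask=col_mask)
out_b, w_b = mha(x, x, x, key_padding_mask=pad_mask)

print(torch.allclose(out_a, out_b))  # both block exactly the same keys
print(w_a[0, :, -1])                 # attention paid to the padded key: all zeros
```

In both cases the padded position still produces an output row; it simply attends to the real tokens only, so no row of the softmax is entirely -inf.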

What am I doing wrong?