Since M1 GPU support is now available (Introducing Accelerated PyTorch Training on Mac | PyTorch), I ran some experiments with different models. While everything seems to work on simple examples (MNIST FF, CNN, …), I am running into problems with a more complex model, SwinIR (GitHub - JingyunLiang/SwinIR: SwinIR: Image Restoration Using Swin Transformer (official repository)). Running the code pulled from GitHub with device='mps' and the environment variable PYTORCH_ENABLE_MPS_FALLBACK=1, which falls back to the CPU for operations that MPS does not support yet, produces wrong results:

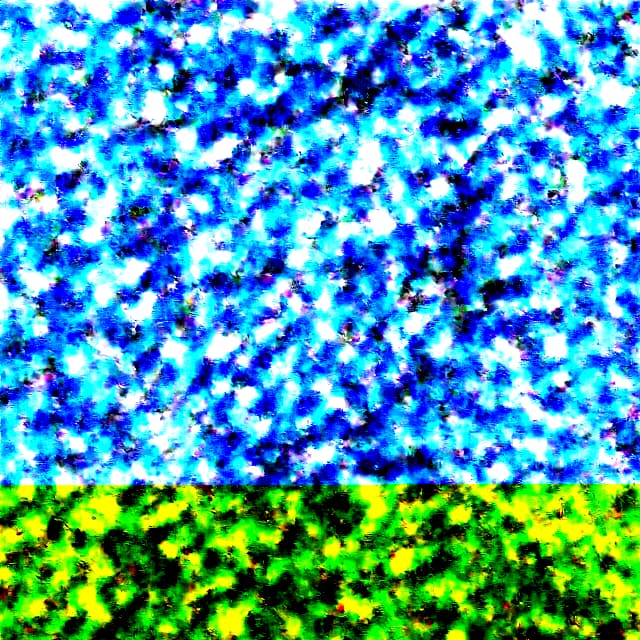

When upscaling an image of Lincoln (which works correctly on the CPU), the MPS run produces this instead:

Does anyone know what is going on, or what I could try to fix this?
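
For reference, here is a minimal sketch of the setup described above, together with a small helper for checking how far the MPS output drifts from the CPU output. The names `pick_device` and `max_abs_diff` are hypothetical and not part of SwinIR or PyTorch. It also assumes the fallback variable should be set before `torch` is imported, which is the safe choice:

```python
import copy
import os

# Assumption: set the fallback flag before torch is imported,
# so unsupported MPS ops are routed to the CPU.
os.environ.setdefault("PYTORCH_ENABLE_MPS_FALLBACK", "1")


def pick_device():
    """Return 'mps' when the MPS backend is available, otherwise 'cpu'."""
    import torch  # imported here so the environment variable above is already set

    return "mps" if torch.backends.mps.is_available() else "cpu"


def max_abs_diff(model, x, device):
    """Run the same model and input on the CPU and on `device`,
    and return the largest absolute difference between the two outputs."""
    import torch

    model = model.eval()
    with torch.no_grad():
        reference = model.cpu()(x.cpu())
        # Work on a copy so the CPU reference model is left untouched.
        candidate = copy.deepcopy(model).to(device)(x.to(device)).cpu()
    return (reference - candidate).abs().max().item()
```

Calling `max_abs_diff(module, sample_input, pick_device())` on individual submodules rather than on the whole network might help narrow down which layer first diverges between the two devices.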