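I get `AttributeError: 'str' object has no attribute 'parameters'` when I call the training function below. Any help, please?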

```python
import datetime
import time

import torch
from transformers import AdamW, get_linear_schedule_with_warmup  # assumed source: transformers' AdamW accepts correct_bias

# Bar (progress bar), device, save_model and prepare_data are defined elsewhere in the notebook


def training_model(model, model_type, train_dataloader, validation_dataloader, epochs):
    training_loss = []
    validation_loss = []
    validation_acc = []

    optimizer = AdamW(model.parameters(), lr=1e-3, correct_bias=True)
    total_steps = len(train_dataloader) * epochs
    warmup_steps = 0
    scheduler = get_linear_schedule_with_warmup(optimizer, num_warmup_steps=warmup_steps, num_training_steps=total_steps)

    start_time = time.time()

    for epoch in range(epochs):
        total_train_loss = 0
        model.train()
        print('Epoch: {}'.format(epoch + 1))

        for step, batch in enumerate(Bar(train_dataloader)):
            b_input_ids = batch[0].to(device)
            b_input_mask = batch[1].to(device)
            b_labels = batch[2].to(device)

            model.zero_grad()
            # passing labels makes the model compute and return the loss
            loss, logits = model(b_input_ids, token_type_ids=None, attention_mask=b_input_mask, labels=b_labels)
            total_train_loss += loss.item()
            loss.backward()
            torch.nn.utils.clip_grad_norm_(model.parameters(), 1.0)
            optimizer.step()
            scheduler.step()

        avg_train_loss = total_train_loss / len(train_dataloader)

        model.eval()
        save_model(model, epoch + 1, model_type)

        total_eval_accuracy = 0
        total_eval_loss = 0
        nb_eval_steps = 0
        print("Validation")

        for batch in validation_dataloader:
            b_input_ids = batch[0].to(device)
            b_input_mask = batch[1].to(device)
            b_labels = batch[2].to(device)

            with torch.no_grad():
                (loss, logits) = model(b_input_ids, token_type_ids=None, attention_mask=b_input_mask, labels=b_labels)

            total_eval_loss += loss.item()
            logits = logits.detach().cpu().numpy()
            label_ids = b_labels.to('cpu').numpy()

        avg_val_loss = total_eval_loss / len(validation_dataloader)
        print("Validation Loss :", avg_val_loss)

        training_loss.append(avg_train_loss)
        validation_loss.append(avg_val_loss)

    elapsed_time = time.time() - start_time
    print("Done")
    print("Total training took :", str(datetime.timedelta(seconds=int(round(elapsed_time)))))

    return model, training_loss, validation_loss
```
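For reference, the optimizer setup in this function uses `get_linear_schedule_with_warmup` with `warmup_steps = 0` and `total_steps = len(train_dataloader) * epochs`, and `scheduler.step()` runs once per batch, so the learning rate should fall linearly from `1e-3` to 0 over the whole run. Below is a minimal pure-Python sketch of the multiplier that schedule applies to the base learning rate, based on my reading of the transformers implementation; the function name `linear_warmup_multiplier` and the batch counts are hypothetical.

```python
def linear_warmup_multiplier(step, warmup_steps, total_steps):
    """Hypothetical re-implementation of the linear warmup/decay LR factor."""
    if step < warmup_steps:
        # warmup phase: factor rises linearly from 0 to 1
        return step / max(1, warmup_steps)
    # decay phase: factor falls linearly to 0 at total_steps, never below 0
    return max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


if __name__ == "__main__":
    # Hypothetical numbers: 100 batches per epoch, 5 epochs -> 500 scheduler steps
    steps_per_epoch = 100
    epochs = 5
    total_steps = steps_per_epoch * epochs
    base_lr = 1e-3
    for step in (0, 250, 499, 500):
        print(step, base_lr * linear_warmup_multiplier(step, 0, total_steps))
```

With `warmup_steps = 0` there is no warmup phase at all; the factor starts at 1.0 on the first batch.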

```python
train_dataloader, validation_dataloader = prepare_data(dataset_cbow, 0.99, 64)

model_embed, training_loss_embed, validation_loss_embed = training_model(model_embed, "embed", train_dataloader, validation_dataloader, epochs=5)
```

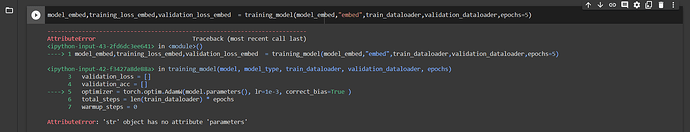

```text
AttributeError                            Traceback (most recent call last)
<ipython-input-43-2fd6dc3ee641> in <module>()
----> 1 model_embed,training_loss_embed,validation_loss_embed = training_model(model_embed,"embed",train_dataloader,validation_dataloader,epochs=5)

<ipython-input-42-f3427a8de88a> in training_model(model, model_type, train_dataloader, validation_dataloader, epochs)
      3 validation_loss = []
      4 validation_acc = []
----> 5 optimizer = torch.optim.AdamW(model.parameters(), lr=1e-3, correct_bias=True )
      6 total_steps = len(train_dataloader) * epochs
      7 warmup_steps = 0

AttributeError: 'str' object has no attribute 'parameters'
```
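The traceback says that inside `training_model` the `model` argument is a `str`, so `model_embed` seems to hold a string rather than a model when cell 43 runs; one likely cause is an earlier cell rebinding that name. The sketch below reproduces the same message in plain Python using a hypothetical `TinyModel` stand-in (it only has a `.parameters()` method) and adds a hypothetical `ensure_model` check that fails early with the actual type and value. In the notebook, `print(type(model_embed))` just before the call gives the same information.

```python
class TinyModel:
    """Hypothetical stand-in for a torch.nn.Module: it only needs .parameters()."""

    def parameters(self):
        return iter([0.5, -0.25])


def collect_params(model):
    # Mirrors the failing line: the first use of `model` is model.parameters()
    return list(model.parameters())


def ensure_model(model):
    # Hypothetical guard: raise a clearer error naming the actual type and value
    if not hasattr(model, "parameters"):
        raise TypeError(
            f"expected a model with .parameters(), got {type(model).__name__}: {model!r}"
        )
    return model


if __name__ == "__main__":
    print(collect_params(TinyModel()))
    try:
        collect_params("embed")  # a string where the model should be
    except AttributeError as err:
        print("AttributeError:", err)
    try:
        ensure_model("embed")
    except TypeError as err:
        print("TypeError:", err)
```

Because `model.parameters()` is evaluated before `AdamW` is called, this `AttributeError` hides a second problem on the same line: `torch.optim.AdamW` has no `correct_bias` argument, so once a real model is passed, that call would fail with a `TypeError` unless the optimizer comes from a library version that accepts `correct_bias`.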