Dear all,

I am trying to solve a mathematical problem with a neural network.

Let u be a function whose second derivative is called f.

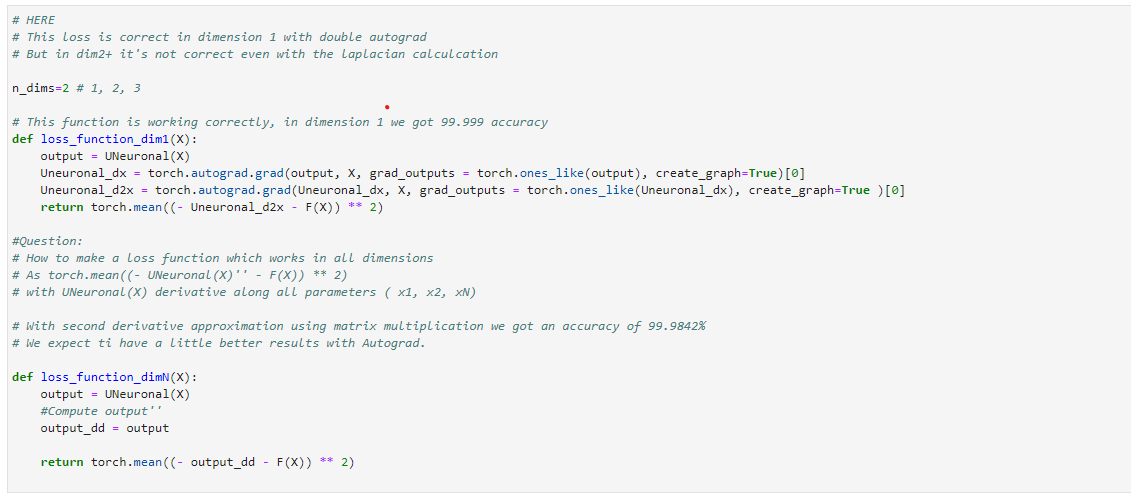

The loss function compares f with the second derivative of the neural-network function u_nn (which aims to imitate u).
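To make the setup concrete, here is a minimal sketch of such a loss in dimension 1. It is not the actual program: it assumes PyTorch, and the small network `u_nn`, the helper `second_derivative`, the right-hand side `f = -sin(x)` and the sample points are all hypothetical choices made for illustration.

```python
import torch

torch.manual_seed(0)

# Hypothetical small network standing in for u_nn (not the actual program).
u_nn = torch.nn.Sequential(
    torch.nn.Linear(1, 16), torch.nn.Tanh(), torch.nn.Linear(16, 1)
)

def second_derivative(model, x):
    """Return d^2 model / dx^2 at each sample point; x has shape (N, 1)."""
    x = x.clone().requires_grad_(True)
    u = model(x)
    # create_graph=True keeps the graph so the result can be differentiated again.
    du = torch.autograd.grad(u.sum(), x, create_graph=True)[0]
    d2u = torch.autograd.grad(du.sum(), x, create_graph=True)[0]
    return d2u

x = torch.linspace(0.0, 1.0, 32).unsqueeze(1)  # illustrative sample points
f = -torch.sin(x)                              # illustrative right-hand side

# Mean squared difference between the network's second derivative and f.
loss = torch.mean((second_derivative(u_nn, x) - f) ** 2)
loss.backward()
```

Summing `u` before calling `torch.autograd.grad` is valid here because each output value depends only on its own input sample, so the gradient of the sum gives the per-sample derivative.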

The goal at the end of training is for u_nn to be very close to u (we compute a percentage of agreement between the two curves to assess the result).

The program works fine in dimension 1 (using the autograd function), but it does not work in dimensions 2 and 3, probably because I am misusing autograd.
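For dimensions 2 and 3, one possible reading is that the "second derivative" is the Laplacian, i.e. the sum of the unmixed second partial derivatives. That is an assumption, since the formula is only given in the image. Under that assumption, and again assuming PyTorch, a hedged sketch could look like the following (the helper name `laplacian` is hypothetical):

```python
import torch

def laplacian(model, x):
    """Sum of unmixed second partial derivatives of model at each row of x.

    x has shape (N, d); model(x) is expected to return shape (N,) or (N, 1).
    Assumes the target operator is the Laplacian (not confirmed by the post).
    """
    x = x.clone().requires_grad_(True)
    u = model(x)
    # First derivatives with respect to every coordinate, shape (N, d).
    grad_u = torch.autograd.grad(u.sum(), x, create_graph=True)[0]
    lap = torch.zeros(x.shape[0], dtype=x.dtype, device=x.device)
    for i in range(x.shape[1]):
        # Differentiate the i-th first derivative again and keep only its
        # i-th component, which is d^2 u / dx_i^2.
        d2 = torch.autograd.grad(grad_u[:, i].sum(), x, create_graph=True)[0][:, i]
        lap = lap + d2
    return lap

# Usage on a known function: u(x, y) = x^2 + y^3 has Laplacian 2 + 6y.
pts = torch.rand(8, 2)
lap = laplacian(lambda p: p[:, 0] ** 2 + p[:, 1] ** 3, pts)
```

Two things that may go wrong when moving from dimension 1 to higher dimensions: omitting `create_graph=True` on the first call, which prevents differentiating a second time, and differentiating `grad_u.sum()` in a single call, which adds the mixed partial derivatives to the result instead of giving the Laplacian.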

I have attached a picture of the part of the program that does not work, and I can share the full program (.ipynb) if needed.

Thank you in advance to anyone who can help.

Best