I don’t know why, but when I set affine=True in BatchNorm2d, I get this error: RuntimeError: cuDNN error: CUDNN_STATUS_EXECUTION_FAILED

When I set affine=False in BatchNorm2d, the error does not appear.

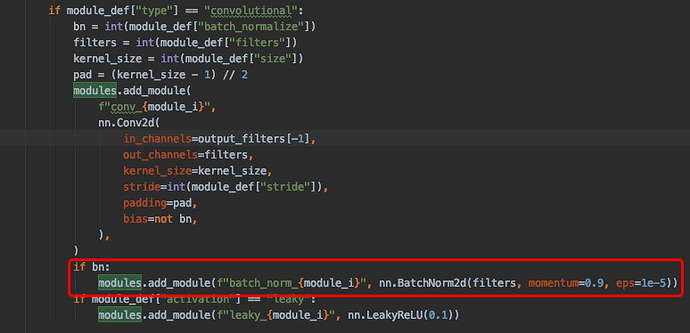

My code is here. Can anybody help me?

Which PyTorch, CUDA and cudnn version and which GPU are you using?
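In case it helps, here is a rough sketch of how these versions can be printed from Python. The helper name `collect_versions` is just made up for illustration, and the guard for a missing PyTorch install is only there so the snippet runs anywhere.

```python
import importlib.util


def collect_versions():
    """Gather the PyTorch, CUDA, cuDNN, and GPU details usually asked for in bug reports."""
    info = {"torch": None, "cuda": None, "cudnn": None, "gpu": None}
    if importlib.util.find_spec("torch") is None:
        # PyTorch is not installed in this environment; nothing more to report.
        return info

    import torch

    info["torch"] = torch.__version__
    # CUDA version PyTorch was built against (None for CPU-only builds).
    info["cuda"] = torch.version.cuda
    # cuDNN version as an integer, or None if cuDNN is unavailable.
    info["cudnn"] = torch.backends.cudnn.version()
    if torch.cuda.is_available():
        info["gpu"] = torch.cuda.get_device_name(0)
    return info


if __name__ == "__main__":
    for key, value in collect_versions().items():
        print(f"{key}: {value}")
```

Pasting the printed output into the post is usually enough to narrow things down.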

I cannot reproduce this error in the latest stable release.

PyTorch==1.1.0, CUDA=10.1.0, cuDNN=7.6.4, GPU=RTX 2080 Ti. I can use BatchNorm in another model, but this code raises the error.

Are you seeing this error by running the posted code screenshot?

Yes, I did…

Could you update PyTorch to the latest stable version (1.4.0) and rerun the code, please?

Thank you. It worked!

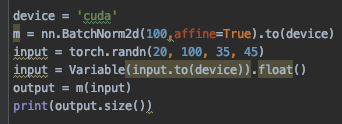

But I successfully used BatchNorm in another model with PyTorch=1.1.0, so I don’t know what happened. The code is shown below.

Not sure what happened and I cannot remember this particular issue, but since it’s fixed in the current version, you should be good to go.

One possible cause is that cuDNN selected a specific code path for your particular GPU, and that path was faulty and raised the error.
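If you ever want to check whether cuDNN is involved in an error like this, one option is to run the same layer with cuDNN switched off via `torch.backends.cudnn.enabled` and compare. The sketch below is only an illustration: the helper name `batchnorm_step` and the tensor sizes are made up, and it does not reproduce the model from your screenshot.

```python
try:
    import torch
    import torch.nn as nn
except ImportError:
    # Lets the sketch load where PyTorch is absent; the helper then returns None.
    torch = None


def batchnorm_step(use_cudnn, affine=True):
    """Run one forward/backward pass through BatchNorm2d and return the output shape."""
    if torch is None:
        return None

    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    previous = torch.backends.cudnn.enabled
    # Global switch: when False, PyTorch uses its native kernels instead of cuDNN.
    torch.backends.cudnn.enabled = use_cudnn
    try:
        bn = nn.BatchNorm2d(16, affine=affine).to(device)
        x = torch.randn(8, 16, 32, 32, device=device, requires_grad=True)
        out = bn(x)
        out.sum().backward()
    finally:
        # Restore the original setting so other code is unaffected.
        torch.backends.cudnn.enabled = previous
    return tuple(out.shape)


if __name__ == "__main__":
    for use_cudnn in (True, False):
        print("cuDNN enabled:", use_cudnn, "->", batchnorm_step(use_cudnn))
```

If the failure only shows up with cuDNN enabled, that points toward the cuDNN path rather than your model code, though it would not tell you which kernel was at fault.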