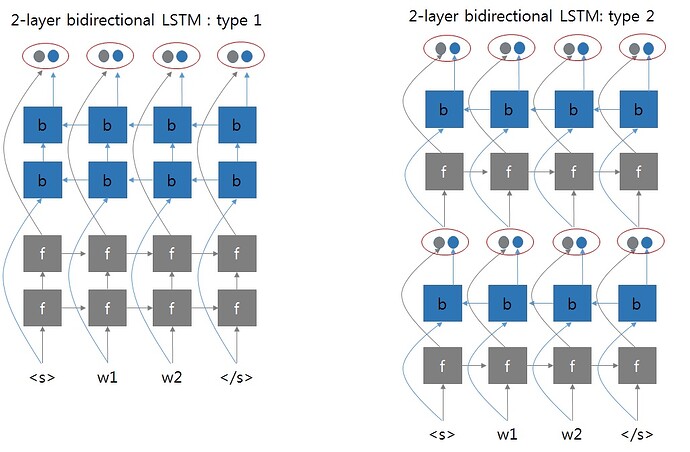

As per my understanding, the PyTorch GRU works as in the 2nd picture: after each layer, the forward and reverse direction outputs are concatenated. Correct me if I am wrong.
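To make the concatenation concrete, here is a minimal sketch of how the shapes can be checked. The sizes (`seq_len`, `batch`, `input_size`, `hidden_size`) are arbitrary values picked for illustration, and the default `batch_first=False` layout is assumed.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Illustrative sizes only (assumptions, not taken from any real model).
seq_len, batch, input_size, hidden_size = 5, 3, 4, 6

gru = nn.GRU(input_size, hidden_size, num_layers=2, bidirectional=True)
x = torch.randn(seq_len, batch, input_size)

output, h_n = gru(x)

# Last-layer output: forward and reverse features side by side in the last dim.
output_shape = tuple(output.shape)  # (seq_len, batch, 2 * hidden_size)

# Final hidden states: one slice per (layer, direction) pair.
h_n_shape = tuple(h_n.shape)  # (num_layers * 2, batch, hidden_size)

# The forward half of the last time step is the last layer's forward final state,
# and the reverse half of the first time step is its reverse final state.
fwd_matches = torch.allclose(output[-1, :, :hidden_size], h_n[-2])
bwd_matches = torch.allclose(output[0, :, hidden_size:], h_n[-1])

print(output_shape, h_n_shape, fwd_matches, bwd_matches)
```

If the two halves of the last dimension line up with `h_n` as above, that would be consistent with the per-layer concatenation shown in the picture.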

The initial hidden state of the first layer is initialised with zeros.
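A small sketch of how the zero initialisation can be checked: calling the GRU without `h_0` should give the same result as passing an explicit all-zero `h_0`. The sizes are again arbitrary assumptions. Note that `h_0` has one slice per layer and per direction, not only one for the first layer.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Illustrative sizes only (assumptions).
seq_len, batch, input_size, hidden_size, num_layers = 5, 3, 4, 6, 2

gru = nn.GRU(input_size, hidden_size, num_layers=num_layers, bidirectional=True)
x = torch.randn(seq_len, batch, input_size)

# Explicit zero initial state: shape (num_layers * num_directions, batch, hidden_size).
h0 = torch.zeros(num_layers * 2, batch, hidden_size)

with torch.no_grad():
    out_default, hn_default = gru(x)    # h_0 omitted
    out_zero, hn_zero = gru(x, h0)      # h_0 given as zeros

same_output = torch.allclose(out_default, out_zero)
same_hidden = torch.allclose(hn_default, hn_zero)

print(same_output, same_hidden)
```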

Is the last hidden state of the 1st layer used as the initial hidden state of the 2nd layer?

I would also like to know: does the second layer take the concatenated output of the 1st layer as its input and operate on it the same way the 1st layer does, or are the operations different?
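One experiment that could test this is sketched below. It rebuilds a 2-layer bidirectional GRU by hand from two separate 1-layer bidirectional GRUs that share its weights, feeds the second one the concatenated output of the first, and starts both from zero hidden states. The names `stacked`, `layer_a` and `layer_b` and all sizes are my own choices for illustration; dropout is left at its default of 0.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Illustrative sizes only (assumptions).
seq_len, batch, input_size, hidden_size = 5, 3, 4, 6

stacked = nn.GRU(input_size, hidden_size, num_layers=2, bidirectional=True)

# Two separate single-layer GRUs; the second expects 2 * hidden_size features.
layer_a = nn.GRU(input_size, hidden_size, bidirectional=True)
layer_b = nn.GRU(2 * hidden_size, hidden_size, bidirectional=True)

# Copy the weights of layer 0 and layer 1 out of the stacked module.
sd = stacked.state_dict()
layer_a.load_state_dict({k: v for k, v in sd.items() if "_l0" in k})
layer_b.load_state_dict(
    {k.replace("_l1", "_l0"): v for k, v in sd.items() if "_l1" in k}
)

# Input-to-hidden weights of the second layer: 3 gates x hidden, fed 2 * hidden features.
l1_input_weight_shape = tuple(stacked.weight_ih_l1.shape)

x = torch.randn(seq_len, batch, input_size)
with torch.no_grad():
    reference, _ = stacked(x)
    mid, _ = layer_a(x)       # concatenated forward/reverse output of layer 1
    manual, _ = layer_b(mid)  # layer 2 starts from its own zero hidden state

matches = torch.allclose(reference, manual, atol=1e-5)
print(l1_input_weight_shape, matches)
```

If `matches` is `True`, the second layer behaves like an ordinary bidirectional GRU layer applied to the concatenated first-layer output, with its own zero initial state rather than the first layer's last hidden state.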

Thank you,

Dinesh