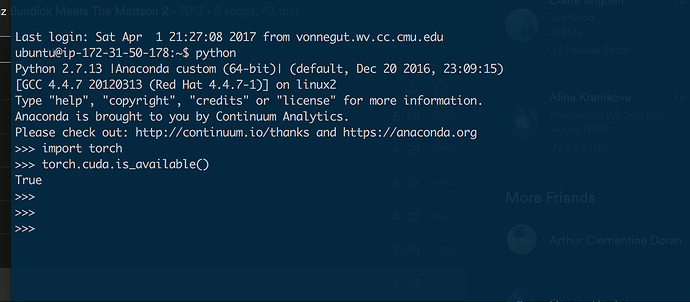

I am trying to accelerate my PyTorch model training on a g2.2xlarge instance with CUDA 7.5 on Ubuntu 14.04. After some hassle getting it provisioned, I have hit a wall: my script hangs indefinitely just after I call torch.cuda.is_available(). I have searched around and can’t find anyone else reporting the same issue. The screen grab above shows my attempt to isolate the call in a REPL on the instance.

It seems like everything is fine after the call to torch.cuda.is_available(), but the process hangs when I attempt to leave the shell. Any help would be much appreciated.
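For anyone who wants to reproduce this outside the REPL, here is a minimal sketch of one way to isolate the hang: run the check in a fresh interpreter with a timeout, so a child process that never exits is reported instead of blocking the shell. The helper name `run_snippet`, the `CHECK` string, and the 60-second default are my own assumptions for illustration, not part of PyTorch.

```python
import subprocess
import sys

# Hypothetical one-liner to test; it is only executed when passed to run_snippet().
CHECK = "import torch; print(torch.cuda.is_available())"


def run_snippet(code, timeout_s=60):
    """Run `code` in a fresh Python interpreter and report whether it exited in time."""
    try:
        proc = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        # The child did not exit within timeout_s; subprocess.run has already killed it.
        return {"timed_out": True, "returncode": None, "stdout": "", "stderr": ""}
    return {
        "timed_out": False,
        "returncode": proc.returncode,
        "stdout": proc.stdout,
        "stderr": proc.stderr,
    }
```

Calling `run_snippet(CHECK)` on the instance should show whether the call itself prints a result and whether the interpreter then fails to exit. If `timed_out` is `True`, the interpreter never exited within the timeout, although this alone does not distinguish a hang inside the call from a hang at shutdown.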