Hello there, I am using LibTorch 2.1.0 on Windows.

I use torch::nn::Sequential to build a network and implement a forward method. When I compile and run the code, the application crashes. Am I missing something? I wonder why this happens and how to solve it. Thanks in advance!

This is the net:

    class Net : public torch::nn::Module
    {
    private:
        torch::nn::Sequential layers{nullptr};

    public:
        Net()
        {
            layers = torch::nn::Sequential();
            layers->push_back(torch::nn::Linear(1, 4));
            layers->push_back(torch::nn::Linear(4, 1));
        }

        at::Tensor forward(at::Tensor x)
        {
            std::cout << "CHECK ... 000" << std::endl;
            x = layers->forward(x);
            std::cout << "CHECK ... 001" << std::endl;
            return x;
        }
    };

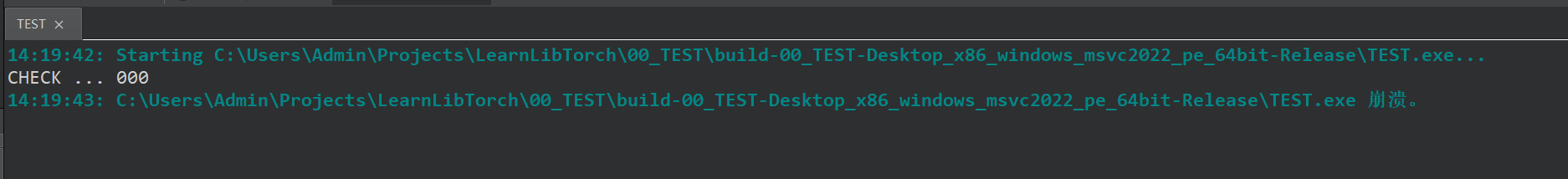

This is the error message:

You need to register your submodules (the Linear layers in your case). For more details, refer to "Using the PyTorch C++ Frontend" in the PyTorch Tutorials 2.1.0+cu121 documentation.

Thanks for the reply. But I use torch::nn::Sequential to hold the submodule layers, and I looked at the relevant implementation in class SequentialImpl.

In my code, I call push_back to add a submodule:

    layers->push_back(module);

The definition of this function is:

    /// Adds a type-erased `AnyModule` to the `Sequential`.
    void push_back(AnyModule any_module) {
      push_back(c10::to_string(modules_.size()), std::move(any_module));
    }

This in turn calls the following overload:

    void push_back(std::string name, AnyModule any_module) {
      modules_.push_back(std::move(any_module));
      const auto index = modules_.size() - 1;
      register_module(std::move(name), modules_[index].ptr());
    }

So calling push_back means the submodule has already been registered with the Sequential, but forward still fails.

It seems that we can't define a member of type torch::nn::Sequential and call forward on it inside a class that inherits from torch::nn::Module. I am not sure about this, but when I move the torch::nn::Sequential outside the class and build the network directly, it works without error.

The cause of this problem: I forgot to call register_module in Net to register the torch::nn::Sequential member itself, which I should have done. push_back only registers each layer with the Sequential, not the Sequential with Net.

Sorry if anyone was misled.