Hello, @jayz!

I’m not a specialist in RNNs either, and I also got confused when learning them.

But I think I can help you in this case.

Answering your questions:

Q1: if we wanted to have just the last / most recent hidden state, we would simply slice the last column h_L of that matrix?

Q2: there is h_n, which, as I understand, is exactly this last column of the matrix?

A: For both questions, the answer is yes. For a single-layer, unidirectional RNN or GRU, h_n is exactly the last time step of the output.
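A quick way to check this yourself is the minimal sketch below. The sizes are arbitrary values I picked for illustration, and it assumes the default layout (`batch_first=False`), where the time step is the first dimension of `output`:

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Illustrative sizes (assumptions): L time steps, N sequences in the batch.
L, N, H_in, H_out = 5, 3, 4, 6
x = torch.randn(L, N, H_in)  # default layout is (L, N, H_in)

results = {}
for name, cell in [("RNN", nn.RNN), ("GRU", nn.GRU)]:
    rnn = cell(input_size=H_in, hidden_size=H_out)  # one layer, one direction
    output, h_n = rnn(x)
    # output: (L, N, H_out), the hidden state at every time step
    # h_n:    (num_layers, N, H_out), the hidden state after the last step
    results[name] = torch.allclose(output[-1], h_n[-1])
    print(name, tuple(output.shape), tuple(h_n.shape), results[name])
```

With `batch_first=True` the time step becomes the second dimension, so the slice would be `output[:, -1]` instead of `output[-1]`.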

Q3: do I misunderstand the whole thing?

A: No, you understood it correctly. For the classic RNN and the GRU, the output at each time step is also the hidden state that is carried to the next step.

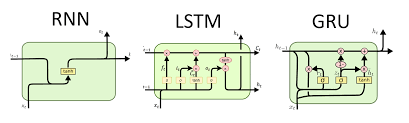

What you may not have learned yet, or may not be considering, is that there is another type of RNN called LSTM (Long Short-Term Memory). This architecture keeps two values at each step: a hidden state h_t, which is still what appears in the output, and a separate cell state c_t.

If I’m not wrong, the classic RNN was developed first, then the LSTM, and finally the GRU as a simplification of the LSTM.

Q4: Is this returned for convenience?

A: Yes, it is returned for convenience, considering the different types of RNNs (classic, LSTM or GRU).

PyTorch returns h_n from all of its RNN implementations so that you can swap the type of RNN without rewriting your whole code. The only difference is that nn.LSTM returns a tuple (h_n, c_n) in its place, where c_n is the final cell state.
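To make the swapping idea concrete, here is a small sketch. The helper `last_hidden` is a hypothetical name of mine, not a PyTorch function, and the sizes are arbitrary. It assumes a unidirectional module, where `h_n[-1]` is the final hidden state of the last layer:

```python
import torch
import torch.nn as nn

def last_hidden(rnn: nn.Module, x: torch.Tensor) -> torch.Tensor:
    """Hypothetical helper: final hidden state of the last layer, shape (N, H_out)."""
    output, state = rnn(x)
    # nn.LSTM returns the tuple (h_n, c_n); nn.RNN and nn.GRU return h_n alone.
    h_n = state[0] if isinstance(state, tuple) else state
    return h_n[-1]

torch.manual_seed(0)
x = torch.randn(5, 3, 4)  # (L, N, H_in), illustrative sizes

shapes = {}
for cell in (nn.RNN, nn.GRU, nn.LSTM):
    rnn = cell(input_size=4, hidden_size=6)
    shapes[cell.__name__] = tuple(last_hidden(rnn, x).shape)

# For the LSTM, the extra value is the cell state c_n.
lstm = nn.LSTM(input_size=4, hidden_size=6)
output, (h_n, c_n) = lstm(x)
lstm_match = torch.allclose(output[-1], h_n[-1])
```

The only line that depends on the architecture is the one that unpacks the state, so the rest of the code stays the same when you change the RNN type.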

Q5: Furthermore, if we wanted to learn the output of the rnn cell with a different dimension than the hidden state has, we would put for instance a fully connected layer with dimension (Hout,O) “on top” of the last state of the rnn, where O is the intended size of the output? Then, if we wanted to return an entire sequence, we would apply the learned hidden-to-output function with weight matrix dimension (Hout,O) to as many past hidden states as the desired prediction sequence length?

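A: Within the classic RNN itself, it’s not possible to use different dimensions for the output and the hidden state, exactly because they are the same tensor. The same holds for nn.GRU and nn.LSTM by default. To get a different output size O, the usual approach is the one you describe: put a fully connected layer of shape (Hout, O) on top and apply it to the last hidden state, or to every hidden state you want a prediction for.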
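The recurrent module itself always emits `hidden_size` features, but a linear layer on top, as described in Q5, can map them to any size O. Below is a rough sketch of that idea. The class name `RNNWithHead` and all sizes are made up for illustration:

```python
import torch
import torch.nn as nn

class RNNWithHead(nn.Module):
    """Hypothetical wrapper: an nn.RNN followed by a hidden-to-output linear map."""

    def __init__(self, h_in: int, h_out: int, o: int):
        super().__init__()
        self.rnn = nn.RNN(input_size=h_in, hidden_size=h_out)
        self.head = nn.Linear(h_out, o)  # maps H_out features to O features

    def forward(self, x: torch.Tensor):
        output, h_n = self.rnn(x)    # output: (L, N, h_out)
        y_seq = self.head(output)    # applied at every time step: (L, N, o)
        y_last = self.head(h_n[-1])  # applied to the last state only: (N, o)
        return y_seq, y_last

torch.manual_seed(0)
model = RNNWithHead(h_in=4, h_out=6, o=2)
y_seq, y_last = model(torch.randn(5, 3, 4))  # input is (L, N, H_in)
print(tuple(y_seq.shape), tuple(y_last.shape))
```

Because the same `head` weights are shared across time steps, predicting over only the last K steps would just mean applying it to `output[-K:]`.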

Finally, here is an image with the details of the classic RNN, LSTM and GRU:

Hope that it helps you!

Best regards,

Rafael Macedo.