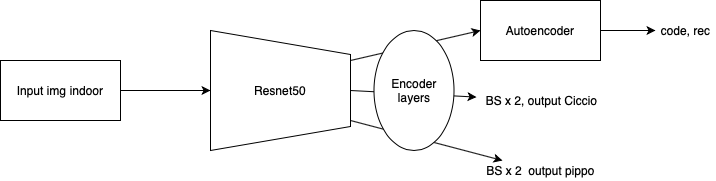

Hi guys, I want to create a model like the one in the image above.

I need to know whether I made any mistakes, because I am not sure the code is correct:

import math

import torch.nn as nn
# torchvision.models.utils was removed in newer torchvision releases; torch.hub provides the same helper
from torch.hub import load_state_dict_from_url

# ResNet###### BLOCKS #####################################

def conv3x3(in_planes, out_planes, stride=1):
    """3x3 convolution with padding of 1"""
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride, padding=1, bias=False)

class BasicBlock(nn.Module):
    expansion = 1

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)

        return out

class Bottleneck(nn.Module):
    expansion = 4

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,
                               padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes)
        self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * 4)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual
        out = self.relu(out)

        return out

###############################################################
################# ENCODER WITH RESNET #########################
###############################################################

class Encoder(nn.Module):

    def __init__(self, block, layers):
        super(Encoder, self).__init__()
        self.inplanes = 64
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)  # , return_indices = True)
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        self.avgpool = nn.AvgPool2d(7, stride=1)
        self.fc = nn.Linear(512 * block.expansion, 1000)

        # He-style initialization for convolutions, identity for batch norm
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, math.sqrt(2. / n))
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion, kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )

        layers = []
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for i in range(1, blocks):
            layers.append(block(self.inplanes, planes))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        x = self.avgpool(x)
        x = x.view(x.size(0), -1)
        x = self.fc(x)

        return x

def create_encoder(pretrained=False):
    model = Encoder(Bottleneck, [3, 4, 6, 3])  # with these parameters it is a ResNet-50
    if pretrained:
        # ResNet-50 weights; the resnext50_32x4d checkpoint used before has grouped
        # convolutions and does not match the plain Bottleneck defined in this post
        state_dict = load_state_dict_from_url('https://download.pytorch.org/models/resnet50-0676ba61.pth')
        model.load_state_dict(state_dict)

    ###### Add encoder layers as suggested
    model.fc = nn.Sequential(
        nn.Linear(2048, 1024),
        nn.ReLU(),
        nn.Linear(1024, 512),
        nn.ReLU(),
        nn.Linear(512, 256),
        nn.ReLU(),
        nn.Linear(256, 128),
        nn.ReLU(),
        nn.Linear(128, 64),
        nn.ReLU())
    return model

encoder = create_encoder(True)

################ ENCODER OUTPUT: intended 7x7x64, but the fc stack returns a flat (N, 64) vector

class AutoEncoder(nn.Module):

    def __init__(self):
        super(AutoEncoder, self).__init__()
        self.encoder = encoder
        self.decoder = nn.Sequential(
            nn.ConvTranspose2d(64, 64, 3),
            nn.ConvTranspose2d(64, 64, 3),
            nn.ReLU(),
            nn.ConvTranspose2d(64, 128, 3),
            nn.ConvTranspose2d(128, 128, 3),
            nn.ReLU(),
            nn.ConvTranspose2d(128, 256, 3),
            nn.ConvTranspose2d(256, 256, 3),
            nn.ReLU(),
            nn.ConvTranspose2d(256, 512, 3),
            nn.ConvTranspose2d(512, 512, 3),
            nn.ReLU(),
            nn.ConvTranspose2d(512, 1024, 3),
            nn.ConvTranspose2d(1024, 3, 3))

    def forward(self, x):
        code = self.encoder(x)
        # ConvTranspose2d needs a 4D input, so reshape (N, 64) to (N, 64, 1, 1);
        # the reconstruction is then much smaller than the input image
        reconstructed = self.decoder(code.view(code.size(0), 64, 1, 1))
        return code, reconstructed
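As a sanity check on the decoder, here is a small sketch of the spatial-size arithmetic. It assumes the output-size formula given in the PyTorch `nn.ConvTranspose2d` documentation; the helper names `conv_transpose2d_out` and `decoder_out` are made up for this example and are not part of PyTorch.

```python
def conv_transpose2d_out(size, kernel_size, stride=1, padding=0,
                         output_padding=0, dilation=1):
    """Output height/width of one ConvTranspose2d layer (formula from the PyTorch docs)."""
    return ((size - 1) * stride - 2 * padding
            + dilation * (kernel_size - 1) + output_padding + 1)


def decoder_out(size, num_layers=10, kernel_size=3, stride=1, padding=0):
    """Apply the formula once per ConvTranspose2d layer (the decoder in this post has 10)."""
    for _ in range(num_layers):
        size = conv_transpose2d_out(size, kernel_size, stride, padding)
    return size


print(decoder_out(7))  # 27: starting from a 7x7 map
print(decoder_out(1))  # 21: starting from a 1x1 map
```

With kernel 3, stride 1 and no padding, every layer only adds 2 pixels, so the ten layers take 7 to 27 (or 1 to 21), nowhere near a 224x224 input. If I read the formula right, something like `kernel_size=4, stride=2, padding=1` would double the size at each layer instead (7, 14, 28, 56, 112, 224), but that is only one possible choice.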

class Ciccio(nn.Module):

    def __init__(self):
        super(Ciccio, self).__init__()  # the call to __init__() was missing
        self.encoder = encoder
        self.ciccio = nn.Sequential(nn.Linear(64, 2), nn.ReLU())  # nn.ReLU, not nn.Relu

    def forward(self, x):
        code = self.encoder(x)
        ciccio = self.ciccio(code)
        return ciccio

class Pippo(nn.Module):

    def __init__(self):
        super(Pippo, self).__init__()  # the call to __init__() was missing
        self.encoder = encoder
        self.pippo = nn.Sequential(nn.Linear(64, 2), nn.ReLU())  # nn.ReLU, not nn.Relu

    def forward(self, x):
        code = self.encoder(x)
        pippo = self.pippo(code)
        return pippo

class final_model(nn.Module):

    def __init__(self):
        super(final_model, self).__init__()  # required before registering submodules
        self.AutoEncoder = AutoEncoder()
        self.Ciccio = Ciccio()
        self.Pippo = Pippo()

    def forward(self, x):
        code, rec = self.AutoEncoder(x)
        Ciccio = self.Ciccio(x)
        Pippo = self.Pippo(x)
        # check dimensions at runtime
        return code, rec, Ciccio, Pippo
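To check the dimensions at runtime, as the comment in `final_model.forward` suggests, the simplest thing I know is to push a dummy batch through the modules and print the shapes. The sketch below uses tiny stand-in layers with made-up sizes (an 8x8 input, a single `Linear` as "encoder") so that it runs quickly without downloading weights; it is not the model from this post.

```python
import torch
import torch.nn as nn

# Hypothetical stand-ins: a toy encoder producing a 64-dim code and a 2-output head
toy_encoder = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 64), nn.ReLU())
toy_head = nn.Sequential(nn.Linear(64, 2), nn.ReLU())

x = torch.randn(4, 3, 8, 8)  # dummy batch: 4 images, 3 channels, 8x8 pixels

with torch.no_grad():  # no gradients needed for a shape check
    code = toy_encoder(x)
    out = toy_head(code)

print(code.shape)  # torch.Size([4, 64])
print(out.shape)   # torch.Size([4, 2])
```

The same pattern should work with the real modules by replacing the stand-ins and using a `torch.randn(1, 3, 224, 224)` input, assuming 224x224 images.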

I copied and edited the ResNet code myself because I plan to modify it entirely in the future.

Sorry for my bad English.