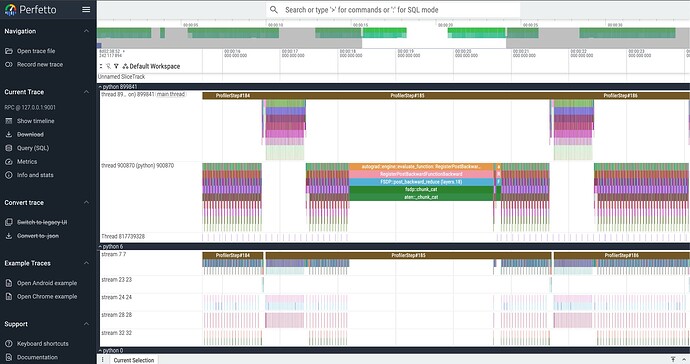

I’ve been having an issue pre-training Llama 8B with FSDP2 on a single on-prem bare-metal 8x H200 node: performance is very jittery because some CPU ops inexplicably take a couple of seconds before enqueuing any CUDA kernels. I’ve profiled a single rank, and the trace above shows an example: one aten::chunk_cat call takes 2.5 seconds, while other instances of aten::chunk_cat in other iterations take only about 2 ms. The next-slowest was only 250 ms. I’m really confused, since none of the GPU streams appear to be busy during this time either.
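For anyone who wants to find these stalls in their own trace without eyeballing the timeline, here is a minimal sketch. It assumes the trace was exported in Chrome trace format (complete events with `"ph": "X"`, and `dur` in microseconds); the helper names `load_events` and `find_slow_calls` and the 50x threshold are my own illustrative choices, not part of any library.

```python
import json
from statistics import median


def load_events(path):
    """Load events from a Chrome-format trace file (hypothetical path)."""
    with open(path) as f:
        data = json.load(f)
    # A Chrome trace is either a bare list of events or a dict holding "traceEvents".
    return data["traceEvents"] if isinstance(data, dict) else data


def find_slow_calls(events, op_name, factor=50.0):
    """Return (ts, dur) pairs for calls to op_name that took more than
    `factor` times the median duration of that op. Times are in microseconds."""
    calls = [
        (e.get("ts"), e["dur"])
        for e in events
        if e.get("ph") == "X" and e.get("name") == op_name and "dur" in e
    ]
    if not calls:
        return []
    typical = median(dur for _, dur in calls)
    return [(ts, dur) for ts, dur in calls if dur > factor * typical]


# Synthetic events mirroring the numbers in this post: ~2 ms typical,
# one 250 ms call and one 2.5 s call.
example = [
    {"ph": "X", "name": "aten::chunk_cat", "ts": 0, "dur": 2_000},
    {"ph": "X", "name": "aten::chunk_cat", "ts": 10, "dur": 2_000},
    {"ph": "X", "name": "aten::chunk_cat", "ts": 20, "dur": 2_000},
    {"ph": "X", "name": "aten::chunk_cat", "ts": 30, "dur": 250_000},
    {"ph": "X", "name": "aten::chunk_cat", "ts": 40, "dur": 2_500_000},
    {"ph": "X", "name": "aten::mm", "ts": 50, "dur": 9_000_000},
]
slow = find_slow_calls(example, "aten::chunk_cat")
```

Running the same function over several op names should show whether the slow calls cluster at the same timestamps, which would point at the thread rather than at any one op.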

From many other profiles I’ve taken, sampled at different iterations, the stall doesn’t seem to be specific to this operation. I’ve ruled out data starvation, since the stalls always happen in the middle of the fwd/bwd passes and my WandB metrics from torchtitan show only 1% of time spent on data loading. Profiling with Nsight also didn’t turn up any thread synchronization issues that might cause this. Any suggestions or other things I should check would be very helpful!

(titan) root@se-h1-17-gpu:~/torchtitan# python -m torch.utils.collect_env

/root/miniconda3/envs/titan/lib/python3.10/runpy.py:126: RuntimeWarning: 'torch.utils.collect_env' found in sys.modules after import of package 'torch.utils', but prior to execution of 'torch.utils.collect_env'; this may result in unpredictable behaviour

warn(RuntimeWarning(msg))

Collecting environment information...

PyTorch version: 2.10.0.dev20250926+cu128

Is debug build: False

CUDA used to build PyTorch: 12.8

ROCM used to build PyTorch: N/A

OS: Ubuntu 22.04.5 LTS (x86_64)

GCC version: (Ubuntu 11.4.0-1ubuntu1~22.04.2) 11.4.0

Clang version: Could not collect

CMake version: version 3.22.1

Libc version: glibc-2.35

Python version: 3.10.18 (main, Jun 5 2025, 13:14:17) [GCC 11.2.0] (64-bit runtime)

Python platform: Linux-5.15.0-151-generic-x86_64-with-glibc2.35

Is CUDA available: True

CUDA runtime version: 12.9.86

CUDA_MODULE_LOADING set to:

GPU models and configuration:

GPU 0: NVIDIA H200

GPU 1: NVIDIA H200

GPU 2: NVIDIA H200

GPU 3: NVIDIA H200

GPU 4: NVIDIA H200

GPU 5: NVIDIA H200

GPU 6: NVIDIA H200

GPU 7: NVIDIA H200

Nvidia driver version: 575.57.08

cuDNN version: Could not collect

Is XPU available: False

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 52 bits physical, 57 bits virtual

Byte Order: Little Endian

CPU(s): 192

On-line CPU(s) list: 0-191

Vendor ID: GenuineIntel

Model name: INTEL(R) XEON(R) PLATINUM 8558

CPU family: 6

Model: 207

Thread(s) per core: 2

Core(s) per socket: 48

Socket(s): 2

Stepping: 2

CPU max MHz: 4000.0000

CPU min MHz: 800.0000

BogoMIPS: 4200.00

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse sse2 ss ht tm pbe syscall nx pdpe1gb rdtscp lm constant_tsc art arch_perfmon pebs bts rep_good nopl xtopology nonstop_tsc cpuid aperfmperf tsc_known_freq pni pclmulqdq dtes64 monitor ds_cpl vmx smx est tm2 ssse3 sdbg fma cx16 xtpr pdcm pcid dca sse4_1 sse4_2 x2apic movbe popcnt tsc_deadline_timer aes xsave avx f16c rdrand lahf_lm abm 3dnowprefetch cpuid_fault epb cat_l3 cat_l2 cdp_l3 invpcid_single intel_ppin cdp_l2 ssbd mba ibrs ibpb stibp ibrs_enhanced tpr_shadow vnmi flexpriority ept vpid ept_ad fsgsbase tsc_adjust bmi1 avx2 smep bmi2 erms invpcid cqm rdt_a avx512f avx512dq rdseed adx smap avx512ifma clflushopt clwb intel_pt avx512cd sha_ni avx512bw avx512vl xsaveopt xsavec xgetbv1 xsaves cqm_llc cqm_occup_llc cqm_mbm_total cqm_mbm_local split_lock_detect avx_vnni avx512_bf16 wbnoinvd dtherm ida arat pln pts hwp hwp_act_window hwp_epp hwp_pkg_req avx512vbmi umip pku ospke waitpkg avx512_vbmi2 gfni vaes vpclmulqdq avx512_vnni avx512_bitalg tme avx512_vpopcntdq la57 rdpid bus_lock_detect cldemote movdiri movdir64b enqcmd fsrm md_clear serialize tsxldtrk pconfig arch_lbr amx_bf16 avx512_fp16 amx_tile amx_int8 flush_l1d arch_capabilities

Virtualization: VT-x

L1d cache: 4.5 MiB (96 instances)

L1i cache: 3 MiB (96 instances)

L2 cache: 192 MiB (96 instances)

L3 cache: 520 MiB (2 instances)

NUMA node(s): 4

NUMA node0 CPU(s): 0-23,96-119

NUMA node1 CPU(s): 24-47,120-143

NUMA node2 CPU(s): 48-71,144-167

NUMA node3 CPU(s): 72-95,168-191

Vulnerability Gather data sampling: Not affected

Vulnerability Indirect target selection: Not affected

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Not affected

Vulnerability Mmio stale data: Not affected

Vulnerability Reg file data sampling: Not affected

Vulnerability Retbleed: Not affected

Vulnerability Spec rstack overflow: Not affected

Vulnerability Spec store bypass: Vulnerable

Vulnerability Spectre v1: Vulnerable: __user pointer sanitization and usercopy barriers only; no swapgs barriers

Vulnerability Spectre v2: Vulnerable; IBPB: disabled; STIBP: disabled; PBRSB-eIBRS: Vulnerable; BHI: Vulnerable

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Versions of relevant libraries:

[pip3] numpy==2.2.6

[pip3] nvidia-cublas-cu12==12.8.4.1

[pip3] nvidia-cuda-cupti-cu12==12.8.90

[pip3] nvidia-cuda-nvrtc-cu12==12.8.93

[pip3] nvidia-cuda-runtime-cu12==12.8.90

[pip3] nvidia-cudnn-cu12==9.10.2.21

[pip3] nvidia-cufft-cu12==11.3.3.83

[pip3] nvidia-curand-cu12==10.3.9.90

[pip3] nvidia-cusolver-cu12==11.7.3.90

[pip3] nvidia-cusparse-cu12==12.5.8.93

[pip3] nvidia-cusparselt-cu12==0.7.1

[pip3] nvidia-nccl-cu12==2.27.5

[pip3] nvidia-nvjitlink-cu12==12.8.93

[pip3] nvidia-nvtx-cu12==12.8.90

[pip3] pytorch-triton==3.5.0+gitbbb06c03

[pip3] torch==2.10.0.dev20250926+cu128

[pip3] torchdata==0.11.0

[pip3] torchtitan==0.1.0.dev20250926+cu128

[conda] numpy 2.2.6 pypi_0 pypi

[conda] nvidia-cublas-cu12 12.8.4.1 pypi_0 pypi

[conda] nvidia-cuda-cupti-cu12 12.8.90 pypi_0 pypi

[conda] nvidia-cuda-nvrtc-cu12 12.8.93 pypi_0 pypi

[conda] nvidia-cuda-runtime-cu12 12.8.90 pypi_0 pypi

[conda] nvidia-cudnn-cu12 9.10.2.21 pypi_0 pypi

[conda] nvidia-cufft-cu12 11.3.3.83 pypi_0 pypi

[conda] nvidia-curand-cu12 10.3.9.90 pypi_0 pypi

[conda] nvidia-cusolver-cu12 11.7.3.90 pypi_0 pypi

[conda] nvidia-cusparse-cu12 12.5.8.93 pypi_0 pypi

[conda] nvidia-cusparselt-cu12 0.7.1 pypi_0 pypi

[conda] nvidia-nccl-cu12 2.27.5 pypi_0 pypi

[conda] nvidia-nvjitlink-cu12 12.8.93 pypi_0 pypi

[conda] nvidia-nvtx-cu12 12.8.90 pypi_0 pypi

[conda] pytorch-triton 3.5.0+gitbbb06c03 pypi_0 pypi

[conda] torch 2.10.0.dev20250926+cu128 pypi_0 pypi

[conda] torchdata 0.11.0 pypi_0 pypi

[conda] torchtitan 0.1.0.dev20250926+cu128 pypi_0 pypi

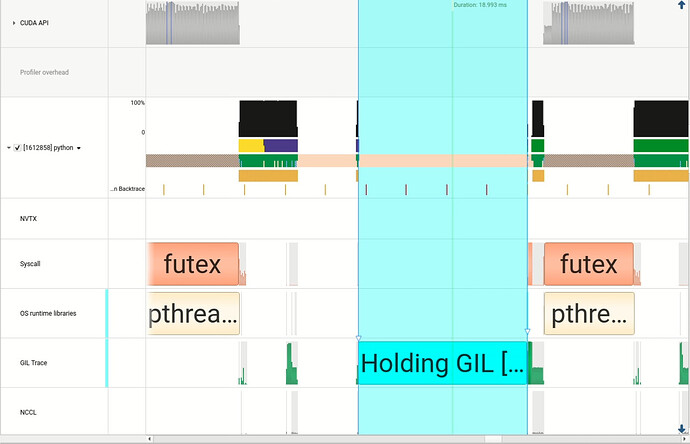

I did another profiling pass, this time with NVIDIA nsys, and it looks like the active thread gets descheduled from the CPU (yellow/navy blue) for a few seconds before becoming active again (green). Could this be a kernel/OS setting?
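One way to cross-check the descheduling from inside the process is to read the per-thread counters Linux exposes under `/proc/<pid>/task/<tid>/status`. This is only a rough sketch under that assumption (Linux-only; the helper names `parse_status` and `thread_stats` are made up for illustration). A fast-growing `nonvoluntary_ctxt_switches` count would suggest the thread is being preempted, while growth in `voluntary_ctxt_switches` would suggest it is blocking on something, and `Cpus_allowed_list` shows which cores it is allowed to run on.

```python
import os
from pathlib import Path

# Fields of interest in /proc/<pid>/task/<tid>/status (Linux-specific).
FIELDS = (
    "Name",
    "Cpus_allowed_list",
    "voluntary_ctxt_switches",
    "nonvoluntary_ctxt_switches",
)


def parse_status(text):
    """Extract the fields above from the text of a /proc status file."""
    out = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key in FIELDS:
            out[key] = value.strip()
    return out


def thread_stats(pid=None):
    """Return {tid: parsed status fields} for every thread of a process.
    Returns an empty dict where /proc is unavailable."""
    pid = os.getpid() if pid is None else pid
    task_dir = Path(f"/proc/{pid}/task")
    if not task_dir.is_dir():
        return {}
    stats = {}
    for task in task_dir.iterdir():
        try:
            stats[int(task.name)] = parse_status((task / "status").read_text())
        except (OSError, ValueError):
            continue  # thread exited while we were reading, or odd entry
    return stats
```

Calling `thread_stats()` before and after a slow iteration (or pointing it at a rank's PID from another shell) and diffing the counters per thread should show whether the stalled thread was preempted or went to sleep on its own.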