Hi,

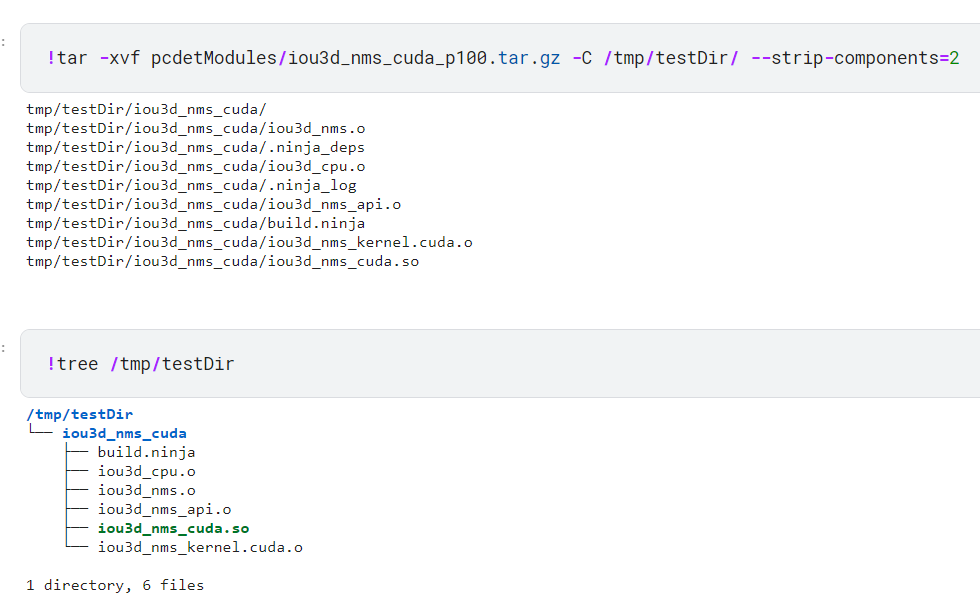

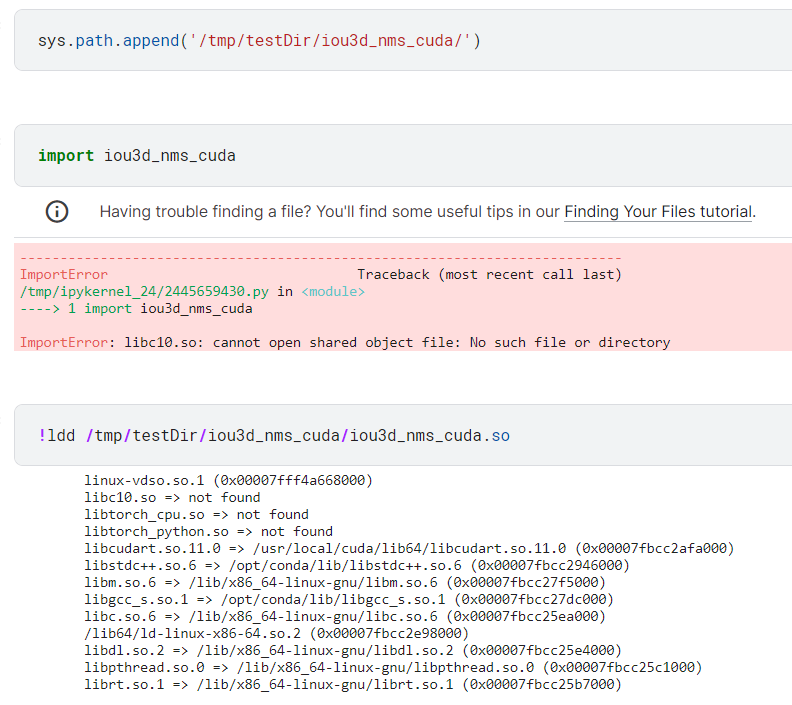

I’m trying to compile a CUDA extension from a set of .cu and .cpp files in the Kaggle environment. I followed this tutorial and was able to load it as a Python module. I want to save the built module to a file and load it in another instance (the same notebook at a later time, or a different notebook), so that I don’t need to rebuild the module every time. The hardware will be the same in all scenarios (P100).

I tried passing the `is_python_module=False, is_standalone=True` arguments to the `cpp_extension.load()` function, assuming it would give me an executable that can be reused. Is my understanding of these arguments correct?
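For context on the arguments: per the `torch.utils.cpp_extension.load()` documentation, `is_standalone=True` links a standalone executable and returns its path (so the sources have to define their own `main()`), while the defaults (`is_python_module=True`, `is_standalone=False`) build a shared library and import it as a Python module. With the defaults, the compiled library is already a file on disk, and the loaded module's `__file__` points at it. A minimal sketch of copying that file somewhere persistent, assuming a hypothetical helper name `save_built_library` and that `/kaggle/working` is where files should be kept between sessions:

```python
import os
import shutil


def save_built_library(module, dest_dir):
    """Copy the compiled library behind an already-loaded extension module into dest_dir."""
    # For a module imported from a shared library, __file__ is the path of that
    # library, e.g. <build directory>/<name>.so on Linux.
    src = os.path.abspath(module.__file__)
    os.makedirs(dest_dir, exist_ok=True)
    # Keep the original file name so the extension name can still be read from it.
    dst = os.path.join(dest_dir, os.path.basename(src))
    shutil.copy2(src, dst)
    return dst
```

Usage would be along the lines of `save_built_library(ext, "/kaggle/working/my_ext_build")` right after `ext = load(name="my_ext", sources=[...])`, where `my_ext` is a placeholder name. When the file is imported again, the module name has to match the original `name`, because the compiled init function is named after it.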

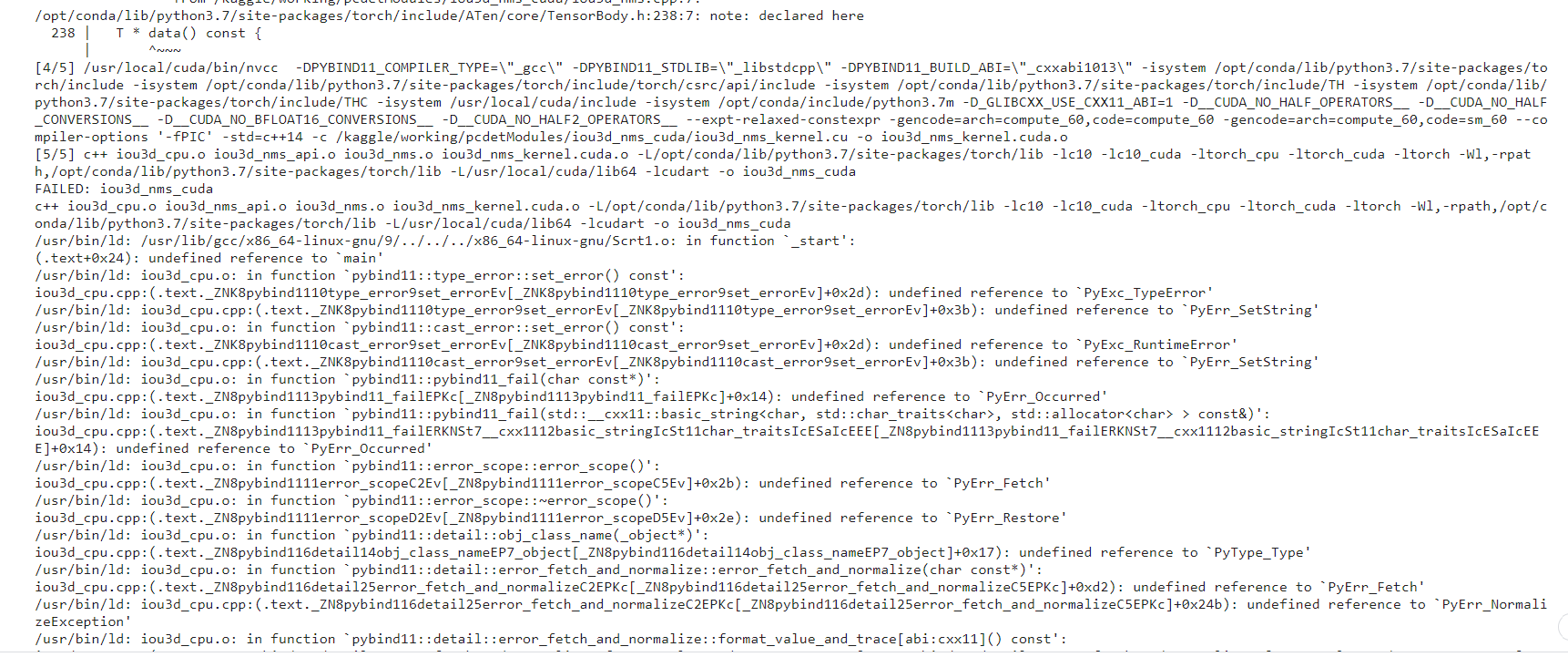

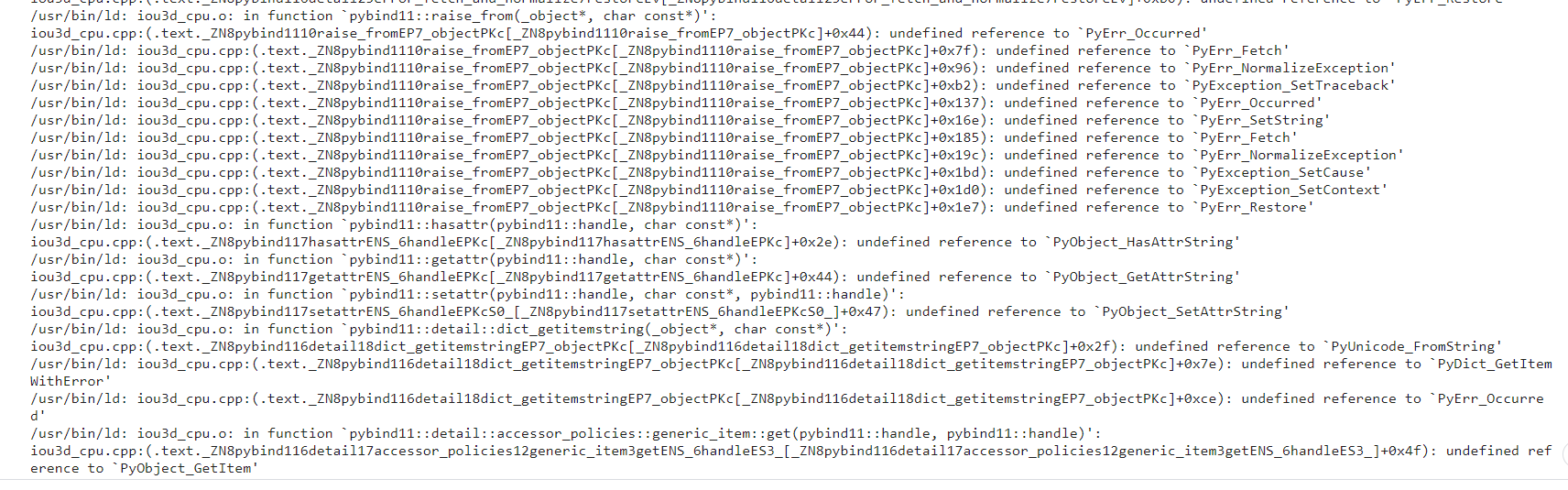

Another thing: I get a bunch of undefined reference errors, so the build fails.

Is there any other source file or argument I need to set to build an executable that can be saved to a file and later imported as a Python module in another environment (same platform)?
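A later session should be able to import a saved library with plain `importlib`, without recompiling. This is a sketch under several assumptions: the saved file is the `<name>.so` that `load()` produced, with its original file name; `torch` is imported first so the PyTorch shared libraries the extension links against are already loaded; and the later session uses the same PyTorch, CUDA and Python versions as the build. `find_built_extension` and `load_prebuilt_extension` are hypothetical helper names.

```python
import importlib.machinery
import importlib.util
import os
import sys


def find_built_extension(name, directory):
    """Return the path of the compiled extension `name` inside `directory`."""
    # EXTENSION_SUFFIXES lists the suffixes this interpreter can import as
    # extension modules; a plain ".so" is among them on Linux.
    for suffix in importlib.machinery.EXTENSION_SUFFIXES:
        candidate = os.path.join(directory, name + suffix)
        if os.path.isfile(candidate):
            return candidate
    raise FileNotFoundError(f"no compiled extension named {name!r} in {directory}")


def load_prebuilt_extension(name, directory):
    """Import a previously compiled extension module without rebuilding it."""
    import torch  # noqa: F401  (loads libtorch/libc10 so the extension's dependencies resolve)

    path = find_built_extension(name, directory)
    # The module name must match the `name` the extension was built with,
    # because the compiled init function (PyInit_<name>) is looked up by it.
    spec = importlib.util.spec_from_file_location(name, path)
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)
    sys.modules[name] = module
    return module
```

In the same notebook this would be something like `my_ext = load_prebuilt_extension("my_ext", "/kaggle/working/my_ext_build")`; in a different notebook, the directory would be wherever the saved output is attached.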

I tried following this post, but I was unable to use the `build_directory` option.
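Per the `load()` documentation, `build_directory` overrides the entire build path, while the `TORCH_EXTENSIONS_DIR` environment variable only replaces the root under which per-extension subfolders are created. A minimal sketch of building straight into a persistent folder, assuming the directory should exist before `load()` is called (creating it up front is harmless either way) and using the hypothetical helper names `persistent_build_dir` and `build_into`:

```python
import os


def persistent_build_dir(root, name):
    """Return root/name, creating the directory first so load() can write into it."""
    path = os.path.join(root, name)
    os.makedirs(path, exist_ok=True)
    return path


def build_into(root, name, sources):
    """JIT-compile the extension into root/name and return the imported module."""
    from torch.utils.cpp_extension import load  # lazy import: only needed when building

    build_dir = persistent_build_dir(root, name)
    # With build_directory set, build.ninja, the object files and <name>.so all
    # land in build_dir, so the .so can be picked up from there later.
    return load(name=name, sources=sources, build_directory=build_dir, verbose=True)
```

Usage would be `build_into("/kaggle/working", "my_ext", ["my_ext.cpp", "my_ext_kernel.cu"])`, where the extension and source names are placeholders.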

It would be very helpful if someone could help me out with this.