Hello,

I was working with a NN that takes patches of size 32x32x32. The test image sizes are 172x220x156.

How do I get non-overlapping patches and add the output patches to get the whole image together.

I have tried hardcoding with loops and indexing but is there any other way to do so?

Also looked in torch.unfold option too… but not sure how to implement that in my case.

In your last topic, I’ve posted a small example, how to get non-overlapping patches: link.

Have a look at this post to see, how these patches can be transformed into the original input tensor.

Note, that your current input shape it not divisible without a remainder by 32, so you might want to add padding to the input before unfolding.

Hi @ptrblck

I have followed the code for reconstructing the estimated image.

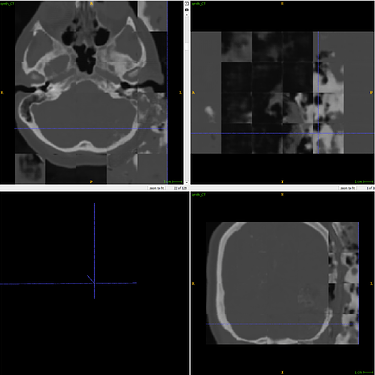

As you can see, some parts are quite fine whereas some are pretty bad and mostly the patches can be visible like mosaics.

Is there a way might make the patches smoother to form the image.

TIA

Did you run the last equality check in the linked code and if so, was it successful?

Hi @ptrblck

How do I run the equality test in my case?

The output from network is of size output = np.array(output) #(12,10,32,32,32)

and the final image size after the code is (1,160,192,128)

The example you gave takes an image, breaks it down and then reconstructs it back.

In my case the image shape changes in the test_loader from (1,172,220,156) to (10,1,32,32,32)

Please see the codes below for detail(I mentioned the shapes as comments)

for i in filenames_test: #filenames_test consists a single 172,220,156 shaped image

path_mr = os.path.join(dir_test, 'MR', i)

MR = nib.load(path_mr).get_data()

path_ct = os.path.join(dir_test, 'CT', i)

CT = nib.load(path_ct).get_data()

X = get_nonoverlapping_patch(MR,patch_size) # torch.Size([120,32,32,32])

y = get_nonoverlapping_patch(CT,patch_size)

#get_nonoverlapping_patch creates the patches, before the reshape section

patches_MR.append(X)

patches_CT.append(y)

print(i,X.shape,y.shape) #(120, 32, 32, 32)

test_dataset = Dataset(patches_MR, patches_CT)

test_loader = data.DataLoader(test_dataset, batch_size=10, shuffle=False)

for i,sample in enumerate(test_loader):

with torch.no_grad():

mr = sample[0].float().to(device)

ct = sample[1].float().to(device)

pred = model(mr)

CT_synth = pred.detach().cpu().numpy().squeeze(1)

CT_sq = ct.detach().cpu().numpy().squeeze(1)

output.append(CT_synth)

"""Now recontruct the output"""

output = np.array(output) #(12,10,32,32,32)

output = output.reshape(-1,32,32,32) #(120,32,32,32)

output = torch.tensor(output)

output = output.unsqueeze(0) #(1,120,32,32,32)

unfold_shape = [1, 172 // 32, 220 // 32, 156 // 32, 32, 32, 32]

output_c = unfold_shape[1] * unfold_shape[4] #corresponding number of blocks x patch dimension

output_h = unfold_shape[2] * unfold_shape[5]

output_w = unfold_shape[3] * unfold_shape[6]

Orig = output.view(unfold_shape) # torch.Size([1, 5, 6, 4, 32, 32, 32])

Orig1 = Orig.permute(0, 1, 4, 2, 5, 3, 6).contiguous() #torch.Size([1, 5, 32, 6, 32, 4, 32])

Orig2 = Orig1.view(1, output_c, output_h, output_w) #torch.Size([1, 160, 192, 128])

Orig3 = Orig2.squeeze(0) #torch.Size([160, 192, 128])

Orig4 = np.array(Orig3) #numpy.ndarray

The error might come from the fact, that you cannot divide the input into 32-sized patches.

Try to pad the input before unfolding it:

x = torch.randn(1, 172, 220, 156)

kc, kh, kw = 32, 32, 32 # kernel size

dc, dh, dw = 32, 32, 32 # stride

# Pad to multiples of 32

x = F.pad(x, (x.size(2)%kw // 2, x.size(2)%kw // 2,

x.size(1)%kh // 2, x.size(1)%kh // 2,

x.size(0)%kc // 2, x.size(0)%kc // 2))

patches = x.unfold(1, kc, dc).unfold(2, kh, dh).unfold(3, kw, dw)

unfold_shape = patches.size()

patches = patches.contiguous().view(-1, kc, kh, kw)

print(patches.shape)

# Reshape back

patches_orig = patches.view(unfold_shape)

output_c = unfold_shape[1] * unfold_shape[4]

output_h = unfold_shape[2] * unfold_shape[5]

output_w = unfold_shape[3] * unfold_shape[6]

patches_orig = patches_orig.permute(0, 1, 4, 2, 5, 3, 6).contiguous()

patches_orig = patches_orig.view(1, output_c, output_h, output_w)

# Check for equality

print((patches_orig == x[:, :output_c, :output_h, :output_w]).all())

> tensor(1, dtype=torch.uint8)

Hi @ptrblck

I performed the equality test on a CT image by padding, creating non-overlapping patches and reconstructing it back. The patches were correctly located.

I did the following:

- Padded the MR and CT images to the size of multiples of 32.

- Took the non-overlapping patches and created the test dataset.

- From the output reconstructed the image by connecting the patches.

But the same mismatches are found:

What might be the reason, am I not training the network enough? or shall I try overlapping patches and do intensity averaging in overlapping regions?

Oh, wait. The posted image is the result of your model, not the reconstructed pictures using the patches?

If so, I misunderstood this particular issue.

That would be a reasonable approach. The original UNet paper also used overlapping windows with good segmentation results. This is of course different to your approach, but I would assume it should smooth the image better.

Yes, these are the output of the network.

Sorry if I was not clear to you before. If I have to use overlapping patches and intensity averaging, do you have any source of such code?

Unfortunately not. While the original UNet paper used a sliding window approach, they have created segmentation masks, which is quite different to your MR output.

@ptrblck : Hi Sir,

Can you please share the code snipped for extracting random patches from 3D MRI Images using unfold option. Also, how can we view this patches and construct an image back from these patches.

It would be very helpful. I have seen multiple examples but i am not getting exact solution like while viewing everything becomes noisy thats why could you please help.

Thanks

Nitika

Hi I think the code you are looking for is shared above here: Creating nonoverlapping patches from 3D data and reshape them back to the image - #6 by ptrblck . It has both unfold and reshape options.

To view the patches in a software use ITKsnap or FSL. Or if you want to visualize in notebook, use itkwidgets and there should be a bunch of other visualization open-sourced software. If the image is same then you shouldn’t get any noisy/tiled reshape as I had. But I was using output of the network with non-overlap training anyway.

Dear Sir,

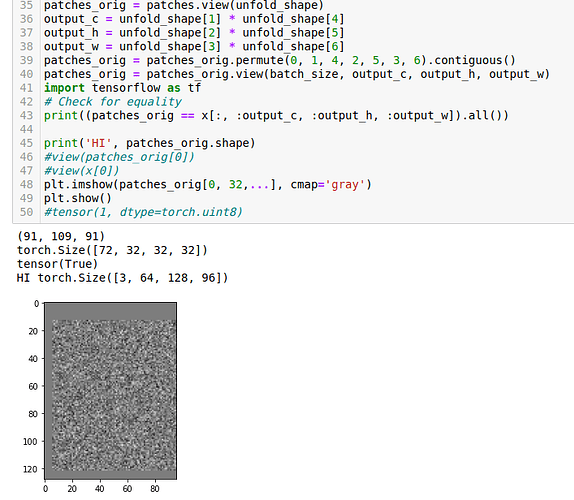

This is my code

import torch

import nibabel as nib

import matplotlib.pyplot as plt

tmap_img = nib.load(‘/home/nitika/PHD/rough/Image1.nii.gz’)

print(tmap_img.shape)

plt.imshow(tmap_img.get_fdata()[10])

S = tmap_img.shape[0] # channel dim

W = tmap_img.shape[1] # width

H = tmap_img.shape[2] # height

batch_size = 1

x = torch.randn(batch_size, S, W, H)

kc, kh, kw = 32, 32, 32 # kernel size

dc, dh, dw = 32, 32, 32 # stride

Pad to multiples of 32

x = F.pad(x, (x.size(2)%kw // 2, x.size(2)%kw // 2,

x.size(1)%kh // 2, x.size(1)%kh // 2,

x.size(0)%kc // 2, x.size(0)%kc // 2))

patches = x.unfold(1, kc, dc).unfold(2, kh, dh).unfold(3, kw, dw)

unfold_shape = patches.size()

patches = patches.contiguous().view(-1, kc, kh, kw)

print(patches.shape)

Reshape back

patches_orig = patches.view(unfold_shape)

output_c = unfold_shape[1] * unfold_shape[4]

output_h = unfold_shape[2] * unfold_shape[5]

output_w = unfold_shape[3] * unfold_shape[6]

patches_orig = patches_orig.permute(0, 1, 4, 2, 5, 3, 6).contiguous()

patches_orig = patches_orig.view(1, output_c, output_h, output_w)

import tensorflow as tf

Check for equality

print((patches_orig == x[:, :output_c, :output_h, :output_w]).all())

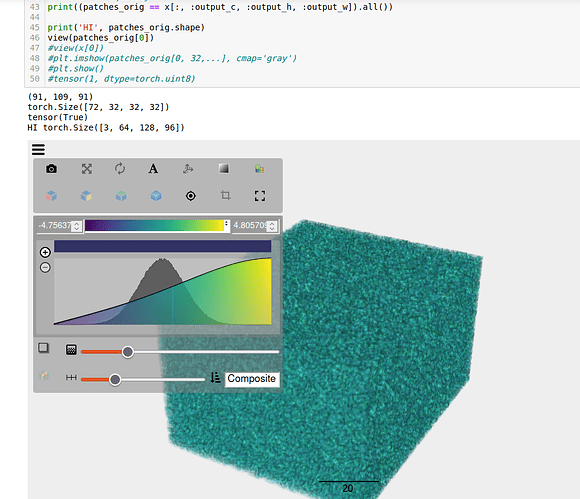

print(‘HI’, patches_orig.shape)

#============PLease help here how to view this original image reconstructed in python notebook====

#plt.imshow(patches_orig) ==> THrows an error ypeError: Invalid shape (1, 64, 128, 96) for image data

#tensor(1, dtype=torch.uint8)

and this is output

(91, 109, 91)

torch.Size([24, 32, 32, 32])

tensor(True)

HI torch.Size([1, 64, 128, 96])

also one image is displayed

Can you please help in last section of verifying original image

#============PLease help here how to view this original image reconstructed in python notebook====

#plt.imshow(patches_orig) ==> THrows an error ypeError: Invalid shape (1, 64, 128, 96) for image data

Also how can we send these patches directly to 3D -CNN.

It would be very helpful if you can guide please.

You can’t visualize more than 3 channels by matplotlib imshow here channel is 64. But you can visualize 2D slices to check the image.

plt.imshow(patches_orig[0, 32,...], cmap='gray')

plt.show()

Or if you are using notebook IDE, follow this tutorial to visualize 3D images.

After that, you have to create Dataset and DataLoader to make the images iterable, convert to tensor, and other augmentations/transformations to make it as following structure:

Batch_size, modality, H, W, D

If you are using only MR or T1 the modality should be 1.

Check out PyTorch and other tutorials for these basic things.

Sending the Dataloader sample patches to 3D CNN depends on what kind of CNN or network architecture you will be using and also what your application is.

For example, in the case of 3D UNet, the image sizes have to be divisible by 2 and have to be 4/5 magnitude multiples of 2 considering maxpool operation.

For example, in 2D UNet 224, 224 is a preferred dimension since both dimensions are divisible by 2: 224>112>64>32>16 …

Once you have the network chosen, you can easily feed the images as following:

input, target = next(iter(Dataloader)) # taking one sample

model = Chosen_CNN(**args)

output = model(input)

Also, I didn’t mention loss criteria, optimizers, and metric stuff that comes into the broad context.

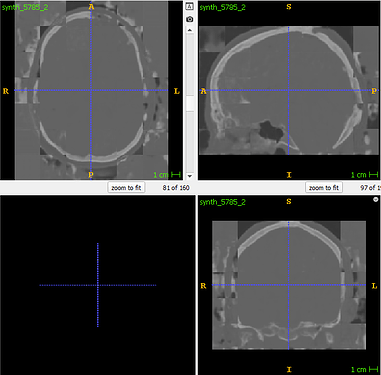

Sir… after using itkwidgets view method i am getting this image but it is not MRI Image.

Both view(patches_orig[0]) and view(x[0]) returns the same image.

Can you please guide on this.

Also i have doubt that for every MRI Image we are just passing its dimensions in unfold function, how will it make difference for multiple MRI Image and multiple classes.

Can you share the sample data you are visualizing in ITKwidgets. I am not an expert in ITKwidgets but I can visualize in other open source software and provide you feedback.

Have you tried plotting a single plane/slice as I had mentioned?

Yes with that also same type of image is coming.

I am attaching the output as well as Python code file.

This text will be hidden

import torch

import nibabel as nib

import matplotlib.pyplot as plt

from itkwidgets import view

from IPython.display import display

import ipyvolume as ipv

import torch.nn.functional as F

import itk

tmap_img = nib.load(‘/home/nitika/PHD/rough/Image1.nii.gz’)

print(tmap_img.shape)

#plt.imshow(tmap_img.get_fdata()[10])

S = tmap_img.shape[0] # channel dim

W = tmap_img.shape[1] # width

H = tmap_img.shape[2] # height

batch_size = 3

x = torch.randn(batch_size, S, W, H)

kc, kh, kw = 32, 32, 32 # kernel size

dc, dh, dw = 32, 32, 32 # stride

Pad to multiples of 32

x = F.pad(x, (x.size(2)%kw // 2, x.size(2)%kw // 2,

x.size(1)%kh // 2, x.size(1)%kh // 2,

x.size(0)%kc // 2, x.size(0)%kc // 2))

patches = x.unfold(1, kc, dc).unfold(2, kh, dh).unfold(3, kw, dw)

unfold_shape = patches.size()

view(unfold_shape)

patches = patches.contiguous().view(-1, kc, kh, kw)

print(patches.shape)

Reshape back

patches_orig = patches.view(unfold_shape)

output_c = unfold_shape[1] * unfold_shape[4]

output_h = unfold_shape[2] * unfold_shape[5]

output_w = unfold_shape[3] * unfold_shape[6]

patches_orig = patches_orig.permute(0, 1, 4, 2, 5, 3, 6).contiguous()

patches_orig = patches_orig.view(batch_size, output_c, output_h, output_w)

import tensorflow as tf

Check for equality

print((patches_orig == x[:, :output_c, :output_h, :output_w]).all())

print(‘HI’, patches_orig.shape)

#view(patches_orig[0])

#view(x[0])

plt.imshow(patches_orig[0, 32,…], cmap=‘gray’)

plt.show()

#tensor(1, dtype=torch.uint8)

Hi, check the notebook here: DebugTorch/Create_3D_patches_unfold.ipynb at master · ranabanik/DebugTorch · GitHub

It is nothing new from what ptrblck suggested, I just tried to organize codes and visualize.

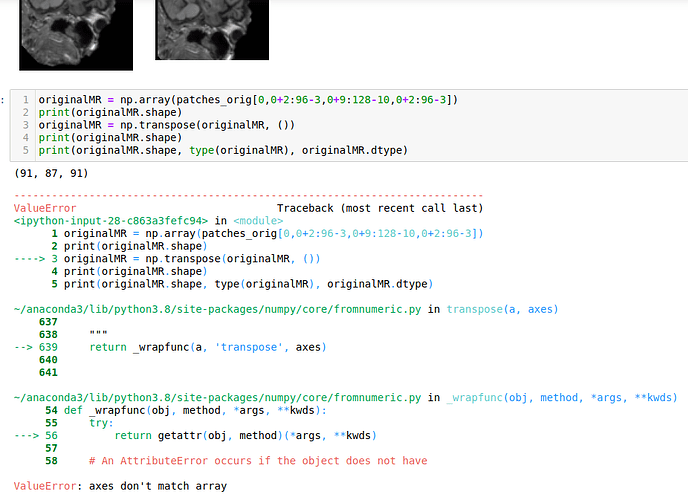

Hi Sir… Most of the code ran properly except the last part.

Can you please tell whats the importance of this part : 0,0+2:96-3,0+9:128-10,0+2:96-3]

originalMR = np.array(patches_orig[0,0+2:96-3,0+9:128-10,0+2:96-3])

originalMR = np.transpose(originalMR, ())

print(originalMR.shape, type(originalMR), originalMR.dtype)

since its returning error and not matching with original shape.

To crop out the original size MR from padded dimensions.

Comment out this line any transpose operation in the code. Should be fine.

Or check the git link, I have updated.