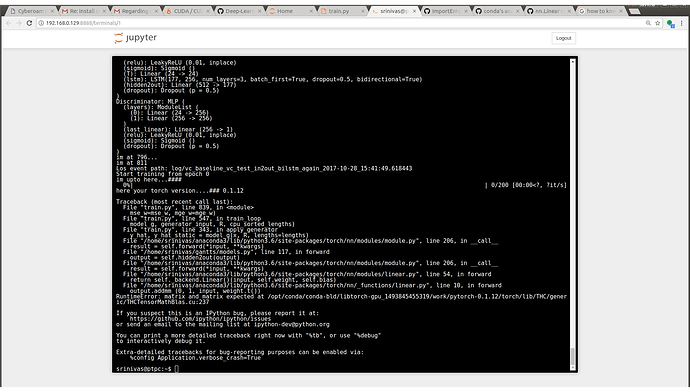

I’m trying to run an LSTM batch on a local GPU and I’m getting the following warning:

/home/xxx/.conda/envs/torchenv/lib/python3.6/site-packages/torch/backends/cudnn/__init__.py:40: UserWarning: PyTorch was compiled without cuDNN support. To use cuDNN, rebuild PyTorch making sure the library is visible to the build system. "PyTorch was compiled without cuDNN support. To use cuDNN, rebuild "

These are the relevant packages in my environment:

(torchenv) [~/git/nn]$ conda list

cuda80 1.0 0 soumith

cudatoolkit 7.5 2

cudnn 6.0.21 cuda7.5_0

pytorch 0.1.12 py36cuda7.5cudnn6.0_1
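The build strings in that listing seem to encode which CUDA / cuDNN versions each package was built for. Here is a minimal sketch for pulling those out, assuming the `cudaX.Y` / `cudnnX.Y` naming seen above holds in general (`parse_build` is just a helper name I made up, not part of conda):

```python
import re


def parse_build(build):
    """Extract CUDA and cuDNN versions from a conda build string, if present.

    Assumes the 'cuda<version>' / 'cudnn<version>' pattern seen in my
    'conda list' output; other packages may name their builds differently.
    """
    cuda = re.search(r"cuda(\d+(?:\.\d+)?)", build)
    cudnn = re.search(r"cudnn(\d+(?:\.\d+)?)", build)
    return {
        "cuda": cuda.group(1) if cuda else None,
        "cudnn": cudnn.group(1) if cudnn else None,
    }


# Build strings copied from the listing above.
print("pytorch:", parse_build("py36cuda7.5cudnn6.0_1"))
print("cudnn:  ", parse_build("cuda7.5_0"))
```

This only reads what the package names claim; it says nothing about which libraries are actually loaded at runtime.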

In [2]: torch.backends.cudnn.is_acceptable(torch.cuda.FloatTensor(1))

False

In [3]: print(torch.backends.cudnn.version())

None

In [4]: print(torch.cuda.is_available())

True
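For anyone who wants to reproduce this, the checks above can be collected into one script. This is only a sketch: `cudnn_report` is a name I made up, and it only uses the calls shown in my session plus `torch.__version__`.

```python
import importlib


def cudnn_report():
    """Collect the checks from the interactive session into one dict."""
    report = {"torch_installed": False}
    try:
        # Imported lazily so the script still runs where PyTorch is missing.
        torch = importlib.import_module("torch")
    except ImportError:
        return report

    report["torch_installed"] = True
    report["torch_version"] = torch.__version__
    # True when PyTorch can see a usable CUDA device.
    report["cuda_available"] = torch.cuda.is_available()
    # None when PyTorch cannot find or was built without cuDNN.
    report["cudnn_version"] = torch.backends.cudnn.version()
    return report


if __name__ == "__main__":
    for key, value in cudnn_report().items():
        print("{}: {}".format(key, value))
```

Running it inside the `torchenv` environment should show the same `cuda_available` and `cudnn_version` values as the session above.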

So CUDA and cuDNN are installed, but it looks like PyTorch was built against an older version than the one on my system. A few beginner questions that I can’t find answers to in other threads here:

- Am I seeing the “compiled without cuDNN support” errors because of version mismatch between build and visible versions?

- If so, what is the best way to solve this? Should I uninstall pytorch and build from source? Or is there an organic way to do this using conda?

- What are some good practices to keep a local node / cluster up to date and avoid these mismatches?

Using Linux 4.12.4-1-ARCH fwiw.

Thanks!