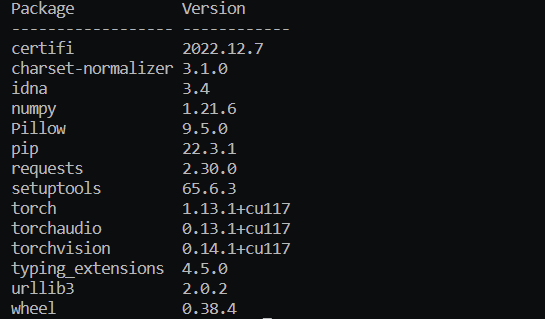

I’m using CUDA in a Docker container with 2 NVIDIA V100 GPUs. My PyTorch installation is torch==1.13.1+cu117. This version previously worked fine, but I recently installed it in a new Docker container, and there torch.cuda.is_available() returns False. Here is some basic information about the machine and the PyTorch installation status:

    torch        1.13.1+cu117
    torchaudio   0.13.1
    torchvision  0.14.1

nvidia-smi result:

    Fri May 19 16:01:30 2023
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 470.82.01    Driver Version: 470.82.01    CUDA Version: ERR!     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  Tesla V100-SXM2...  On   | 00000000:3D:00.0 Off |                    0 |
    | N/A   33C    P0    41W / 300W |      0MiB / 32510MiB |      0%      Default |
    |                               |                      |                  N/A |
    +-------------------------------+----------------------+----------------------+
    |   1  Tesla V100-SXM2...  On   | 00000000:3E:00.0 Off |                    0 |
    | N/A   30C    P0    42W / 300W |      0MiB / 32510MiB |      0%      Default |
    |                               |                      |                  N/A |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                                  |
    |  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |        ID   ID                                                   Usage      |
    |=============================================================================|
    |  No running processes found                                                 |
    +-----------------------------------------------------------------------------+

nvcc --version result:

    nvcc: NVIDIA (R) Cuda compiler driver
    Copyright (c) 2005-2018 NVIDIA Corporation
    Built on Sat_Aug_25_21:08:01_CDT_2018
    Cuda compilation tools, release 10.0, V10.0.130

Note that nvidia-smi shows ERR! for the CUDA Version field. Could that be the root cause of this problem?
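In case it helps narrow this down, here is a minimal sketch of a check that could be run inside the container. It is only an illustration: the function name `collect_cuda_diagnostics` is made up for this post, and it assumes a Linux container where the driver library, if present, is exposed as `libcuda.so.1`. It first asks the driver library directly (via `cuInit` and `cuDriverGetVersion`), then reports what PyTorch itself sees. As far as I understand, the pip wheels tagged `+cu117` bundle their own CUDA runtime, so the system nvcc 10.0 above is probably not what torch uses; the driver library is the part that has to be visible in the container.

```python
import ctypes


def collect_cuda_diagnostics():
    """Gather facts relevant to torch.cuda.is_available() returning False."""
    info = {}

    # Step 1: can the NVIDIA driver library be loaded in this environment?
    # (Assumption: Linux, with the driver exposed as libcuda.so.1.)
    try:
        libcuda = ctypes.CDLL("libcuda.so.1")
        info["libcuda_loadable"] = True
    except OSError:
        libcuda = None
        info["libcuda_loadable"] = False

    if libcuda is not None:
        # cuInit(0) returns 0 (CUDA_SUCCESS) when the driver initialises.
        info["cuInit_status"] = int(libcuda.cuInit(0))
        version = ctypes.c_int(0)
        if libcuda.cuDriverGetVersion(ctypes.byref(version)) == 0:
            # Encoded as 1000 * major + 10 * minor.
            info["driver_cuda_version"] = version.value

    # Step 2: what does PyTorch report?
    try:
        import torch
    except ImportError:
        info["torch_installed"] = False
        return info

    info["torch_installed"] = True
    info["torch_version"] = torch.__version__
    info["torch_built_with_cuda"] = torch.version.cuda  # None on CPU-only builds
    info["cuda_available"] = torch.cuda.is_available()
    info["device_count"] = torch.cuda.device_count()
    return info


if __name__ == "__main__":
    for key, value in collect_cuda_diagnostics().items():
        print(f"{key}: {value}")
```

If `libcuda_loadable` is False or `cuInit_status` is non-zero, that would point at how the driver is exposed to the container rather than at the torch installation itself, which would be consistent with nvidia-smi printing ERR! for the CUDA version.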