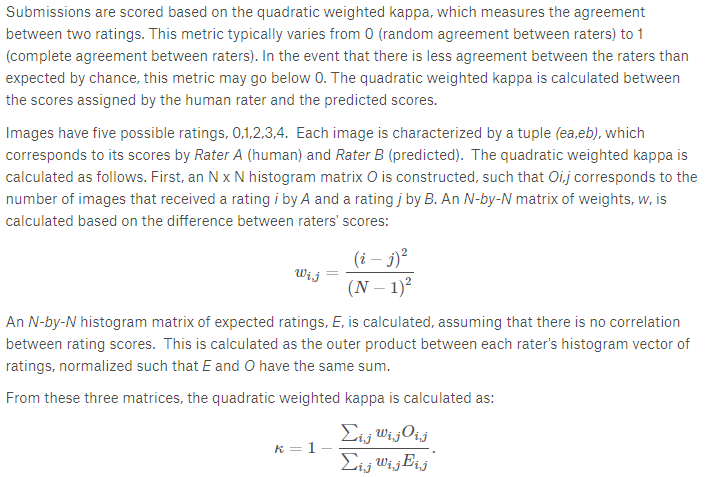

Hello all, I'm getting used to PyTorch and trying to figure out how to write custom loss functions that are a bit more complicated than MSE. For instance, the quadratic weighted kappa score that was used a few years back in the Kaggle competition Diabetic Retinopathy Detection.

Thanks!!!

Have a look here, where someone implemented a soft (differentiable) version of the quadratic weighted kappa in XGBoost.

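In general, for backprop optimization, you need a loss function that is differentiable, so that you can compute gradients and update the weights in the model. The raw kappa score is computed from discrete predicted labels, so it is piecewise constant in the model outputs and its gradient is zero almost everywhere. This is why the raw function itself cannot be used directly as a loss.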
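To make that concrete in PyTorch, here is a minimal sketch of one possible soft version. It is not the XGBoost implementation mentioned above; it simply swaps the hard confusion matrix for one built from softmax probabilities, so every step stays differentiable. The function name `soft_qwk_loss`, the `eps` parameter, and the choice to return `1 - kappa` are my own assumptions for illustration, and it assumes at least two classes with integer labels.

```python
import torch
import torch.nn.functional as F


def soft_qwk_loss(logits: torch.Tensor, targets: torch.Tensor, eps: float = 1e-10) -> torch.Tensor:
    """Hypothetical differentiable surrogate for 1 - quadratic weighted kappa.

    logits:  (N, C) raw class scores, C >= 2
    targets: (N,) integer (long) labels in [0, C - 1]
    """
    num_samples, num_classes = logits.shape

    # Soft predictions instead of argmax, so gradients can flow.
    probs = F.softmax(logits, dim=1)
    onehot = F.one_hot(targets, num_classes).to(probs.dtype)

    # Quadratic disagreement weights: w[i, j] = (i - j)^2 / (C - 1)^2
    idx = torch.arange(num_classes, dtype=probs.dtype, device=probs.device)
    weights = (idx[:, None] - idx[None, :]) ** 2 / (num_classes - 1) ** 2

    # Soft confusion matrix: rows are true classes, columns are predicted.
    observed = onehot.t() @ probs

    # Expected matrix if true and predicted labels were independent.
    hist_true = onehot.sum(dim=0)
    hist_pred = probs.sum(dim=0)
    expected = torch.outer(hist_true, hist_pred) / num_samples

    # kappa = 1 - sum(w * O) / sum(w * E), so this ratio equals 1 - kappa.
    return (weights * observed).sum() / ((weights * expected).sum() + eps)
```

You would call it like any other loss, e.g. `loss = soft_qwk_loss(model(x), y)` followed by `loss.backward()`. Since it is a plain function built from differentiable tensor ops, autograd handles the backward pass and no custom `backward` is needed. The value is near 0 for perfect agreement and grows as predictions disagree with the labels.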
